Flaky tests are a common and costly pain point for teams using Playwright.

A flaky test produces inconsistent results on the same code: one run passes and the next fails, even though nothing changed.
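To make that definition concrete, here is a minimal sketch that classifies a test from the outcomes of repeated runs against unchanged code. The names `Outcome`, `Verdict`, and `classifyRuns` are hypothetical and chosen for illustration; they are not part of Playwright or Playwright MCP.

```typescript
// Hypothetical types for illustration only (not a Playwright API).
type Outcome = "passed" | "failed";
type Verdict = "stable-pass" | "stable-fail" | "flaky";

// Classify a test from the outcomes of repeated runs on unchanged code.
// Mixed outcomes mean the test is flaky.
function classifyRuns(outcomes: Outcome[]): Verdict {
  if (outcomes.length === 0) {
    throw new Error("need at least one run to classify a test");
  }
  const passes = outcomes.filter((o) => o === "passed").length;
  if (passes === outcomes.length) return "stable-pass";
  if (passes === 0) return "stable-fail";
  return "flaky";
}

console.log(classifyRuns(["passed", "failed", "passed"])); // flaky
```

A test that fails on every run is broken, not flaky. Only mixed outcomes on identical code count as flakiness.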

Playwright MCP flaky test detection can help you remove this inconsistency from your test suite.

Flakiness wastes time, frustrates developers, and makes CI feedback unreliable.

Teams lose trust in their automation when they can't tell real bugs from false alarms.

Many of these failures come from timing issues, unstable selectors, or race conditions in modern web applications. Traditional fixes like retries or manual waits often mask the problem instead of resolving it.
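As a rough illustration of why a manual wait masks a timing issue, the sketch below polls a condition until it holds instead of sleeping for a fixed duration. `waitUntil` is a hypothetical helper written for this example, not a Playwright API; Playwright's own auto-waiting locators and web-first assertions follow the same idea.

```typescript
// Hypothetical helper: poll a condition until it holds or the timeout elapses.
// A fixed sleep guesses how long the app needs; polling reacts to actual state.
async function waitUntil(
  condition: () => boolean | Promise<boolean>,
  timeoutMs: number = 5000,
  intervalMs: number = 50,
): Promise<void> {
  const deadline = Date.now() + timeoutMs;
  while (true) {
    if (await condition()) return;
    if (Date.now() >= deadline) {
      throw new Error(`condition not met within ${timeoutMs} ms`);
    }
    await new Promise<void>((resolve) => setTimeout(resolve, intervalMs));
  }
}

// Usage: simulate an element that becomes ready after an unpredictable delay.
let ready = false;
setTimeout(() => { ready = true; }, 200);
waitUntil(() => ready, 2000).then(() => console.log("ready"));
```

A fixed sleep shorter than the real delay fails intermittently, and a longer one slows every run. Polling returns as soon as the condition holds and fails with a clear error when it never does.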

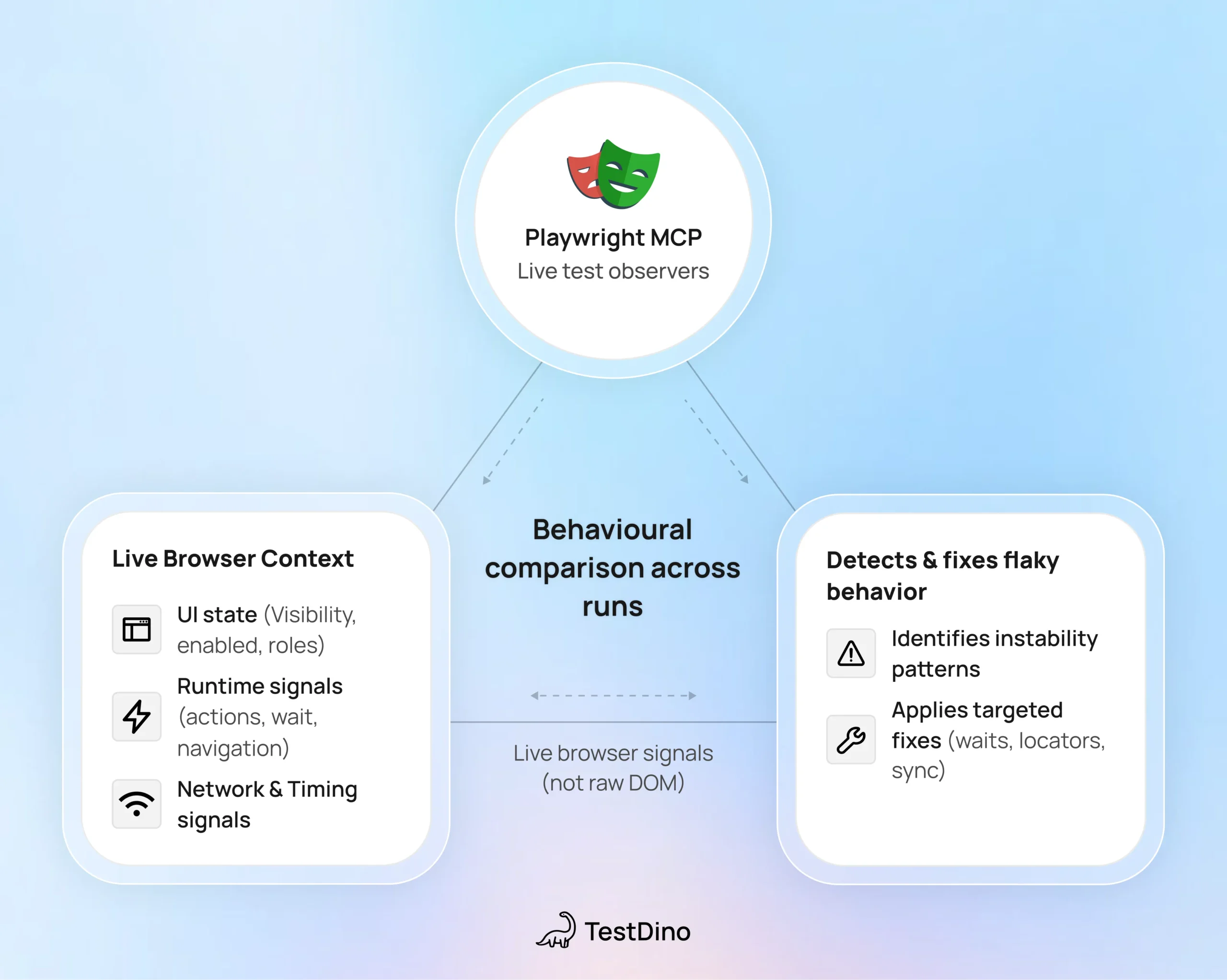

Playwright MCP provides a practical way to reduce flaky tests.

It detects unstable behavior during execution and helps apply targeted fixes, such as smarter waits and resilient locators, so teams can build more stable test suites with less confusion.

Let’s first look at the framework and technology behind it.