State of test automation in 2026


Test automation in 2026 is no longer experimental or optional. Teams increasingly rely on automation tools and frameworks to meet delivery demands.

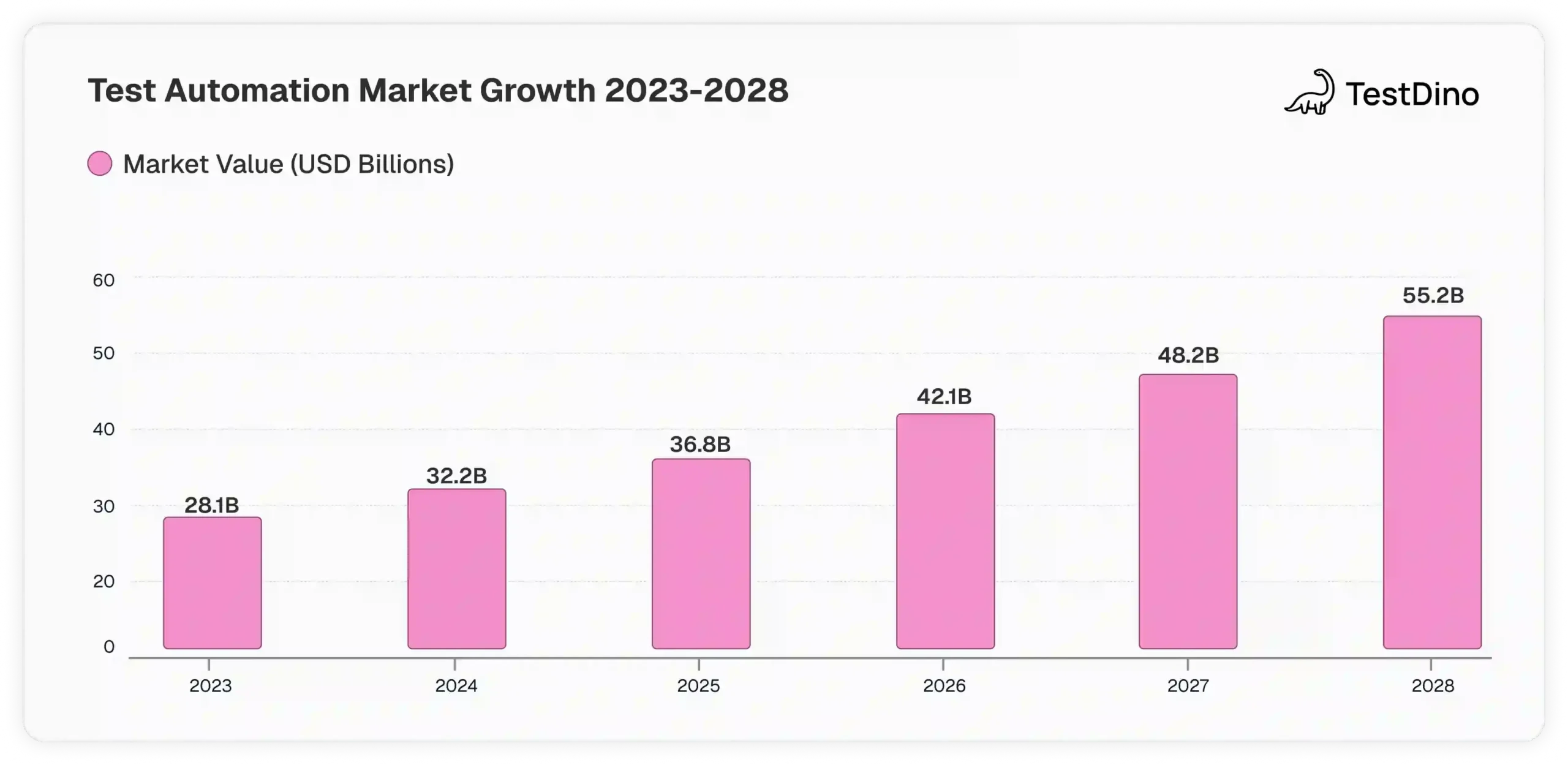

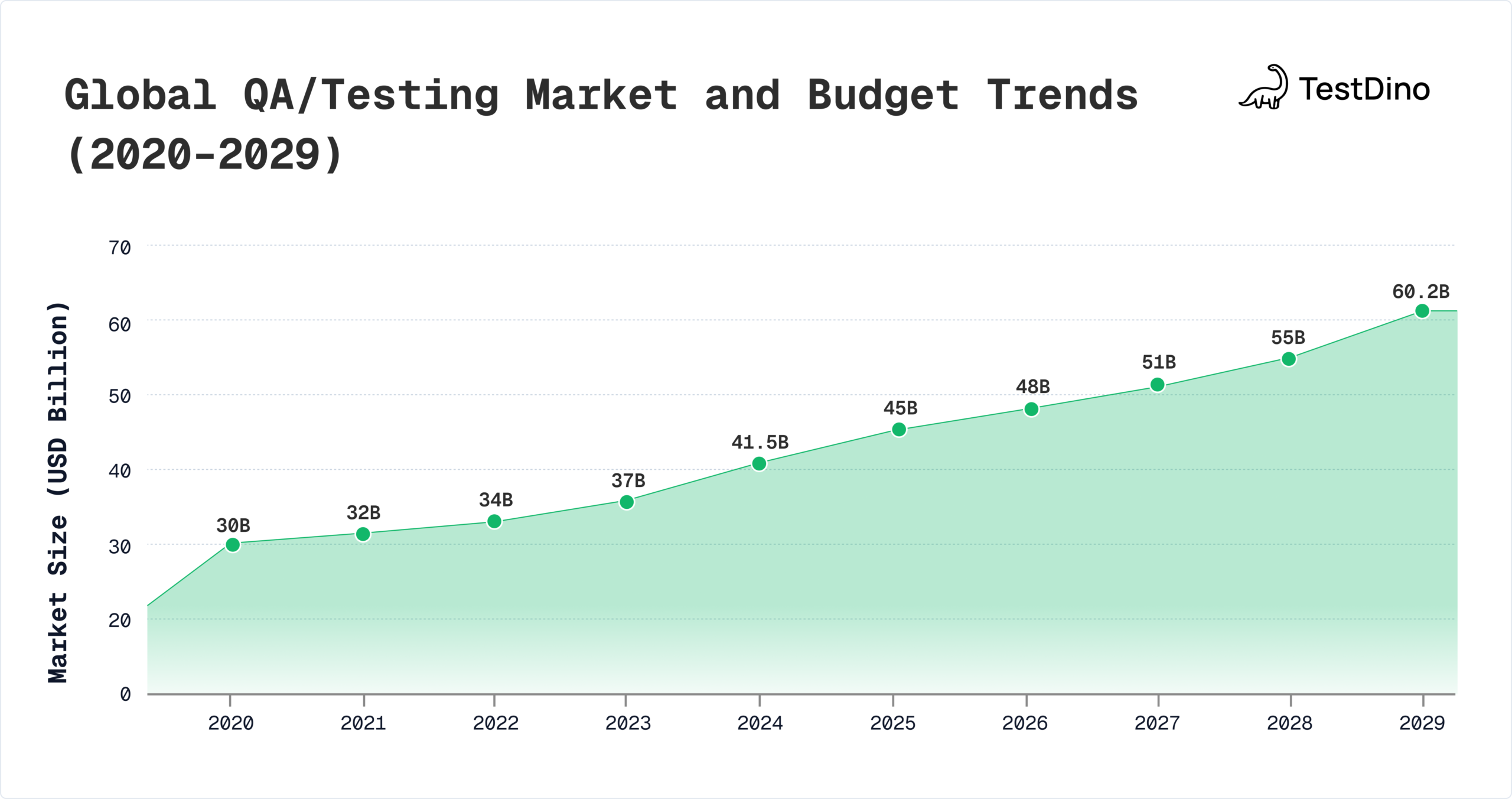

The global QA market now exceeds USD 41.5 billion and is projected to reach USD 60.2 billion by 2029, while the automation segment alone is set to nearly double from USD 28.1 billion in 2023 to USD 55.2 billion by 2028.

At the same time, adoption of AI-driven capabilities has surged: 74% of enterprises use AI in testing according to McKinsey’s 2025 study, which is driving the rise of AI-based testing and self-healing capabilities built into frameworks.

This report compiles verified data from MarketsandMarkets, Gartner, McKinsey, and GitHub to highlight how automation frameworks, AI-assisted tooling, cloud execution, and DevOps integration are reshaping software quality worldwide.

Whether you are selecting frameworks like Playwright, scaling automation coverage, or planning AI-based efficiency gains, the data here provides the context needed for clear strategy and informed decisions, especially for teams running CI/CD pipelines.

1. Global Market Size and Growth

The test automation market isn't just growing; it is expanding rapidly, according to projections from established industry analysts. Wider use of automated testing, combined with more capable automation frameworks, is accelerating adoption across sectors.

According to MarketsandMarkets research, the automation testing market was valued at USD 28.1 billion in 2023 and is projected to reach USD 55.2 billion by 2028. That's essentially doubling in just five years.

The broader software testing market is larger still, exceeding USD 54.68 billion in 2025. That figure covers both automated and manual testing across all industries, including regression testing, API testing, and performance testing.

Market Drivers

Three primary factors accelerate adoption:

- Digital transformation mandates requiring faster release cycles

- Cloud migration initiatives demanding scalable testing infrastructure

- AI integration capabilities reducing maintenance overhead, especially within Automation Tools

These numbers reflect real enterprise spending, not market hype. Companies are voting with their budgets, choosing automation for competitive advantage and deeper test maturity across end-to-end testing and mobile automation.

2. AI and Machine Learning in Testing

AI has moved from "interesting concept" to "must-have capability" in record time. The data backs this up, especially with the rise of AI-based testing, predictive analytics, and self-healing test scripts.

McKinsey's State of AI 2025 report shows 74% of enterprises using AI in development and testing workflows, up from 41% in 2023.

Organizations implementing AI-driven testing report measurable improvements in defect detection (25–38%) and release velocity (20–30%). The gains depend on maturity and are strongest when AI is integrated with QA Ops pipelines and a well-tuned automation framework.

How AI is Changing QA Right Now

AI is reshaping modern testing in several practical ways. Test scripts can now self-heal by automatically updating when application elements change, reducing the burden of manual maintenance.

These self-healing techniques pair well with the automation frameworks already used in enterprise pipelines.

In production environments, early adopters report 35–50% fewer broken tests per release simply from self-healing.
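The self-healing idea can be sketched as a locator with ordered fallbacks. This is a minimal illustration under stated assumptions, not the mechanism of any specific tool: the page is modelled as a list of attribute dictionaries, and `find_with_healing`, the element attributes, and the selector values are all hypothetical.

```python
from typing import Optional

# Hypothetical, simplified page model: each element is a dict of attributes.
Page = list[dict[str, str]]
Locator = tuple[str, str]  # (attribute name, expected value)


def find_with_healing(
    page: Page, locators: list[Locator]
) -> Optional[tuple[dict[str, str], Locator]]:
    """Try each locator in priority order.

    Returns the matched element and the locator that matched, or None.
    """
    for attribute, value in locators:
        for element in page:
            if element.get(attribute) == value:
                return element, (attribute, value)
    return None


# After a release, the button id changed from "submit-btn" to "submit-button".
page_after_release: Page = [
    {"id": "email", "tag": "input"},
    {
        "id": "submit-button",
        "tag": "button",
        "text": "Sign in",
        "data-testid": "login-submit",
    },
]

locators: list[Locator] = [
    ("id", "submit-btn"),             # original locator, now stale
    ("data-testid", "login-submit"),  # first fallback
    ("text", "Sign in"),              # second fallback
]

result = find_with_healing(page_after_release, locators)
if result is not None:
    element, used = result
    if used != locators[0]:
        # A real tool would log this and propose updating the stored locator.
        print(f"healed: primary locator failed, matched via {used}")
```

The test keeps running because a fallback matched, and the healing event is surfaced so a person can confirm that the new match is the intended element.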

Large language models generate test cases that expand coverage without expanding execution workloads. Predictive analytics identify high-risk coverage areas and trim regression testing suites, reducing redundant runs.

In controlled pilots, LLM-generated test coverage has expanded functional scenarios by 22–30% without extending execution workloads.

Predictive analytics are emerging as a valuable tool for identifying which tests are most likely to detect defects before execution, allowing teams to prioritize more effectively.

A growing number of QA teams say predictive ranking is cutting redundant suite execution by 18–25%.

In addition, intelligent test selection is significantly cutting execution time, often by 40–60%, especially in large regression environments where not all tests need to run.
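Test selection and predictive ranking can be combined in a few lines. The sketch below assumes that per-test coverage data and pass/fail history are already available; `CaseHistory`, `select_tests`, and all sample values are hypothetical, and the failure-rate heuristic stands in for whatever model a real tool would use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CaseHistory:
    name: str
    covered_files: frozenset[str]  # files the test exercises (assumed known)
    runs: int                      # historical executions
    failures: int                  # historical failures

    @property
    def failure_rate(self) -> float:
        return self.failures / self.runs if self.runs else 0.0


def select_tests(tests: list[CaseHistory], changed_files: set[str]) -> list[CaseHistory]:
    """Keep only tests that touch a changed file, most failure-prone first."""
    impacted = [t for t in tests if t.covered_files & changed_files]
    return sorted(impacted, key=lambda t: t.failure_rate, reverse=True)


tests = [
    CaseHistory("test_login", frozenset({"auth.py"}), runs=200, failures=10),
    CaseHistory("test_checkout", frozenset({"cart.py", "payment.py"}), runs=200, failures=30),
    CaseHistory("test_profile", frozenset({"profile.py"}), runs=200, failures=2),
    CaseHistory("test_refund", frozenset({"payment.py"}), runs=100, failures=5),
]

selected = select_tests(tests, changed_files={"payment.py"})
for case in selected:
    print(case.name, f"{case.failure_rate:.0%}")
```

With this sample data, a change to `payment.py` selects two of the four tests, and the one with the worse failure history runs first.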

These advancements demonstrate how AI-powered testing tools are transforming QA practices, enabling organizations to reduce maintenance effort by 30–45% while expanding coverage across complex scenarios, including API testing, headless testing, and dynamic end-to-end testing.

Agentic AI-powered test automation platforms like BotGauge reduce test maintenance overhead by using intelligent self-healing mechanisms that adapt tests to application changes automatically.

The Reality Check

However, despite these promising capabilities, AI testing still faces important practical constraints.

Accuracy limitations mean that human validation remains essential. The technology is not yet reliable enough for complete autonomy, even with the best automation tools.

In industry benchmarks, AI classification accuracy still ranges from 70% to 85%, meaning manual oversight is required for the remaining 15–30% of cases.
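One common way to keep a person in the loop is to accept only high-confidence AI classifications automatically and route everything else to manual review. The sketch below is an assumption about how such routing might look; `Classification`, `route`, and the 0.85 threshold are hypothetical and are not derived from the benchmark figures above.

```python
from dataclasses import dataclass


@dataclass
class Classification:
    test_name: str
    label: str         # e.g. "product bug", "flaky", "environment"
    confidence: float  # model-reported score in [0, 1]


def route(
    results: list[Classification], threshold: float = 0.85
) -> tuple[list[Classification], list[Classification]]:
    """Split results into (auto-accepted, needs human review)."""
    auto: list[Classification] = []
    manual: list[Classification] = []
    for item in results:
        (auto if item.confidence >= threshold else manual).append(item)
    return auto, manual


results = [
    Classification("test_checkout", "product bug", 0.93),
    Classification("test_search", "flaky", 0.71),
    Classification("test_upload", "environment", 0.88),
    Classification("test_login", "product bug", 0.62),
]

auto, manual = route(results)
print("auto-accepted:", [r.test_name for r in auto])
print("manual review:", [r.test_name for r in manual])
```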

Integrating AI into existing frameworks can also be complex and time-consuming.

Surveys show 58% of teams struggle with integration, and 46% report that onboarding takes longer than expected because frameworks are not standardized.

Moreover, AI systems require higher-than-expected data quality to perform effectively, which makes test data management a critical practice inside mature QA teams.

In deployments with noisy data or poorly labeled failures, accuracy drops by 20–25%, negating the benefits.

For these reasons, organizations must balance the potential of AI with the realities of implementation. The technology is powerful, but it is not a magic solution.

3. Framework and Tool Selection Trends

Choosing the right test automation framework is increasingly strategic, and adoption signals across the community are stronger than ever.

Modern automation frameworks like Playwright, Selenium, and Cypress are central to most automated testing strategies.

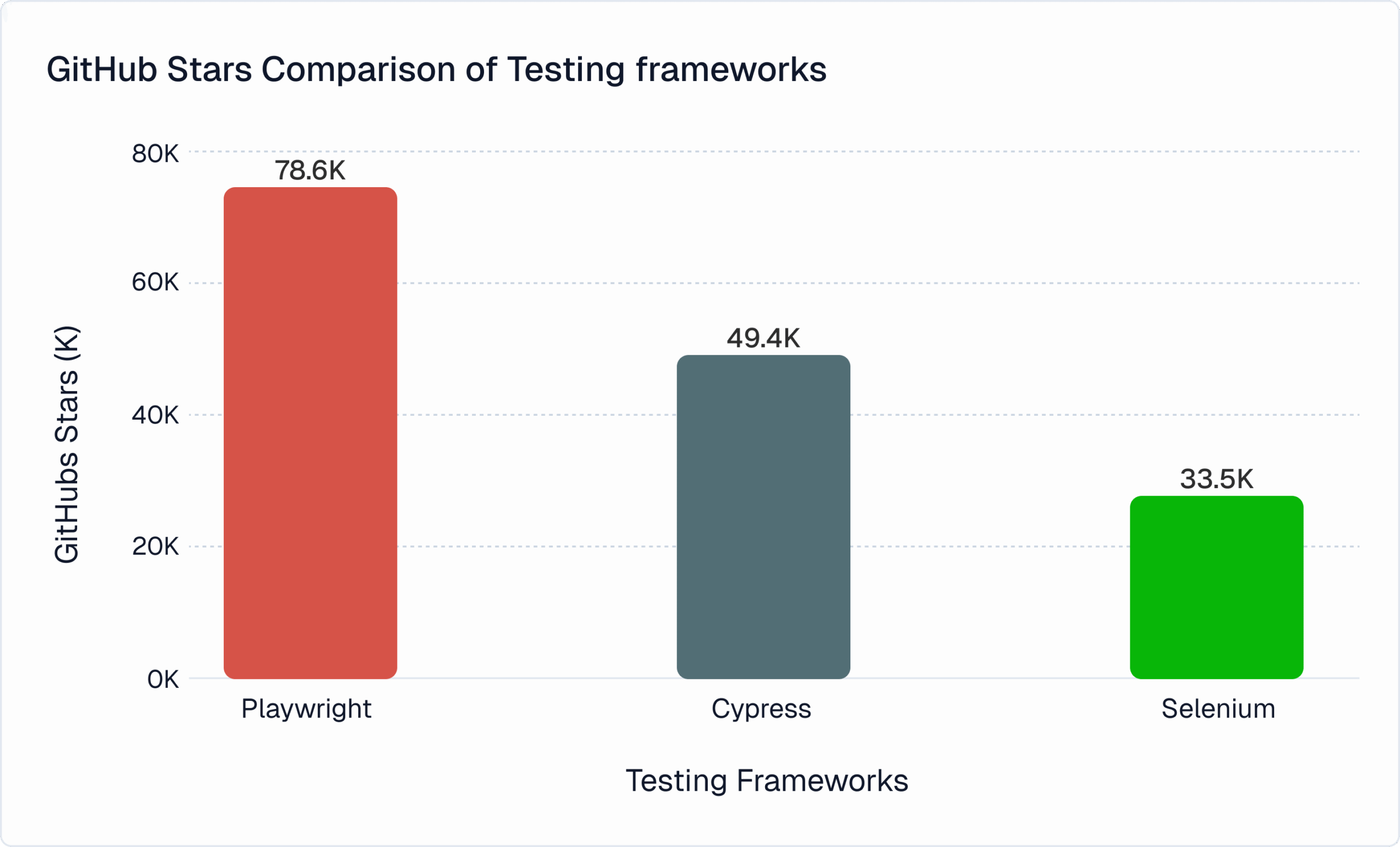

GitHub repository metrics provide concrete adoption signals. Playwright has accumulated roughly 80,000 stars, indicating strong developer interest and community engagement.

Framework Adoption Signals

| Framework | GitHub Stars | Primary Use Case | Key Advantage |
|---|---|---|---|
| Playwright | 80,100+ | Cross-browser testing | Modern API design |
| Selenium | 33,800+ | Web automation | Broad language support |
| Cypress | 49,500+ | JavaScript testing | Developer experience |

Beyond stars, npm download stats offer another signal:

- Playwright downloads grew ~240% year-over-year

- Cypress usage saw ~130% growth

- Selenium remains steady due to large enterprise bases

Framework Selection Criteria

Organizations typically evaluate frameworks based on:

- Browser and platform coverage

- Language compatibility with internal dev teams

- CI pipeline integration and execution performance

- Ecosystem maturity, including plugins and community support

Surveys show 62% of teams prioritize tooling that works cleanly with CI/CD, and 58% want strong ecosystem maturity rather than a single standalone feature set.

The right choice depends on specific requirements; there is no universal winner.
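A weighted scoring matrix is one simple way to turn the four criteria above into a comparable number. Everything in this sketch is a placeholder: the weights reflect one hypothetical team's priorities, and the 1–5 scores for "Framework A" and "Framework B" are invented for illustration and do not rate any real tool.

```python
# Weights express one hypothetical team's priorities and should sum to 1.0.
weights = {
    "browser_coverage": 0.30,
    "language_fit": 0.25,
    "ci_integration": 0.25,
    "ecosystem": 0.20,
}

# Scores (1-5) are placeholders, not measurements of any real framework.
candidates = {
    "Framework A": {"browser_coverage": 5, "language_fit": 3, "ci_integration": 4, "ecosystem": 3},
    "Framework B": {"browser_coverage": 3, "language_fit": 5, "ci_integration": 4, "ecosystem": 5},
}


def weighted_score(scores: dict[str, int], weights: dict[str, float]) -> float:
    """Sum of score * weight over every criterion."""
    return sum(weights[criterion] * scores[criterion] for criterion in weights)


ranking = sorted(
    candidates,
    key=lambda name: weighted_score(candidates[name], weights),
    reverse=True,
)
for name in ranking:
    print(f"{name}: {weighted_score(candidates[name], weights):.2f}")
```

Changing the weights changes the ranking, which is the point: the outcome depends on the team's requirements rather than on a universal winner.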

Modern Tool Ecosystem

Modern testing is not about picking one tool, but building an ecosystem. Teams rely on:

- Distributed execution platforms for scale

- Reporting and analytics for triage and debugging

- Test data management for consistent environments

- Monitoring and observability to validate system behavior

Recent studies indicate 71% of enterprises now use at least three specialized tools alongside their main automation framework.

That reflects what testing looks like today: coordinated, multi-layered, and deeply integrated with CI workflows.

4. Codeless Testing Platforms

Codeless testing platforms represent one of the fastest-growing segments, solving the shortage of skilled automation engineers.

According to Future Market Insights analysis, the codeless testing market was estimated at USD 2.7 billion in 2025 and is projected to reach USD 11.4 billion by 2035, reflecting approximately 15.6% CAGR.

Codeless Capabilities

Modern tools offer visual test builders, drag-and-drop flows, record-and-playback enhanced with element recognition, and even natural-language test definitions.

They integrate directly with CI/CD pipelines, support cross-platform testing, and often remove the need for maintaining separate codebases.

Target Users

Because of their simplicity, codeless platforms appeal to business analysts, manual testers transitioning into automation, small teams without dedicated automation engineers, or teams needing rapid prototyping.

But most organizations use codeless tools alongside code-based frameworks, because complex flows still require scripted tests.

This shift mirrors the broader expansion in test automation: the global automation testing market overall is forecast to grow from USD 28.1 billion in 2023 to USD 55.2 billion by 2028 (CAGR ~14.5%).
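The growth rates quoted in this section can be checked with the standard compound annual growth rate formula, (end / start)^(1 / years) − 1. The helper name `cagr` is ours; the inputs are the market figures cited above.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.10 means 10% per year)."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures in USD billions, as cited in the text.
automation = cagr(28.1, 55.2, years=2028 - 2023)
codeless = cagr(2.7, 11.4, years=2035 - 2025)

print(f"automation testing market: {automation:.1%}")
print(f"codeless testing market:   {codeless:.1%}")
```

Both results land within a few tenths of a percentage point of the rates quoted by the analysts, with the small differences attributable to rounding in the published market sizes.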

5. Enterprise Strategies and DevOps Integration

Enterprises continue to invest in automation, but execution gaps remain.

One study found 73% of test automation projects fail to deliver expected ROI, and 68% are abandoned within 18 months.

A major driver is that many teams still rely heavily on manual work. Katalon's 2025 survey shows 82% of testers use manual execution daily, and only ~45% automate regression.

The Coverage Gap

Many enterprises automate less than half of their test cases despite adopting modern tools, and this disconnect arises from several underlying challenges.

Technical debt often requires substantial refactoring before automation can be effective, while the ongoing maintenance burden can consume more than 20% of team resources.

Skills constraints also limit the ability to implement more advanced automation practices, and the complexity of achieving coverage in dynamic applications further slows progress.

According to Gartner Research, by 2026, 30% of enterprises are expected to automate more than half of their network activities, highlighting a broader push toward deeper automation across the industry.

Shift-Left Testing Impact

Shift-left testing simply means testing earlier instead of waiting until the last stage. You catch issues when they're small, not when they've spread into the full system.

It saves time because the team doesn't have to unwind large chunks of work later.

Teams using shift-left report clear gains. Middleware research shows they see up to 40% fewer post-release bugs, and fixing problems early avoids the 15–30× higher cost of fixing them in production.
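To make the cost argument concrete, the sketch below applies the 40% reduction and the 15–30× multiplier from the text to hypothetical inputs. The defect count and the per-defect cost are invented for illustration; only the percentages come from the cited research.

```python
def fix_cost(defects: int, unit_cost: float, multiplier: float = 1.0) -> float:
    """Total cost of fixing `defects` issues at `unit_cost` each, scaled by `multiplier`."""
    return defects * unit_cost * multiplier


# Hypothetical inputs for illustration only.
escaped_before = 50      # post-release bugs per quarter without shift-left
reduction = 0.40         # "up to 40% fewer post-release bugs" from the text
early_unit_cost = 100.0  # cost units to fix one defect during development

caught_early = round(escaped_before * reduction)  # defects now fixed before release

for multiplier in (15, 30):
    in_production = fix_cost(caught_early, early_unit_cost, multiplier)
    in_development = fix_cost(caught_early, early_unit_cost)
    print(f"{multiplier}x: avoided cost = {in_production - in_development:,.0f} units")
```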

Shift-left also speeds delivery. When devs and QA collaborate earlier, merges go smoother, feedback loops shrink, and releases move faster.

DevOps and CI/CD Integration

Key integration points include:

- Pre-commit testing catching issues before merge

- Pull request validation ensuring quality standards

- Staging verification before production

- Production monitoring with synthetic testing

Advanced organizations increasingly rely on real-time analytics to enhance the efficiency and reliability of their testing efforts.

These capabilities support test suite performance trending, help identify flaky tests, reveal coverage gaps, and enable optimization of overall execution time.

By using data-driven insights, teams can continuously refine their testing strategy and improve quality outcomes.
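Flaky-test identification is one of the simpler analytics to approximate from run history. The sketch below uses a common heuristic: a test is treated as flaky if it both passed and failed on the same commit. The `runs` data and the `find_flaky` helper are hypothetical, and production tools typically use richer signals.

```python
from collections import defaultdict

# (test name, commit SHA, passed?) tuples from a hypothetical run history.
runs = [
    ("test_login", "a1b2c3", True),
    ("test_login", "a1b2c3", True),
    ("test_search", "a1b2c3", True),
    ("test_search", "a1b2c3", False),
    ("test_search", "a1b2c3", True),
    ("test_export", "d4e5f6", False),
    ("test_export", "d4e5f6", False),
]


def find_flaky(history: list[tuple[str, str, bool]]) -> list[str]:
    """Return tests that both passed and failed on the same commit."""
    outcomes: dict[tuple[str, str], set[bool]] = defaultdict(set)
    for name, commit, passed in history:
        outcomes[(name, commit)].add(passed)
    return sorted({name for (name, _), seen in outcomes.items() if seen == {True, False}})


print(find_flaky(runs))
```

Note that `test_export` fails consistently on its commit, so it is reported as a genuine failure rather than as flaky.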

6. Security and Cloud Testing

Security testing automation has become a major priority as release cadences accelerate and cloud becomes central.

The global security testing market is projected to grow from USD 14.5 billion in 2024 to USD 43.9 billion by 2029 (CAGR ~24.7%).

Similarly, the broader application security market, covering SAST, DAST, SCA, and cloud-security tools, reached USD 13.64 billion in 2025, with a forecast of USD 30.41 billion by 2030.

Security Testing Automation

Organizations increasingly automate security testing to keep pace with rapid release cadences:

- SAST - Analyzing source code for vulnerabilities

- DAST - Testing running applications

- SCA - Identifying vulnerable dependencies

- IaC scanning - Validating cloud configurations

Security Testing Methods

| Method | Automation Level | Primary Use | Frequency |
|---|---|---|---|
| SAST | High | Code vulnerability detection | Every commit |
| DAST | Medium | Runtime security testing | Daily/weekly |
| SCA | High | Dependency scanning | Every build |
| Penetration Testing | Low | Security audit | Quarterly |

Cloud Testing Infrastructure

Cloud testing continues to grow rapidly, driven by speed and scalability.

The global cloud-testing market is projected to reach USD 13.7 billion by 2032, up from USD 8.5 billion in 2024, at a CAGR of around 6%.

This growth reflects the clear benefits seen in practice.

Cloud-based testing offers compelling advantages:

- Elastic scalability matching test execution to demand (widely cited as cutting infrastructure wait time by 60–80%)

- Geographic distribution for latency testing

- Cost optimization through usage-based pricing (average infrastructure savings of 30–40% compared to static hardware)

- Rapid provisioning in minutes rather than days

Modern test infrastructure builds on this through infrastructure as code (IaC).

Industry surveys show over 80% of cloud-native teams now use IaC tools like Terraform, ensuring consistent setups across regions and environments.

Versioned environment definitions provide reproducibility, automated provisioning reduces idle resource costs, and CI/CD-driven environment creation supports on-demand spin-up and teardown.

Together, these practices make cloud testing both scalable and operationally efficient, while improving reliability and reducing manual infrastructure effort.
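On-demand spin-up and teardown maps naturally onto a context manager, which guarantees cleanup even when a test run fails. The sketch below is an in-memory stand-in under stated assumptions: `ephemeral_environment`, the `active_environments` registry, and the version and region values are hypothetical, and a real implementation would invoke an IaC tool such as Terraform where the comments indicate.

```python
import uuid
from contextlib import contextmanager
from typing import Iterator

# In-memory stand-in for a cloud account; a real setup would call an IaC tool.
active_environments: dict[str, dict[str, str]] = {}


@contextmanager
def ephemeral_environment(definition_version: str, region: str) -> Iterator[str]:
    """Provision a test environment, yield its id, and always tear it down."""
    env_id = f"test-env-{uuid.uuid4().hex[:8]}"
    # Provisioning step (placeholder for applying a versioned definition).
    active_environments[env_id] = {"version": definition_version, "region": region}
    try:
        yield env_id
    finally:
        # Teardown runs even if the tests raise, so nothing is left idle.
        del active_environments[env_id]


with ephemeral_environment("v1.4.0", "eu-west-1") as env_id:
    print("running tests against", env_id)

print("environments still running:", len(active_environments))
```

Pinning `definition_version` is what gives reproducibility: the same version string should always produce the same environment, regardless of region.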

7. Investment and Budget Trends

QA budgets reflect how seriously organizations take quality and automation.

The global QA/testing market is already over USD 41.5 billion (2024) and is projected to hit USD 60.2 billion by 2029.

Companies typically allocate around 15–25% of their development spend to QA, covering automation platform licenses, cloud infrastructure, training, and consulting services. That level of spending shows that quality initiatives are a strategic priority.

In firms moving to cloud testing infrastructure, many report 30–40% savings on test environment costs compared with traditional on-prem setups.

This frees the budget for training and tool investments.

As organizations shift to modern automation, training budgets in QA teams have risen by roughly 20% year-over-year, a clear signal that workforce readiness is now as important as tooling in quality strategy.

ROI Factors

Organizations evaluate investments based on:

- Reduced manual testing effort and labor costs (automation reduces manual effort by ~30–50% on average)

- Faster release cycles enabling earlier revenue (teams report 10–25% faster delivery with reliable automation)

- Improved quality reducing production defects (post-release bug fixes can cost 15–30× more than early fixes)

- Better coverage across platforms (cloud and cross-browser setups expand reach without extra staffing)

However, achieving positive ROI requires realistic expectations. Most organizations see ROI within 12–18 months.
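A break-even estimate is a quick sanity check on that timeline. The sketch below is a deliberately simple model, assuming a one-time upfront cost, a flat monthly running cost, and flat monthly savings; `months_to_break_even` and all of the figures are hypothetical.

```python
import math
from typing import Optional


def months_to_break_even(
    upfront_cost: float, monthly_running_cost: float, monthly_savings: float
) -> Optional[int]:
    """Whole months until cumulative net savings cover the upfront cost.

    Returns None if savings never exceed running costs.
    """
    net_monthly = monthly_savings - monthly_running_cost
    if net_monthly <= 0:
        return None
    return math.ceil(upfront_cost / net_monthly)


# Hypothetical figures: tooling and setup, maintenance, and saved manual effort.
months = months_to_break_even(
    upfront_cost=120_000, monthly_running_cost=5_000, monthly_savings=14_000
)
print("break-even after", months, "months")
```

If maintenance costs rise to match the savings, the function returns `None`, which mirrors the failure mode behind automation projects that never deliver ROI.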

Venture Investment

Venture investment in QA is increasingly concentrated in areas set for rapid growth.

Recent funding data shows that AI-driven testing and automation tooling has attracted significant capital, with multiple rounds in the USD 40–100 million range over the last 24 months across platforms focused on observability, analytics, and intelligent test generation.

Key focus areas include AI-driven testing platforms, codeless automation solutions, testing observability and analytics, and specialized testing models for cloud-native and distributed architectures.

Investment tracking reports show that AI-based QA and test analytics have seen YoY funding increase above 30%, reflecting strong market confidence and expectations of long-term efficiency gains.

These investments reflect market belief that emerging platforms will reduce manual effort, improve quality metrics, and address the challenges of complex, highly dynamic modern software ecosystems.

8. Future Predictions

Near-Term (2026-2027)

AI-driven testing will expand steadily. Based on current adoption trajectory, platforms using AI for defect detection and test analysis are expected to grow at around 20–25% annually, pushing more teams toward self-healing automation to cut maintenance cycles.

Using container-based environments in testing will continue rising because development has already shifted this way.

Today, over 70% of cloud-native teams use Docker-based workflows, and this trend is expected to flow further into QA environments for consistency.

Observability will tighten the link between testing and production. As observability tooling grows at 11–13% CAGR, teams will increasingly surface production metrics in QA dashboards to validate real-world performance early.

Long-Term Trajectory

Test automation trends suggest a gradual shift toward:

- Autonomous testing systems with minimal intervention

- Predictive quality models identifying risks early

- Natural language interfaces for non-technical users

- Integrated quality platforms unifying tools

Automation Maturity Timeline

| Timeframe | Capability Level | Key Characteristics |
|---|---|---|
| Current | Structured automation | Framework-based scripted tests |
| 2026-2027 | Intelligent automation | AI-assisted test generation |
| 2028+ | Autonomous testing | Self-managing systems |

Strategic Recommendations

Organizations should:

- Set clear objectives before buying tools, since surveys show 73% of automation efforts miss ROI when goals are vague.

- Invest in team skills alongside tooling, with QA training budgets growing about 20 percent year over year.

- Roll out automation gradually, because most teams realize ROI in 12 to 18 months, not instantly.

- Keep AI expectations realistic, as AI testing still needs human oversight.

- Build flexible architectures, since the automation testing market is growing at roughly 14.5 percent CAGR and tools will keep evolving.

The future of test automation lies in augmenting human expertise through intelligent tools, not replacing it.

Conclusion

The state of test automation in 2026 is shaped by strong market growth and measurable value, not just hype.

Global spending already exceeds USD 41.5 billion and is projected to reach USD 60.2 billion by 2029, while specific segments like AI-driven tooling are growing 20 to 25 percent annually.

Adoption is wide, but the biggest gains come when teams combine tools with strategy.

The data across frameworks, AI, cloud testing, security automation, and DevOps integration shows the same pattern.

Organizations that plan realistically, improve team capability, and invest in reporting and analytics reduce manual work by 30 to 50 percent, accelerate release cycles by 10 to 25 percent, and avoid the 15 to 30 times higher cost of fixing issues in production.

The outlook toward 2027 and beyond is promising. Intelligent automation, predictive prioritization, and unified quality platforms will expand, but they will augment human expertise rather than replace it.

The teams that treat automation as continuous evolution, guided by data rather than assumptions, will lead in quality, delivery speed, and engineering efficiency.
