Performance Benchmarks of Playwright, Cypress, and Selenium in 2026

Playwright is the fastest and most resource-efficient E2E framework in 2026, outperforming Cypress and Selenium in speed, parallelism, and CI cost. Here’s how they compare.

Every testing framework claims to be fast.

But when your CI pipeline takes 90 minutes, and half the failures are flaky, claims don't matter. Numbers do.

Playwright, Cypress, and Selenium are the three frameworks that dominate E2E testing in 2026.

The performance gap between these frameworks is real and measurable. It shows up in CI build times, cloud infrastructure costs, developer wait times, and how often your team reruns a "failed" test that was never actually broken.

But raw speed is only part of the picture. Execution consistency, flakiness rates, memory consumption, parallel execution throughput, and CI cost all matter when choosing between these three frameworks. A framework that's 20% faster but twice as flaky doesn't save you anything.

This article collects every credible, reproducible benchmark we could find and lets the numbers speak. For a broader look at framework adoption trends beyond performance, see the linked guide.

Benchmark 1: Raw Execution Speed

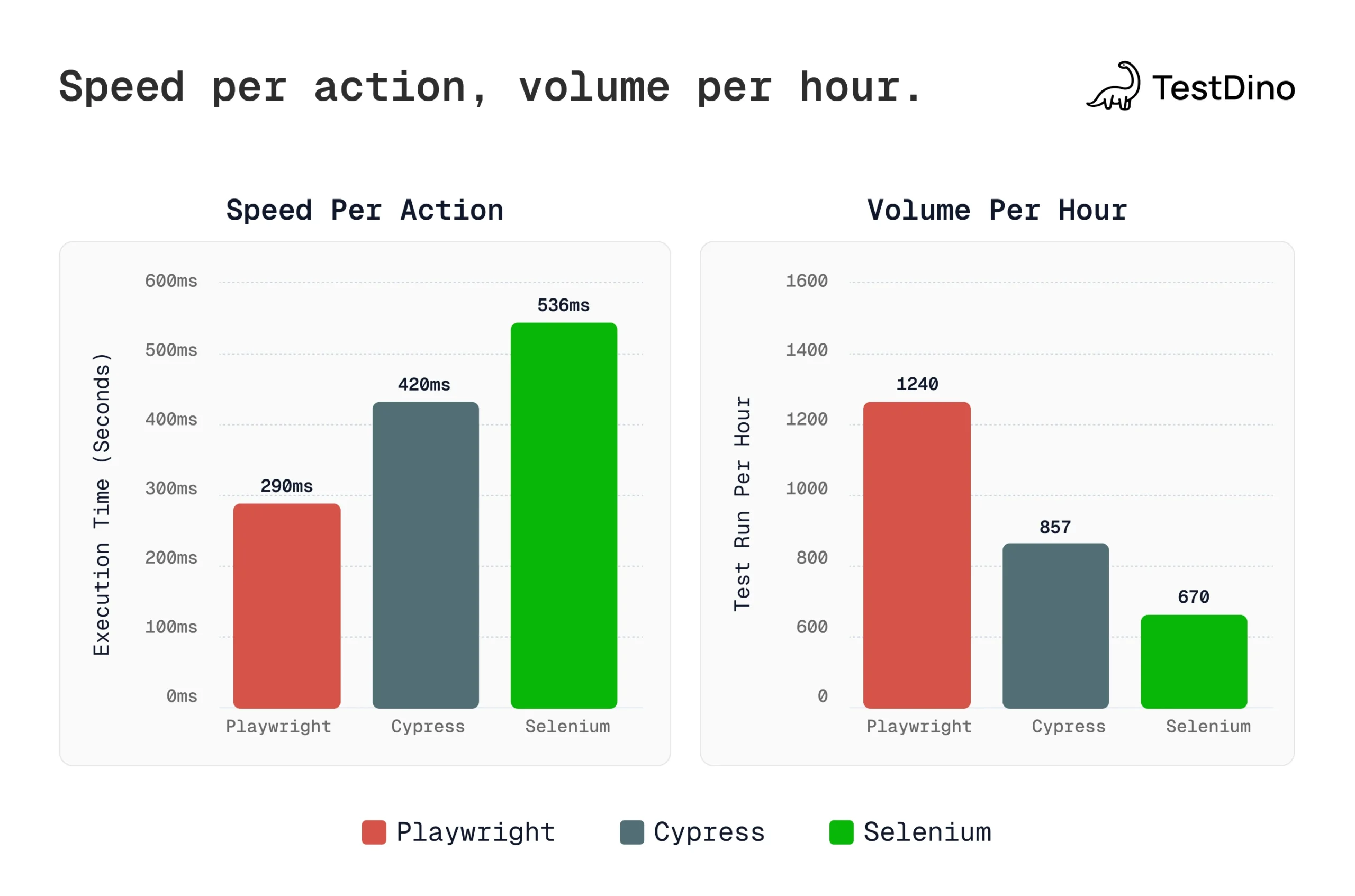

The most cited data comes from the Axelerant study, which ran identical test scenarios across all three frameworks:

| Framework | Execution Time (single test) | Per-Action Average | Relative Speed |
|---|---|---|---|
| Playwright | 4.657s | 290ms | Fastest (baseline) |
| Cypress | 5.000s | 420ms | ~1.45x slower per action |
| Selenium | 9.547s | 536ms | ~1.85x slower per action |

In throughput terms, that translates to roughly:

- Playwright: ~1,240 tests/hour
- Cypress: ~857 tests/hour
- Selenium: ~670 tests/hour
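The relative-speed and throughput figures follow from simple ratios of the per-action averages. Here is a minimal sketch of that arithmetic. It assumes the throughput numbers were scaled from Playwright's ~1,240 tests/hour, which is an inference from the figures rather than something the study states.

```python
# Per-action averages (ms) from the table above.
PER_ACTION_MS = {"Playwright": 290, "Cypress": 420, "Selenium": 536}


def slowdown_vs(baseline: str, timings: dict[str, float]) -> dict[str, float]:
    """Return each framework's per-action time as a multiple of the baseline."""
    base = timings[baseline]
    return {name: ms / base for name, ms in timings.items()}


def scaled_throughput(baseline_tests_per_hour: float,
                      slowdown: dict[str, float]) -> dict[str, float]:
    """Scale a baseline throughput down by each framework's slowdown factor."""
    return {name: baseline_tests_per_hour / factor
            for name, factor in slowdown.items()}


slowdown = slowdown_vs("Playwright", PER_ACTION_MS)
# Assumption: 1,240 tests/hour is the reference point for the other two figures.
throughput = scaled_throughput(1240, slowdown)

for name in PER_ACTION_MS:
    print(f"{name}: {slowdown[name]:.2f}x, ~{throughput[name]:.0f} tests/hour")
```

The computed throughputs land within a test or two of the ~857 and ~670 quoted above, so the two sets of numbers are consistent with each other.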

The Checkly benchmark (1,000 runs against a production web app) confirmed the pattern:

- Playwright: Fastest in real-world E2E scenarios, with the lowest execution time variability
- Cypress: ~23% slower than Playwright in production tests, but the gap narrows for suites vs single tests
- Selenium (via WebDriverIO): Slowest, with the highest execution time variability

Tip: The gap between Cypress and Playwright narrows for suite execution vs single tests. In Checkly's three-test suite scenario, Cypress was only ~3% slower than Selenium but still 23% behind Playwright. This is because Cypress adds ~5-7 seconds of startup latency before any test logic begins, which amortizes across longer suites.
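The amortization effect is easy to see with a toy model in which total suite time is a fixed startup cost plus per-test time. The sketch below assumes a 6-second startup (the midpoint of the ~5-7 seconds quoted above) and a hypothetical 5 seconds per test. Neither value comes from Checkly's data.

```python
def startup_share(startup_s: float, per_test_s: float, n_tests: int) -> float:
    """Fraction of total suite time spent on the one-time startup cost."""
    total = startup_s + per_test_s * n_tests
    return startup_s / total


# Assumed values: 6 s startup (midpoint of ~5-7 s), 5 s per test (hypothetical).
for n in (1, 3, 10, 100):
    share = startup_share(6.0, 5.0, n)
    print(f"{n:>3} tests: startup is {share:.1%} of the run")
```

With these assumptions, startup is more than half of a single-test run but only about 1% of a 100-test run.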

Benchmark 2: Scaling to 100 Tests

Single-test benchmarks can be misleading. What matters in CI is how frameworks perform at scale. The ray.run benchmark tested this by running the same test 1x, 10x, and 100x:

Test 1 (single run), relative to fastest:

| Tool | Relative Time |
|---|---|
| @playwright/test | 100% (baseline) |
| Playwright (library) | 144% |
| Puppeteer | 164% |
| Selenium | 166% |
| Cypress | 350% |

Test 2 (10 runs), relative to fastest:

| Tool | Relative Time |
|---|---|
| Playwright | 100% (baseline) |
| @playwright/test | 106% |
| Puppeteer | 114% |
| Selenium | 134% |
| Cypress | 142% |

Test 3 (100 runs), relative to fastest:

| Tool | Relative Time |
|---|---|
| Playwright | 100% (baseline) |
| @playwright/test | 108% |
| Puppeteer | 115% |
| Cypress | 126% |
| Selenium | 149% |

Three patterns stand out:

- Playwright leads at every scale (one of its two variants is the baseline in all three runs), and its lead over Selenium grows from 10 to 100 runs
- Cypress improves dramatically, from 350% to 126%, as its startup cost amortizes
- Selenium improves from 166% to 134% at 10 runs, then slips back to 149% at 100 runs, where it is the slowest tool
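Each table above reports time relative to the fastest tool in that run, which is why the baseline row can change between tables. Here is a minimal sketch of that normalization. The tool names and raw durations are hypothetical placeholders, not ray.run's measurements.

```python
def relative_to_fastest(durations_s: dict[str, float]) -> dict[str, int]:
    """Express each duration as a percentage of the fastest one (fastest = 100)."""
    fastest = min(durations_s.values())
    return {tool: round(100 * t / fastest) for tool, t in durations_s.items()}


# Hypothetical raw timings in seconds, for illustration only.
raw = {"tool_a": 2.0, "tool_b": 2.5, "tool_c": 7.0}
print(relative_to_fastest(raw))  # {'tool_a': 100, 'tool_b': 125, 'tool_c': 350}
```

Because the percentages are relative within each run, a drop from 350% to 126% says how a tool compares with that run's winner. It does not mean the tool's absolute time fell by that ratio.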

Tip: If you're evaluating frameworks with a 5-test proof-of-concept, Cypress's startup penalty will make it look disproportionately slow. Run at least 50 tests in your evaluation to get realistic numbers. For Playwright sharding strategies that maximize parallelism, see the linked guide.

Benchmark 3: Resource Usage (CPU and Memory)

This is where the differences get expensive. Framework architecture directly determines how much hardware you need.

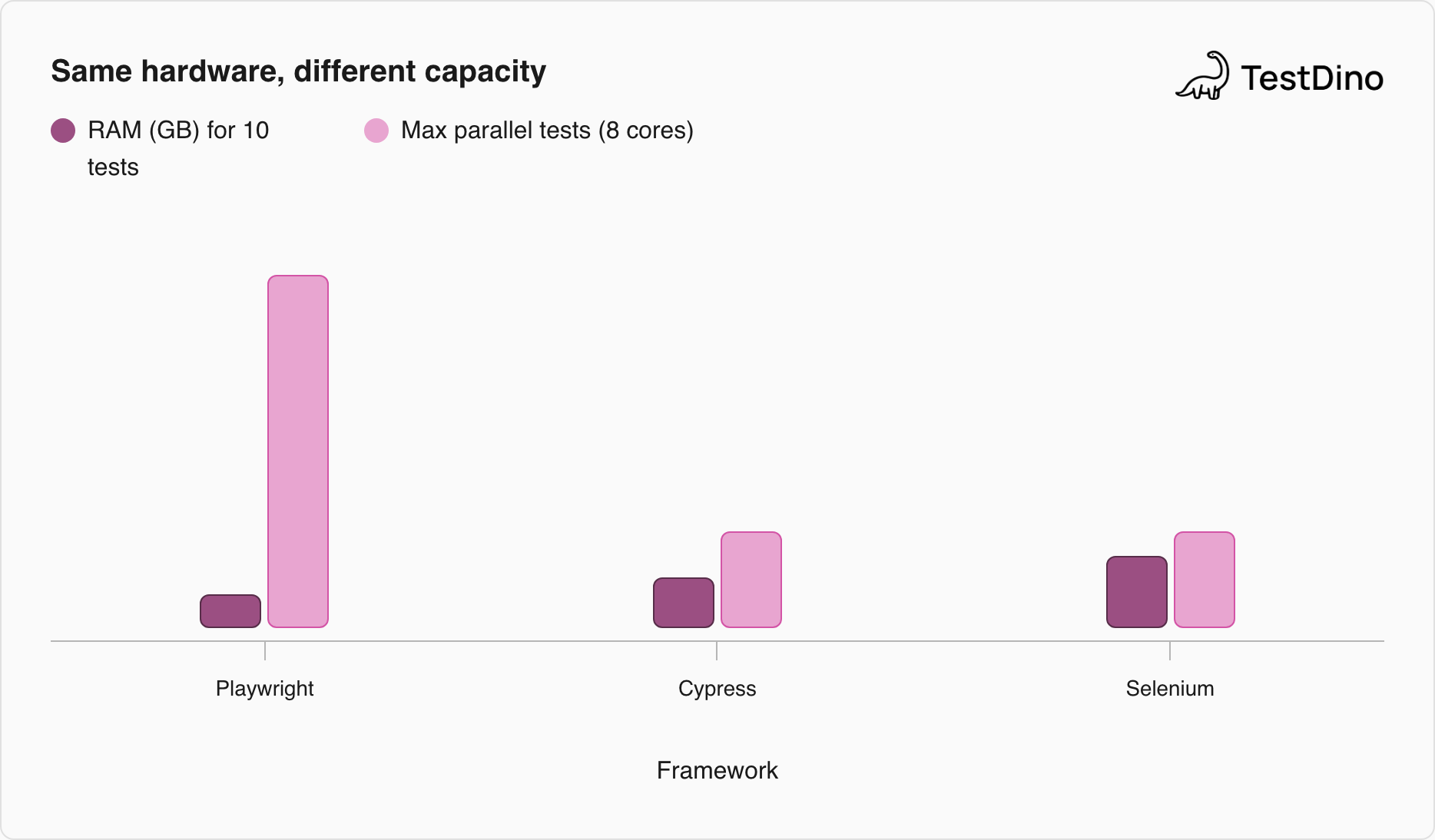

| Metric | Playwright | Cypress | Selenium |
|---|---|---|---|
| RAM for 10 parallel tests | ~2.1 GB | ~3.2 GB | ~4.5 GB |
| Parallel capacity per machine (8 cores) | 15–30 tests | 4–8 tests | 4–8 tests |
| Browser processes | 1 shared (multiple contexts) | 1 per suite | 1 per test |
| CPU profile | Moderate-low | Moderate | High (multiple processes) |

The memory gap comes from how each framework handles browser instances. Selenium spawns one full browser per test session. Cypress uses one browser per suite, but adds its own proxy and bundling overhead. Playwright shares a single browser process across multiple lightweight contexts.

A browser context in Playwright is an isolated session (like an incognito window) that shares the underlying browser process. It has its own cookies, storage, and permissions, but doesn't require launching a new browser. This is why Playwright runs 2-3x more parallel tests on the same hardware.
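To put the memory figures in per-test terms, the arithmetic below divides the 10-test RAM numbers from the table and compares Playwright's footprint with the other two. It assumes memory scales roughly linearly with the number of parallel tests, which the source does not claim.

```python
# RAM for 10 parallel tests (GB), from the table above.
RAM_10_TESTS_GB = {"Playwright": 2.1, "Cypress": 3.2, "Selenium": 4.5}


def per_test_gb(ram_for_10: float) -> float:
    """Average memory per parallel test, assuming linear scaling."""
    return ram_for_10 / 10


def saving_vs(other: str, ram: dict[str, float], ours: str = "Playwright") -> float:
    """Fraction of memory saved by `ours` relative to `other`."""
    return 1 - ram[ours] / ram[other]


for name, ram in RAM_10_TESTS_GB.items():
    print(f"{name}: ~{per_test_gb(ram):.2f} GB per test")

for other in ("Cypress", "Selenium"):
    print(f"Playwright uses {saving_vs(other, RAM_10_TESTS_GB):.0%} less RAM than {other}")
```

On these numbers Playwright needs about a third less memory than Cypress and a little over half as much as Selenium.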

At scale, these differences compound. One study found that running 5,000 tests/day required:

- Selenium: 32 runner-hours/day
- Playwright: 16 runner-hours/day (50% fewer CI minutes)

In cloud CI pricing, that 50% reduction can translate into thousands of dollars in annual savings.
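As a rough illustration of that saving, the sketch below converts the runner-hour difference into an annual dollar figure. The per-minute rate is an assumed placeholder, since hosted CI pricing varies by provider and machine size. Running the suite 365 days a year is also an assumption.

```python
def annual_ci_cost(runner_hours_per_day: float,
                   usd_per_minute: float,
                   days: int = 365) -> float:
    """Yearly spend for a given daily runner-hour load."""
    return runner_hours_per_day * 60 * usd_per_minute * days


RATE_USD_PER_MIN = 0.008  # assumed rate; substitute your provider's pricing

selenium_cost = annual_ci_cost(32, RATE_USD_PER_MIN)
playwright_cost = annual_ci_cost(16, RATE_USD_PER_MIN)

print(f"Selenium:   ${selenium_cost:,.0f}/year")
print(f"Playwright: ${playwright_cost:,.0f}/year")
print(f"Savings:    ${selenium_cost - playwright_cost:,.0f}/year")
```

At the assumed rate the difference is a little under $3,000 a year. Larger runners or higher per-minute pricing scale that figure proportionally.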

Benchmark 4: Cross-Browser Performance

Most benchmarks only test Chromium. But real-world suites run across browsers. The ray.run cross-browser analysis measured Playwright's performance across all supported engines:

| Browser | Headless (vs Chromium) | Headed (vs Chromium) |
|---|---|---|
| Chromium | Baseline (fastest) | Baseline |
| Microsoft Edge | +3.9% | +1.1% |
| WebKit | +13.2% | +59.6% |
| Google Chrome | +19.7% | +3.4% |
| Firefox | +34.2% | +70.8% |

- Chromium is consistently fastest for Playwright tests
- Firefox is the slowest, especially in headed mode on VMs (+70.8%)
- Headed vs headless gap: ~15% locally, but nearly zero on VMs
- WebKit performance varies wildly between local and VM execution

This matters for teams running cross-browser matrices. If your CI pipeline runs all three browsers, Firefox will be your bottleneck. Plan shard allocation accordingly.
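One way to plan that allocation is to split a fixed shard budget in proportion to each browser's relative run time. The sketch below uses the headless figures from the table as weights and a largest-remainder rounding step. The 12-shard budget and the `allocate_shards` helper are hypothetical, and the sketch assumes every browser runs the same set of tests.

```python
import math


def allocate_shards(weights: dict[str, float], total_shards: int) -> dict[str, int]:
    """Split total_shards across browsers in proportion to their weights.

    Uses largest-remainder rounding so the counts sum to total_shards.
    """
    weight_sum = sum(weights.values())
    exact = {name: total_shards * w / weight_sum for name, w in weights.items()}
    shards = {name: math.floor(share) for name, share in exact.items()}
    leftover = total_shards - sum(shards.values())
    # Hand the remaining shards to the largest fractional remainders first.
    by_remainder = sorted(exact, key=lambda n: exact[n] - shards[n], reverse=True)
    for name in by_remainder[:leftover]:
        shards[name] += 1
    return shards


# Relative headless run time vs Chromium, from the table above.
HEADLESS_WEIGHTS = {"chromium": 1.000, "webkit": 1.132, "firefox": 1.342}

plan = allocate_shards(HEADLESS_WEIGHTS, 12)  # 12 shards is an assumed budget
print(plan)  # {'chromium': 3, 'webkit': 4, 'firefox': 5}
```

Each browser's share can then be passed to Playwright's `--shard=X/Y` option per project, so the slowest engine gets the most shards and the three jobs finish at roughly the same time.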

Benchmark 5: Architecture and Why Speed Differs

The performance gaps aren't random. They're built into how each framework communicates with browsers.

| Metric | Playwright | Cypress | Selenium |
|---|---|---|---|
| Flakiness reduction vs baseline | 80–90% | 60–70% | Baseline (highest flakiness) |
| Auto-waiting built in | Yes (all actions) | Yes (commands) | No (requires explicit waits) |
| Execution time variability | Lowest | Low | Highest |
| CI retry frequency reduction | 35% fewer retries | Moderate | Baseline |

Playwright uses WebSocket connections directly to browser debugging protocols. Every action is a single message over a persistent connection. No HTTP overhead, no driver process in the middle.

Cypress runs tests inside the browser itself. Direct DOM access makes debugging excellent, but the trade-off is:

- Startup overhead (~5-7 seconds to bundle, inject, and proxy)
- Single-tab, single-origin execution limits
- JavaScript/TypeScript only

Selenium communicates over HTTP to a separate WebDriver process. Each step involves a four-hop round-trip:

- Test code sends HTTP request to WebDriver
- WebDriver translates to browser command
- Browser executes and returns response
- WebDriver sends HTTP response back

Tip: Selenium 4.x introduced BiDi (bidirectional) protocol support to reduce this overhead. If you're benchmarking Selenium, make sure you're using Selenium 4+ with BiDi enabled. One independent analysis described Selenium as "the historical source of flakiness" due to its protocol overhead, while noting Playwright "combines the best of both" Cypress speed and Selenium flexibility.

Benchmark 6: Flakiness and Consistency

Speed means nothing if results aren't consistent. Teams spend 40-50% of E2E testing effort just on maintenance (fixing broken tests), regardless of framework.

Playwright tests tend to be the most stable: its persistent protocol connection, auto-waiting on every action, and built-in locator strategies produce the most consistent run times. Cypress improved reliability with built-in retries, but complex asynchronous apps can still cause occasional random failures. Selenium is historically the most brittle: user surveys consistently cite timing and asynchronous handling as top pain points.

This is where flaky test detection becomes a performance concern. A framework that runs 20% faster but generates 3x more retries can end up slower in total pipeline time once rerun overhead is high enough.
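A simple model makes the trade-off concrete. It assumes total pipeline time is the clean run time plus a rerun overhead expressed as a fraction of that run. The run times and overhead values below are hypothetical. Under this model, a framework that is 20% faster but carries 3x the rerun overhead only loses once the slower framework's overhead exceeds roughly 14%.

```python
def pipeline_minutes(clean_run_min: float, rerun_overhead: float) -> float:
    """Total time when reruns add `rerun_overhead` (a fraction) to a clean run."""
    return clean_run_min * (1 + rerun_overhead)


def break_even_overhead(speedup: float, retry_multiplier: float) -> float:
    """Baseline rerun overhead above which the faster-but-flakier option is slower.

    speedup: fraction of clean run time saved (0.20 means 20% faster).
    retry_multiplier: how many times more rerun overhead the faster option has.
    Assumes (1 - speedup) * retry_multiplier > 1, otherwise there is no break-even.
    """
    faster = 1 - speedup
    # Solve faster * (1 + retry_multiplier * r) = 1 + r for r.
    return (1 - faster) / (faster * retry_multiplier - 1)


for overhead in (0.05, 0.10, 0.20, 0.30):  # hypothetical baseline overheads
    stable = pipeline_minutes(100, overhead)
    flaky = pipeline_minutes(80, 3 * overhead)
    print(f"overhead {overhead:.0%}: stable {stable:.0f} min, "
          f"faster-but-flaky {flaky:.0f} min")

print(f"break-even baseline overhead: {break_even_overhead(0.20, 3):.1%}")
```

Below the break-even point the faster framework still wins despite its retries. Above it, the extra reruns cancel out the speed advantage.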

Benchmark 7: Parallel Execution Throughput

Parallelization is the single biggest performance lever in CI. Here's how the three frameworks handle it:

| Capability | Playwright | Cypress | Selenium |
|---|---|---|---|
| Built-in parallelism | Yes, via workers + sharding | Via Cypress Cloud (paid) | Via Selenium Grid (self-hosted) |
| Configuration | `--workers=N --shard=X/Y` | Requires Cloud subscription | Requires Grid infrastructure |
| Concurrent tests per machine | 15–30 (browser contexts) | 4–8 | 4–8 (full browser per test) |
| Resource model | Multiple contexts, one browser | One browser per suite | One browser per test |
| Cost | Free | Paid for parallelism | Free (infra cost) |

Playwright's browser context isolation is the key differentiator. Multiple contexts share a single browser process, meaning you can run 2-3x more tests simultaneously on the same hardware than with Selenium Grid.

One case study showed 60% faster CI runs on 8 machines after migrating from Selenium to Playwright, driven primarily by the advantage of parallelism.
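The effect of per-machine concurrency on wall-clock time can be approximated with a wave model, where tests run in batches sized by the number of available slots. The sketch below uses the low end of each concurrency range from the table. The 1,000-test suite, 8 machines, and a uniform 5 seconds per test are assumptions, and the model ignores scheduling overhead and per-test speed differences.

```python
import math


def wall_clock_seconds(n_tests: int, machines: int,
                       tests_per_machine: int, per_test_s: float) -> float:
    """Estimate wall-clock time when tests run in full-width waves."""
    slots = machines * tests_per_machine
    waves = math.ceil(n_tests / slots)
    return waves * per_test_s


# Assumed workload: 1,000 tests, 8 machines, 5 s per test.
CONCURRENCY = {"Selenium Grid": 4, "Playwright": 15}  # low end of each range

for name, per_machine in CONCURRENCY.items():
    t = wall_clock_seconds(1000, 8, per_machine, 5.0)
    print(f"{name}: {per_machine} tests/machine -> ~{t:.0f} s")
```

With these inputs the estimate is about 160 seconds for Selenium Grid and about 45 seconds for Playwright. Real pipelines will differ, but the slot count is the dominant term in the estimate.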

Note: Cypress added limited free parallelism in recent versions, but production-scale parallel execution still requires Cypress Cloud. For teams running 100+ parallel tests, the infrastructure cost model matters as much as raw speed.

The Consolidated View

Here's everything in one table:

| Metric | Playwright | Cypress | Selenium |

|---|---|---|---|

| Per-action speed | 290ms (baseline) | 420ms (~1.45x) | 536ms (~1.85x) |

| Tests/hour throughput | ~1,240 | ~857 | ~670 |

| Startup latency | Minimal | ~5–7s overhead | Minimal |

| RAM (10 parallel tests) | ~2.1 GB | ~3.2 GB | ~4.5 GB |

| Parallel capacity (8 cores) | 15–30 tests | 4–8 tests | 4–8 tests |

| Flakiness reduction | 80–90% | 60–70% | Baseline |

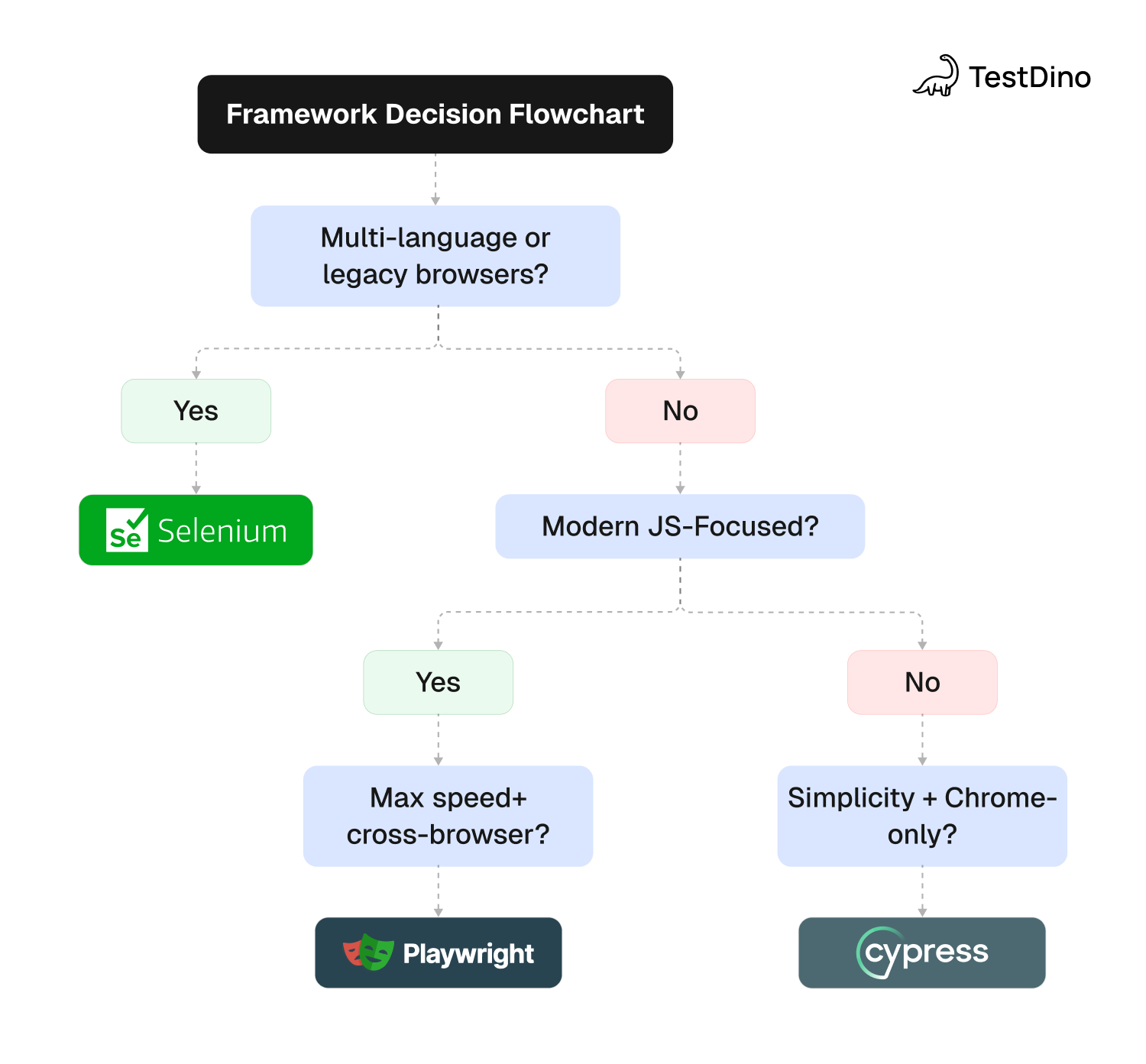

| Cross-browser | Chromium, Firefox, WebKit | Chromium + Firefox | All via WebDriver |

| Language support | JS/TS, Python, .NET, Java | JS/TS only | 7+ languages |

| npm downloads (Feb 2026) | ~26M | ~6.7M | ~2.1M |

| Parallel cost | Free | Paid (Cloud) | Free (Grid setup) |

Tip: Selenium's npm package only captures JavaScript usage. Its Java and Python ecosystems (Maven, PyPI) are massive and not reflected here. For the full adoption picture, see the Playwright market share analysis.

How TestDino Helps After You Choose a Framework

Choosing a framework based on benchmarks is step one.

Keeping your suite fast over months is a harder problem.

Test suites accumulate slow specs, flaky tests, and gradually worsening CI times. TestDino provides the monitoring layer that benchmarks can't:

| What You Need | TestDino Feature | What It Does |
|---|---|---|
| Find slow tests | Analytics | Duration trends per spec across every CI run. Spot tests getting slower before they time out |
| Find flaky tests | Flaky Tests | Flags tests with inconsistent pass/fail patterns. Shows flakiness percentage per test case |
| Understand failures | AI Insights | Classifies every failure as Actual Bug, UI Change, Flaky Test, or Miscellaneous with confidence scores |
| Compare environments | Environment Mapping | Compare pass rates, flaky rates, and duration across CI environments |
| Monitor live runs | Real-Time Streaming | Watch tests execute live with shard-aware progress. Catch failures as they happen |
| Cut CI waste | CI Optimization | Rerun only failed tests via `npx tdpw last-failed`. Skip green tests entirely |
| Track coverage | Code Coverage | Statement, branch, function, and line metrics per run. Sharded coverage merging |
| Browse test history | Test Explorer | Search all test cases with execution history. Sort by duration, status, or tags |

For teams migrating from Selenium or Cypress to Playwright, TestDino's GitHub integration posts AI-generated test summaries directly to PRs and commits. The Trace Viewer integration lets you debug failures with Playwright's built-in trace files without leaving the TestDino dashboard.

What This Means for Your Team

- Playwright is the fastest framework in every benchmark we reviewed. Per action, Cypress takes about 1.45x as long and Selenium about 1.85x as long. At scale Playwright stays ahead, although Cypress narrows the gap as its startup cost amortizes.
- Resource efficiency is Playwright's hidden advantage. Using roughly 34-53% less memory for 10 parallel tests (~2.1 GB versus ~3.2 GB for Cypress and ~4.5 GB for Selenium) and supporting 2-3x more parallel tests on the same hardware means fewer CI runners and lower cloud costs.
- Cypress's startup penalty is real but manageable. For test suites (not single tests), Cypress closes much of the speed gap. If your team values its debugging experience, the trade-off may be acceptable for smaller suites.
- Selenium's speed disadvantage is architectural. The HTTP-based WebDriver protocol adds latency to every action. Selenium 4's BiDi protocol helps, but can't fully close the gap against WebSocket-native frameworks.
- Consistency matters more than peak speed. Playwright has the lowest execution time variability, which means fewer surprise timeouts and retries. This reduces total pipeline time more than raw speed alone.
- Parallel execution is the bigger lever. The difference between 4 workers and 30 workers dwarfs the per-test speed difference between frameworks. Invest in parallelization before obsessing over framework switching.
