Playwright Flaky Tests: How to Detect and Fix Them

A Playwright test that passes locally but fails in CI often signals flakiness or environment sensitivity, and it's a frustrating problem. This guide covers how to identify flaky tests using Playwright's retry mechanism and apply targeted fixes, including auto-wait patterns, stable locators, and network mocking.

Flaky tests are one of the fastest ways to slow down a CI pipeline.

A test fails, you rerun the build, and it passes. Nothing changed. Over time this becomes routine: rerun, merge, move on. But those retries add up, developers lose trust in failures, and real bugs start hiding behind noise.

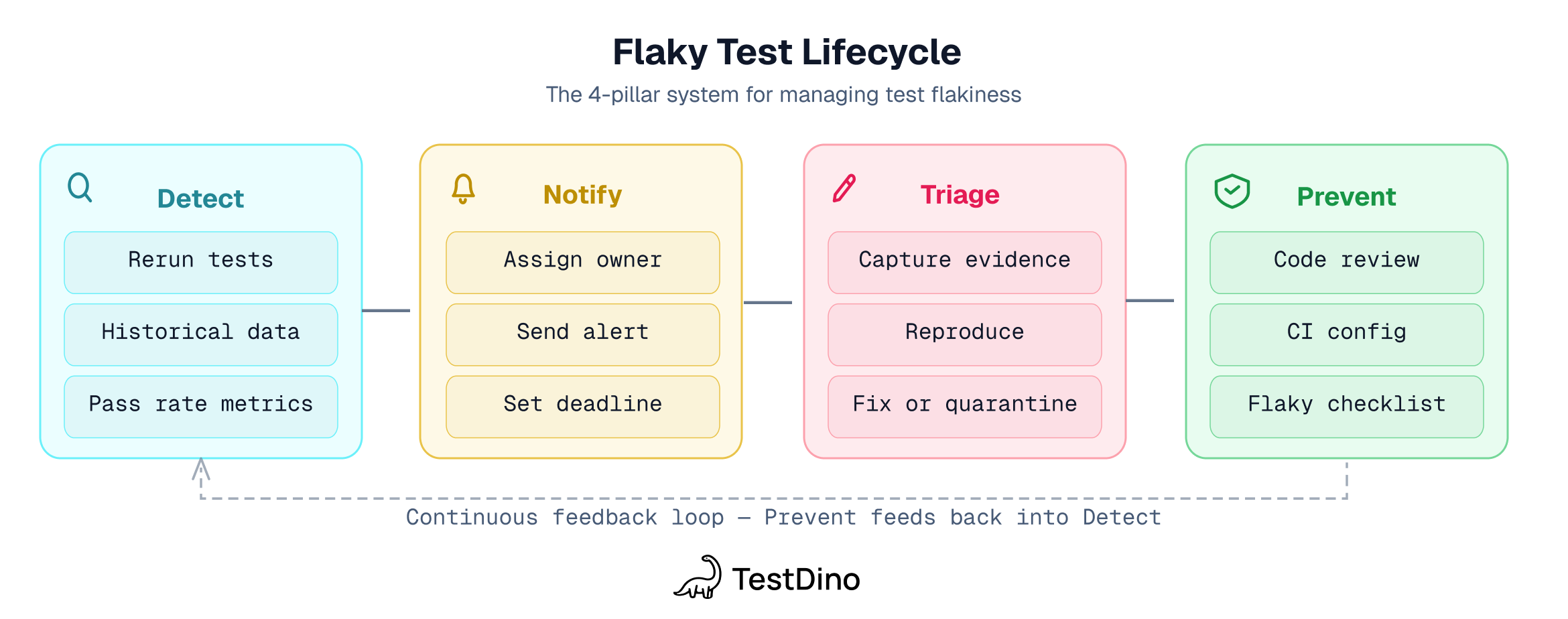

In Playwright, flaky tests usually come from timing issues, unstable selectors, shared state, or nondeterministic UI behavior. Most flakiness follows repeatable patterns you can detect early and fix for good. This guide walks through the Playwright-specific fixes, organized around the 6 root cause categories and the 4-pillar framework (Detect, Notify, Triage, Prevent).

What makes a Playwright test "flaky"?

A Playwright flaky test passes on one run and fails on the next without any code changes. Same test, same code, different result.

Playwright has a specific definition. When you enable retries, a test that fails on the first attempt but passes on retry gets labeled "flaky" in the report. Not "failed," specifically "flaky." That distinction matters:

- A failed test means something is broken

- A flaky test means something is unreliable
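The labeling rule can be written as a small classification over a test's attempts. This is a minimal sketch of the rule as described here, not Playwright's internal code; the `Attempt` and `Outcome` types and the `classifyOutcome` name are made up for illustration.

```typescript
// Hypothetical types for illustration; not part of Playwright's API.
type Attempt = 'passed' | 'failed';
type Outcome = 'passed' | 'flaky' | 'failed';

// attempts[0] is the first run; later entries are retries.
function classifyOutcome(attempts: Attempt[]): Outcome {
  if (attempts.length === 0) throw new Error('no attempts recorded');
  const endedGreen = attempts[attempts.length - 1] === 'passed';
  if (!endedGreen) return 'failed'; // never went green, even after retries
  const everFailed = attempts.some(a => a === 'failed');
  return everFailed ? 'flaky' : 'passed'; // green only after a retry counts as flaky
}
```

With `retries: 2`, a test whose attempts are `['failed', 'passed']` is reported as flaky, while `['failed', 'failed', 'failed']` is a plain failure.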

Google's internal data shows roughly 16% of their tests have some level of flakiness. Once your flaky rate crosses 5%, developers start treating red CI as background noise. That's the real cost. Not the CI minutes. The trust.

The numbers back this up:

- An industrial case study (Leinen et al., ICST 2024) found a team of ~30 developers spent 2.5% of their productive time dealing with flaky tests, including 1.3% on repairs alone.

- Slack's engineering team logged 553 hours in a single quarter triaging test failures.

- Atlassian lost over 150,000 hours of developer time per year to flaky tests.

- Microsoft's research found that ~26% of builds in large-scale CI systems are affected by flaky tests.

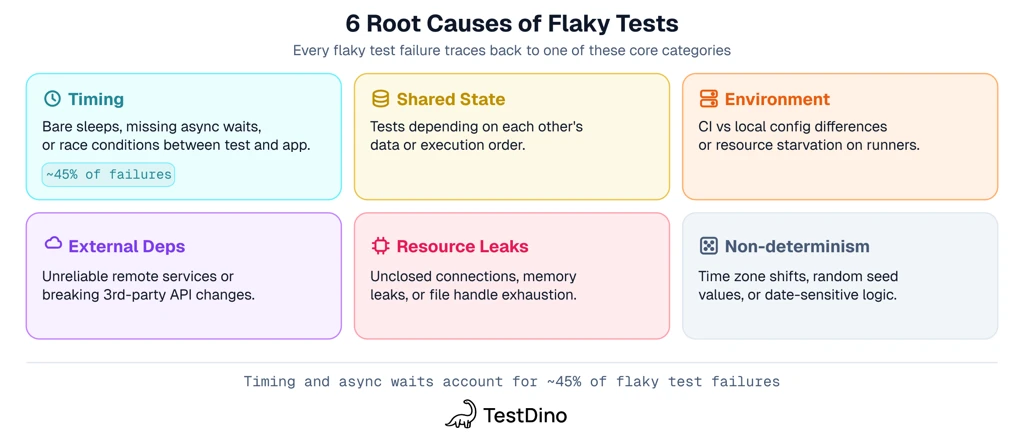

The 6 root causes of flaky Playwright tests

The general flaky tests guide covers 6 root cause categories that apply across all frameworks. Here's how each one shows up in Playwright specifically.

| Root cause | Playwright example |
|---|---|
| Timing (async wait issues) | waitForTimeout() instead of toBeVisible(), clicking before element is interactive, missing await on async calls |
| Shared state (race conditions) | Shared state between parallel workers, browser context conflicts, test order dependency |
| Environment (platform differences) | Passes on macOS, fails on Linux CI runner, Docker /dev/shm exhaustion, browser-specific rendering |
| External dependencies (network) | page.goto() timeout on slow backend, flaky third-party API, waitForResponse race condition |
| Resource leaks | Unclosed browser contexts, connection pools growing across tests, duration drift over the suite |
| Non-determinism (time, randomness) | Timezone-dependent assertions, Math.random() edge cases, date logic that breaks on weekends |

What these look like in your codebase

Timing

This is the big one. Playwright auto-waits on actions like click() and fill(), but it does NOT auto-wait on:

- Custom DOM queries inside page.evaluate()

- Assertions using raw expect() without Playwright matchers

- locator.count() and locator.all(), which return snapshots, not auto-retrying results

- ElementHandle methods (they execute immediately)

That's where most timing flakes hide. The fix is almost always replacing manual waits or snapshot methods with web-first assertions.

A subtler timing bug: missing await on async Playwright calls. When a click() or fill() isn't awaited, it races the rest of the test. This is common enough that Playwright's official best practices recommend enabling the @typescript-eslint/no-floating-promises ESLint rule.

Shared state and race conditions

Two tests create orders in the same database table. One asserts "1 order exists," but the other test's order is already there. Works with --workers=1, breaks in parallel. Playwright creates a fresh BrowserContext per test, which handles cookie and storage isolation automatically. But database records, server-side state, and file system artifacts aren't isolated by Playwright. That's your responsibility.
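One common way to take on that responsibility is to namespace every record a test creates, so parallel workers never assert on each other's rows. The sketch below assumes you pass in the worker index (Playwright exposes it as `testInfo.workerIndex`) and the test title; `uniqueName` is a hypothetical helper, not a Playwright API.

```typescript
let sequence = 0; // per-process counter so repeated calls never collide

// Hypothetical helper: builds a collision-resistant name for test-owned data.
function uniqueName(prefix: string, workerIndex: number, testTitle: string): string {
  // Turn the title into a lowercase slug, e.g. "Creates an order!" -> "creates-an-order"
  const slug = testTitle.toLowerCase().replace(/[^a-z0-9]+/g, '-').replace(/^-|-$/g, '');
  // Timestamp plus counter keeps names distinct across retries and repeated runs
  const nonce = `${Date.now().toString(36)}${(sequence++).toString(36)}`;
  return `${prefix}-w${workerIndex}-${slug}-${nonce}`;
}
```

A test would then create an order under that name and assert on that specific order, instead of asserting that exactly one order exists in the table.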

Animation timing

Playwright's actionability checks do wait for elements to be stable (same bounding box for two consecutive animation frames) and not obscured. But animation flakes still happen when the app isn't semantically ready even after the element becomes actionable, or when overlays appear after the check passes but before the action completes.

Resource-affected flaky tests: when CI infrastructure is the root cause

Here's something that surprised me when I first read the research. A study covering 52 projects found that 46.5% of flaky tests are RAFTs (Resource-Affected Flaky Tests), where the pass/fail outcome changes based on available CPU, memory, or I/O at runtime.

In Playwright, this shows up as tests passing on your M1 Pro but failing on a shared 2-core CI runner, inconsistent results across shards, or tests that only fail when running in parallel.

This cross-project research suggests a significant portion of flaky tests aren't test code problems at all, they're infrastructure problems. If your tests pass locally but fail in CI, check your runner resources before rewriting selectors.

You can reproduce this locally using CDP session throttling:

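test.beforeEach(async ({ page, browserName }) => {
  // CDP sessions are Chromium-only, so skip throttling on other browsers
  if (browserName !== 'chromium') return;
  const cdpSession = await page.context().newCDPSession(page);
  // rate: 4 slows the CPU down by roughly 4x
  await cdpSession.send('Emulation.setCPUThrottlingRate', { rate: 4 });
});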

Start with a rate of 4-6x and adjust based on your CI runner specs. If your tests start failing with throttling applied, you've likely found RAFTs. Nicolas Charpentier wrote about this technique, and I think it should be part of every Playwright team's debugging toolkit.

How to detect Playwright flaky tests

Detection is the first pillar of the 4-pillar framework. You can't fix what you can't see.

Built-in Playwright detection

Playwright gives you a few ways to surface flaky tests natively.

Enable retries in your config:

export default defineConfig({

retries: process.env.CI ? 2 : 0,

});

With retries on, Playwright tags any test that fails then passes on retry as "flaky" in the HTML report. You can filter by this label directly.
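If you'd rather pull the flaky list out programmatically, the JSON reporter output can be walked with a few lines. This is a sketch under assumptions: the structural types below cover only the fields read here and reflect the JSON reporter's shape as I understand it (nested suites, specs, and tests with a `status` field), so check them against the report your Playwright version produces. `collectFlaky` is a hypothetical name.

```typescript
// Minimal structural types covering only the fields read below.
// Assumed from the JSON reporter's output; verify against your version.
interface ReportTest { projectName: string; status: 'expected' | 'unexpected' | 'flaky' | 'skipped'; }
interface ReportSpec { title: string; file: string; tests: ReportTest[]; }
interface ReportSuite { title: string; specs: ReportSpec[]; suites?: ReportSuite[]; }
interface Report { suites: ReportSuite[]; }

// Walks nested suites and returns "file > title [project]" for every flaky test.
function collectFlaky(report: Report): string[] {
  const flaky: string[] = [];
  const visit = (suite: ReportSuite): void => {
    for (const spec of suite.specs) {
      for (const t of spec.tests) {
        if (t.status === 'flaky') flaky.push(`${spec.file} > ${spec.title} [${t.projectName}]`);
      }
    }
    for (const child of suite.suites ?? []) visit(child); // describe blocks nest as child suites
  };
  for (const suite of report.suites) visit(suite);
  return flaky;
}
```

Feed it the parsed output of a run made with the JSON reporter and print the result in CI to get a per-run flaky list without opening the HTML report.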

Stress test with --repeat-each:

# Run each test 10 times to surface instability

npx playwright test --repeat-each 10

# Full stress test: high parallelism + stop on first failure

npx playwright test --repeat-each 100 --workers 10 -x --fail-on-flaky-tests --retries=2

# Combine with workers=1 to rule out parallelism as the cause

npx playwright test --repeat-each 10 --workers 1

The --fail-on-flaky-tests flag is underused. It treats any test that needs a retry to pass as a failure, even if it eventually went green. Run this pre-merge to enforce test stability standards.

Tip: Disable retries when investigating. Set retries: 0 temporarily. Otherwise retries hide the flakiness you're trying to catch.

Tip: Use page.pause() to freeze execution mid-test and inspect the page interactively. Especially useful when a test passes locally but you can't figure out what's different in the failing run.

The gap in single-run detection

Each CI run exists in isolation. You can see what broke today. You can't see whether this test has been flaking for 6 weeks, whether it only fails on Ubuntu runners, or whether it started after a specific commit.

Answering these questions requires historical test data across runs. Single-run reports can't do that.
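To make the idea concrete, here is a minimal sketch of what cross-run tracking computes once you store one record per test per CI run. The `RunRecord` shape and `flakeRateByTest` name are assumptions for illustration; where the records live (a database, CI artifacts, an analytics tool) is up to you.

```typescript
// Hypothetical record shape: one row per test per CI run.
interface RunRecord { testId: string; runId: string; outcome: 'passed' | 'flaky' | 'failed'; }

// Returns, per test, the share of runs in which it needed a retry to pass.
function flakeRateByTest(history: RunRecord[]): Record<string, number> {
  const totals: Record<string, { runs: number; flaky: number }> = {};
  for (const r of history) {
    const t = totals[r.testId] || (totals[r.testId] = { runs: 0, flaky: 0 });
    t.runs += 1;
    if (r.outcome === 'flaky') t.flaky += 1;
  }
  const rates: Record<string, number> = {};
  for (const id of Object.keys(totals)) rates[id] = totals[id].flaky / totals[id].runs;
  return rates;
}
```

Adding fields such as runner OS or commit SHA to each record and grouping by them is what lets you answer the "only on Ubuntu" and "since which commit" questions.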

Historical detection with analytics tools

This is where flaky test detection tools come in.

| Method | What it tells you | What it can't tell you |
|---|---|---|
| Playwright built-in (retries + HTML report) | Which tests flaked in this run | Whether this is a one-time flake or a pattern |
| --repeat-each stress testing | Whether a test is unstable under load | Whether it flakes in real CI conditions |
| Analytics tools (TestDino, etc.) | Stability trends, root cause classification, environment correlation | Depends on having enough historical run data |

Here's a finding I keep coming back to: research on co-occurring flaky test failures found that 75% of flaky tests belong to failure clusters, meaning they tend to fail together and share underlying causes. When one test flakes, look at what else failed in the same run. Error grouping clusters similar failures automatically. Instead of investigating 100 individual failures, you fix 3 root causes.
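A rough version of error grouping is easy to prototype. The sketch below is a simplified heuristic, not how any particular tool does it: it strips volatile details (numbers and quoted strings) from each message so that similar errors share one key. The `Failure` shape and both function names are hypothetical.

```typescript
// Hypothetical failure record; the message would come from your report or CI logs.
interface Failure { testId: string; message: string; }

// Replaces volatile details (quoted strings, then numbers) so similar errors share one key.
function normalizeError(message: string): string {
  return message
    .replace(/(["'`]).*?\1/g, '<str>')
    .replace(/\d+/g, '<n>')
    .trim();
}

// Groups test ids by normalized error signature.
function clusterFailures(failures: Failure[]): Record<string, string[]> {
  const clusters: Record<string, string[]> = {};
  for (const f of failures) {
    const key = normalizeError(f.message);
    (clusters[key] = clusters[key] || []).push(f.testId);
  }
  return clusters;
}
```

Two timeouts on different locators with different durations land in the same cluster, which is usually the signal that they share a cause.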

Once you've identified flaky patterns across runs, TestDino's MCP server lets you query that data directly from your IDE. Connect it to Claude or Cursor, then ask "which tests flaked most this week" or "what changed before this test started failing." It pulls test run history, failure details, and artifacts from TestDino into your editor so you can triage flaky tests without switching between dashboards.

Playwright-specific fixes that eliminate flakiness

Each fix below maps to a root cause from the table above. These are Playwright API patterns, not general testing advice.

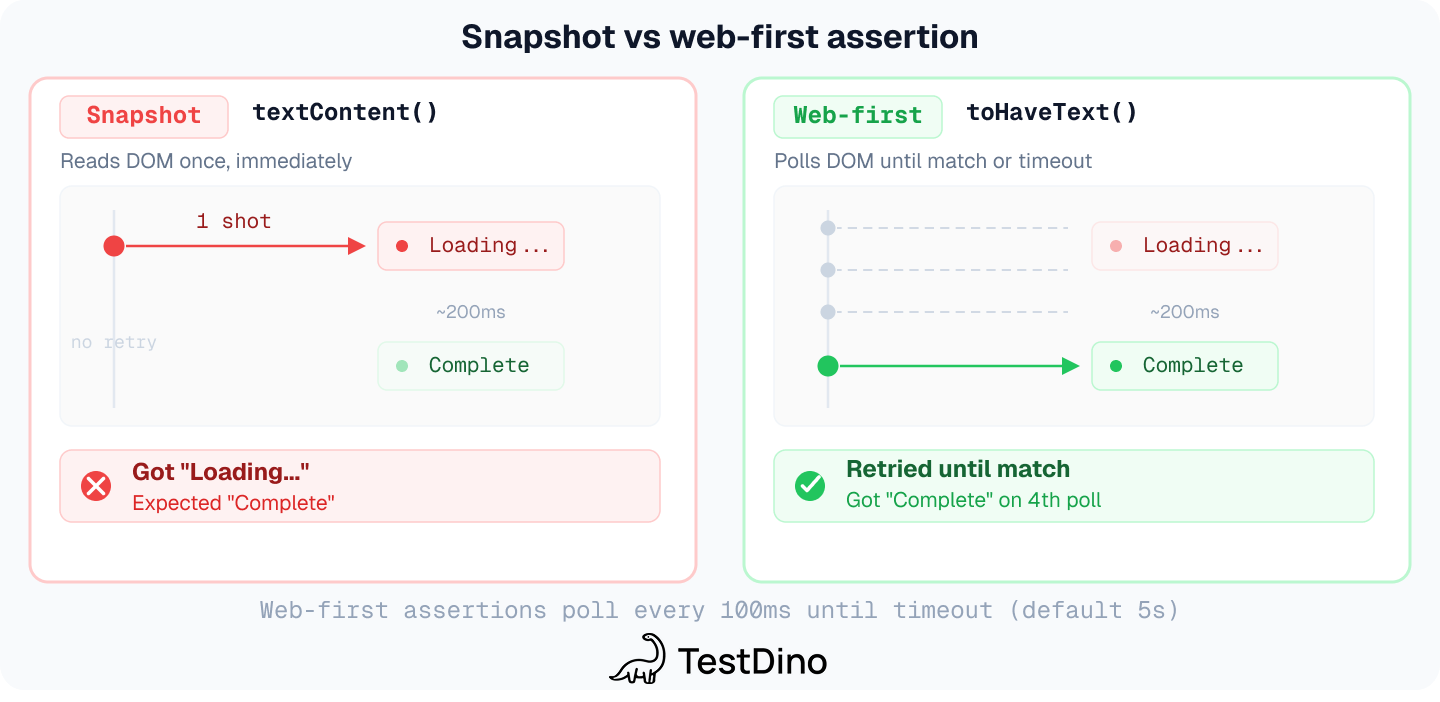

Replace arbitrary waits with web-first assertions

This is the single highest-impact fix you can make.

// This will flake

await page.waitForTimeout(2000);

await page.click('button#submit');

// This won't

await expect(page.getByRole('button', { name: 'Submit' })).toBeVisible();

await page.getByRole('button', { name: 'Submit' }).click();

Before an action such as click(), Playwright's auto-waiting runs a series of checks: the locator resolves to exactly 1 element, and that element is visible, stable (not animating), able to receive events (not obscured), and enabled. The exact set of checks varies by action.

Playwright has 26+ auto-retrying web-first assertions across locators and pages. The ones you'll use most:

| Assertion | What it checks |
|---|---|
| toBeVisible() / toBeHidden() | Element visibility |
| toBeEnabled() / toBeDisabled() | Interactive state |
| toHaveText() / toContainText() | Text content |
| toHaveValue() | Input value |
| toHaveCount() | Number of matching elements |
| toHaveAttribute() | HTML attribute |
| toHaveURL() / toHaveTitle() | Page-level checks |

If you're calling .textContent(), .getAttribute(), or .isVisible() and then asserting on the result with plain expect(), you're bypassing all of that.

// BAD: snapshot, no retry

const text = await page.locator('#status').textContent();

expect(text).toBe('Complete');

// GOOD: auto-retries until text matches or timeout

await expect(page.locator('#status')).toHaveText('Complete');

Why this matters: textContent() is a one-shot snapshot. It grabs whatever text is in the DOM at that exact millisecond and returns it immediately. If the UI hasn't updated yet, the assertion fails, even if it would have been correct 100ms later. expect(locator).toHaveText() is a web-first assertion that polls the locator repeatedly until the condition is met or the timeout expires (default 5s). It naturally waits for async updates, animations, or data fetches to settle.

Rule of thumb: Always prefer Playwright's built-in web-first assertions (toHaveText, toBeVisible, toHaveValue, etc.) over manually extracting values and asserting on them. They're retry-aware by design, which eliminates an entire class of flaky tests.

Note: waitForTimeout() is sometimes acceptable for third-party UI components with CSS animations that Playwright can't introspect. But it doesn't fix the root cause. Romano et al. (ICSE 2021) found that fixing the await mechanism was the most common fix for animation-related flakes (38.5%), while adding delays accounted for only 15.4%. Prefer disabling animations via reducedMotion: 'reduce' in your config (covered below) or waiting for the animation to complete programmatically.

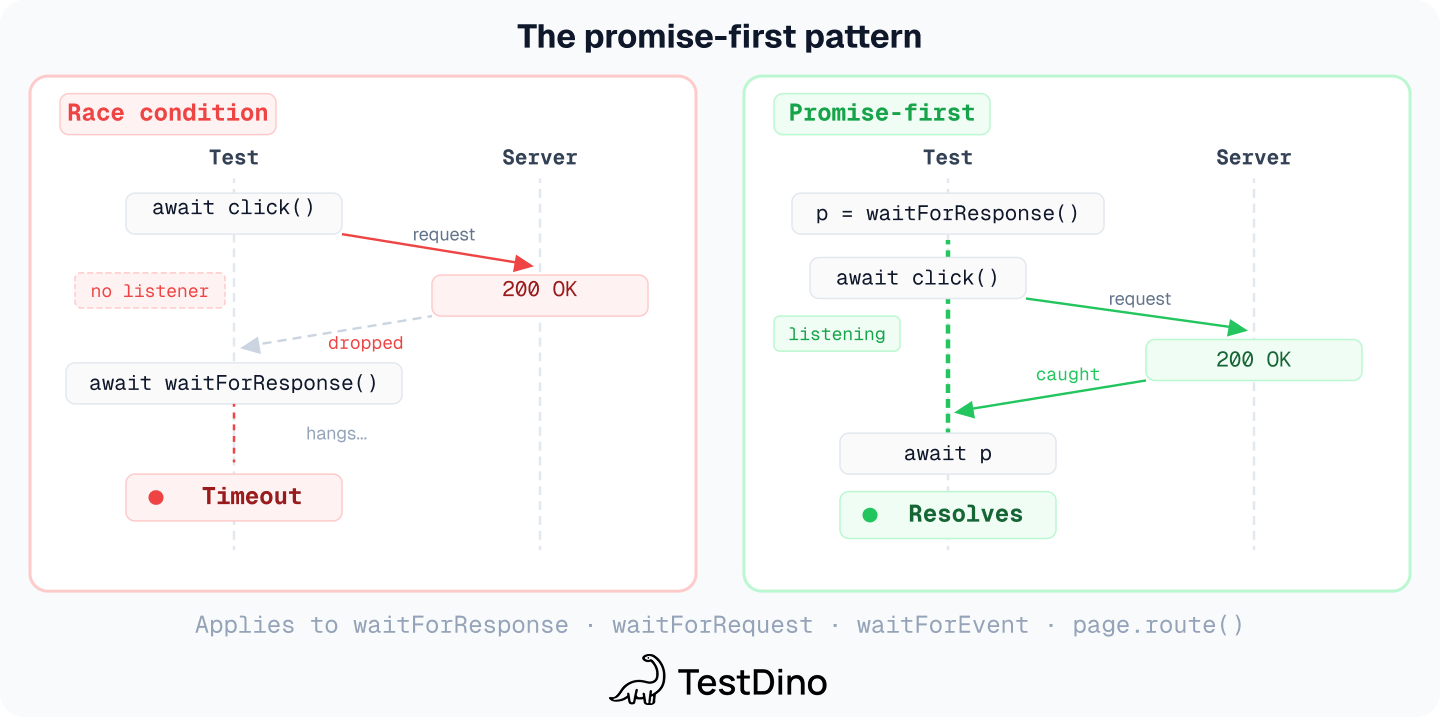

Fix race conditions with promise-first patterns

This is Playwright's most common race condition, and most teams hit it eventually.

// BAD: response might arrive before we start listening

await page.click('#submit');

const response = await page.waitForResponse('**/api/save');

// GOOD: start listening BEFORE triggering the action

const responsePromise = page.waitForResponse('**/api/save');

await page.click('#submit');

const response = await responsePromise;

If the response arrives before waitForResponse() is called, the promise never resolves and the test times out. The "promise-first, action-second" pattern applies to every event-based waitFor* method: waitForResponse, waitForRequest, and waitForEvent. (waitForURL is the exception: it checks persistent state, so it's safe to call after the action.)
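Why ordering matters is easier to see with the browser taken out of the picture. The toy model below is not Playwright code: `ToyPage` is a made-up stand-in whose `click()` delivers a response only to listeners that are already registered, which is the essence of any event-based wait.

```typescript
// Toy event source standing in for the page; not Playwright code.
class ToyPage {
  private listeners: Array<(url: string) => void> = [];
  onResponse(fn: (url: string) => void): void { this.listeners.push(fn); }
  // Delivers the response only to listeners registered at this moment.
  click(): void { for (const fn of this.listeners) fn('/api/save'); }
}

function listenAfterAction(): string[] {
  const page = new ToyPage();
  const seen: string[] = [];
  page.click();                                   // the response fires here
  page.onResponse(url => { seen.push(url); });    // too late: nothing left to observe
  return seen;
}

function listenBeforeAction(): string[] {
  const page = new ToyPage();
  const seen: string[] = [];
  page.onResponse(url => { seen.push(url); });    // listener is in place first
  page.click();
  return seen;
}
```

In this model `listenAfterAction()` never sees the response. In a real test the response usually takes long enough that the late listener still catches it, which is exactly why the bug shows up only intermittently.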

The same principle applies to page.route(). Set up route handlers before the navigation that triggers requests:

// BAD: route might miss the request

await page.goto('/dashboard');

await page.route('**/api/data', route => route.fulfill({ body: '[]' }));

// GOOD: route set up before navigation

await page.route('**/api/data', route => route.fulfill({ body: '[]' }));

await page.goto('/dashboard');

Handle overlays, toasts, and animations

Playwright's actionability checks do verify that an element receives events (isn't obscured). But overlays still cause flakes when they appear unpredictably between steps, change the intended click target, or need to be dismissed as part of the app flow.

For predictable overlays, wait for them to disappear:

// Wait for overlay to disappear, then act

await page.locator('.loading-spinner').waitFor({ state: 'hidden' });

await page.click('#submit');

For unpredictable overlays (cookie banners, notification toasts), use page.addLocatorHandler() to dismiss them automatically whenever they appear:

await page.addLocatorHandler(

page.getByRole('button', { name: 'Accept cookies' }), async () => {

await page.getByRole('button', { name: 'Accept cookies' }).click();

}

);

For CSS animations, disable them globally in your config instead of fighting them test by test:

use: {

contextOptions: { reducedMotion: 'reduce' },

}

This uses Playwright's built-in media emulation, which works across all browsers, not just Chromium. It only helps when the app honors the prefers-reduced-motion media query, which most well-built apps already do. Add it to your config and most animation flakiness disappears.

Note: Iframes and Shadow DOM handling

Playwright pierces the Shadow DOM by default, so you rarely need special handling there. However, iframes remain a source of flakiness — specifically when a frame detaches or reloads mid-test. Always use page.frameLocator() to create a pointer to the frame. This ensures Playwright re-retrieves the frame if it becomes stale, rather than failing with a "frame detached" error.

Use stable locators

Fragile selectors break when CSS classes get renamed or the DOM structure shifts.

// Fragile - breaks when CSS changes

await page.locator('.btn-primary').click();

// Stable - tied to semantics

await page.getByRole('button', { name: 'Submit' }).click();

// Also stable

await page.getByTestId('submit-button').click();

await page.getByLabel('Email address').fill('user@example.com');

Tip: Locators and ElementHandle are different. ElementHandle (from page.$()) doesn't auto-wait and points to a specific DOM node at a specific moment. Locator is a lazy reference that retries automatically. Playwright's official docs explicitly discourage ElementHandle usage.

Respect Playwright's Strict Mode

By default, Playwright locators are strict. If a locator resolves to more than one element, Playwright throws a strict mode violation. This is a stability feature — it prevents you from interacting with the wrong element.

Avoid the temptation to "fix" this with .first(), .last(), or .nth(). While they stop the error, they introduce logic flakiness: if the order of elements changes in the UI, your test might pass by clicking the wrong button. Instead, use filtering by text or aria-roles to ensure your locator is unique.

Isolate every test

Test order dependency is a common source of flaky tests. It only shows up when test order changes, like when you enable parallel execution.

// Bad - depends on another test's data

test('view profile', async ({ page }) => {

await page.goto('/profile/user-created-by-other-test');

});

// Good - creates its own data

test('view profile', async ({ page, request }) => {

const response = await request.post('/api/users', {

data: { name: 'Test User' }

});

const user = await response.json();

await page.goto(`/profile/${user.id}`);

});

Every test creates its own state, uses it, and cleans up. Shared state plus parallelism equals race conditions. Check our reusable test patterns guide and the Playwright fixtures docs for fixture-based approaches.

Mock network dependencies

External APIs introduce variability: different response times, outages, rate limits. That's the external dependencies category from the root cause table.

await page.route('**/api/users', async (route) => {
  // Await fulfill so errors surface instead of becoming a floating promise
  await route.fulfill({
    status: 200,
    contentType: 'application/json',
    body: JSON.stringify({ users: [{ id: 1, name: 'Alice' }] }),
  });
});

Playwright's network mocking API makes this straightforward. Mock third-party services (payment gateways, email, analytics). Don't mock critical end-to-end paths where you're validating actual integrations.

One gotcha: service workers can intercept requests before page.route() sees them, causing mock failures that look intermittent. Block them:

const context = await browser.newContext({

serviceWorkers: 'block',

});

Control time to eliminate non-determinism

Time-based flakiness is more common than most teams realize. Martin Fowler describes a classic example: a test queries "todos due in the next hour" and gets different results depending on when it runs. GitHub's engineering team encountered tests that assumed February has 28 days — passing for 3 years straight, then failing every leap year. And Kraken Technologies reports tests that broke at midnight boundaries and during DST transitions, because they compared timestamps across clock changes.

These failures share a root cause: the test depends on the system clock, which varies across environments and dates. Use Playwright's Clock API to control Date.now() and setTimeout/setInterval directly instead of waiting in real time.

// Set the clock to a known date to prevent "weekend" or "end-of-month" flakes

await page.clock.install({ time: new Date('2026-03-18T10:00:00') });

await page.goto('/dashboard');

await expect(page.getByText('Wednesday, March 18')).toBeVisible();

// Fast-forward time to trigger a 5-minute timeout without actually waiting

await page.clock.fastForward('05:00');

await expect(page.getByText('Session Expired')).toBeVisible();

This eliminates the variability of the system clock and the CI runner's speed, making time-sensitive assertions deterministic. Without clock control, these tests become what Michael Swart catalogs as the hardest category to diagnose — they pass locally, pass in CI most days, and only fail under specific calendar or timezone conditions.

Configure Playwright timeouts to prevent false failures

Playwright has multiple timeout settings, and you should configure each one separately:

export default defineConfig({

timeout: 60_000, // 60s per test

expect: {

timeout: 10_000, // 10s for assertions

},

use: {

actionTimeout: 15_000, // 15s for clicks, fills

navigationTimeout: 30_000, // 30s for page.goto()

},

retries: process.env.CI ? 2 : 0,

});

If page.goto() keeps timing out in CI, switch from the default load event to domcontentloaded. The load event waits for ALL resources (images, fonts, iframes), which is 2-5x slower in CI than locally:

await page.goto('/dashboard', { waitUntil: 'domcontentloaded' });

For tests you know are slow, use test.slow() to give them 3x the default timeout instead of raising the global value:

test('heavy data export', async ({ page }) => {

test.slow();

// this test now gets 3x the default timeout

});

Tune parallel execution

Too many workers on a CI runner causes resource starvation. That's the RAFT problem from earlier.

export default defineConfig({

workers: process.env.CI ? 2 : undefined,

fullyParallel: false, // file-level parallelism only

});

fullyParallel: true runs tests within the same file in parallel. This breaks any test that depends on execution order within a file. Start with file-level parallelism only.

When you're confident specific tests are independent, opt in per describe block instead of flipping the global switch:

test.describe('independent cart tests', () => {

test.describe.configure({ mode: 'parallel' });

test('add item', async ({ page }) => { /* ... */ });

test('remove item', async ({ page }) => { /* ... */ });

});

If tests pass with --workers=1 but fail with --workers=4, you've got either shared state or resource contention. The fix depends on which one. To tell them apart, repeat the run 100 times with 4 workers (--repeat-each 100 --workers 4) and look at the pattern of failures.

How to prevent flaky Playwright tests

Fixing existing flakes is half the battle. The other half is stopping new ones from entering the codebase. This is the Prevent pillar of the 4-pillar framework.

Watch out for locator.all()

This is a common trap. locator.all() does NOT auto-wait. It returns whatever elements exist at that exact moment. If the list hasn't finished loading, you get a partial result. The official docs explicitly warn about this.

// This will flake if the list is still loading

for (const item of await page.getByRole('listitem').all()) {

await item.click();

}

// Safe: wait for the list to fully load first

await expect(page.getByRole('listitem')).toHaveCount(5, { timeout: 10_000 });

for (const item of await page.getByRole('listitem').all()) {

await item.click();

}

Use expect().toPass() for complex assertions

When you need to retry a block of multiple assertions together:

await expect(async () => {

const response = await page.request.get('/api/status');

expect(response.status()).toBe(200);

expect(await response.json()).toHaveProperty('ready', true);

}).toPass({ timeout: 30_000 });

Gotcha: Without an explicit timeout, expect.toPass() defaults to timeout 0 and does not use the custom expect timeout. Always pass a timeout.

Use expect.poll() for values that change over time

await expect.poll(async () => {

return await page.locator('.item').count();

}, { timeout: 10_000 }).toBeGreaterThan(5);

Catch missing await with ESLint

Async Playwright calls that aren't awaited race the rest of the test. Enable @typescript-eslint/no-floating-promises in your ESLint config:

{

"rules": {

"@typescript-eslint/no-floating-promises": "error"

}

}

This catches page.click() without await before it becomes an intermittent failure. Run it in CI alongside your tests.

Configure traces for CI debugging

Always enable trace recording in CI so you have evidence when tests fail:

export default defineConfig({

use: {

trace: 'on-first-retry',

screenshot: 'only-on-failure',

video: 'retain-on-failure',

},

});

trace: 'on-first-retry' only generates traces when a test needs a retry. Low storage cost, high debugging value.

Full GitHub Actions workflow for Playwright CI

# .github/workflows/e2e.yml
name: E2E Tests
on: [push, pull_request]
jobs:
  test:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-node@v4
        with: { node-version: 20 }
      - run: npm ci
      - run: npx playwright install --with-deps
      - run: npx playwright test --retries=2 --reporter=html
      - uses: actions/upload-artifact@v4
        if: always()
        with:
          name: playwright-report
          path: playwright-report/

The if: always() on the upload step is important. Without it, you lose the report on failed runs, which is exactly when you need it most.

Think of Playwright as your fastest user. If a test exposes a timing issue, a real user can hit that same bug on a slow connection or old device. Sometimes the right fix isn't in the test at all. It's in the app.

CI and infrastructure fixes

These patterns address the environment and RAFT categories. They're not test code changes, they're infrastructure fixes.

Docker /dev/shm exhaustion

Chromium-based browsers use /dev/shm for shared memory. Docker defaults to 64MB, which causes browser crashes and intermittent failures. Playwright's Docker docs recommend --ipc=host:

# Recommended: share IPC namespace (Playwright's official recommendation)

docker run --ipc=host playwright-tests

# Alternative: increase /dev/shm

docker run --shm-size=1g playwright-tests

Run tests against production builds, not dev servers

Vite dev server and Next.js dev mode have HMR, hot-reload timing, and error overlays that interact badly with Playwright. These create flakes that don't exist in production.

webServer: {

command: 'npm run build && npx serve dist -p 5173',

url: 'http://127.0.0.1:5173',

timeout: 60_000,

reuseExistingServer: !process.env.CI,

},

Browser-specific flakiness

WebKit is supported on Linux CI, but some platform-dependent behavior can differ from Safari on macOS. If Safari fidelity matters, run WebKit on macOS. In CI, optimize for reproducibility: start with workers=1, shard across jobs if needed, and pin your Playwright version and Docker image instead of floating latest.

Lock down the test environment

Fix viewport, locale, timezone, and permissions in your config so you don't get environment-dependent behavior:

use: {

viewport: { width: 1280, height: 720 },

locale: 'en-US',

timezoneId: 'America/New_York',

},

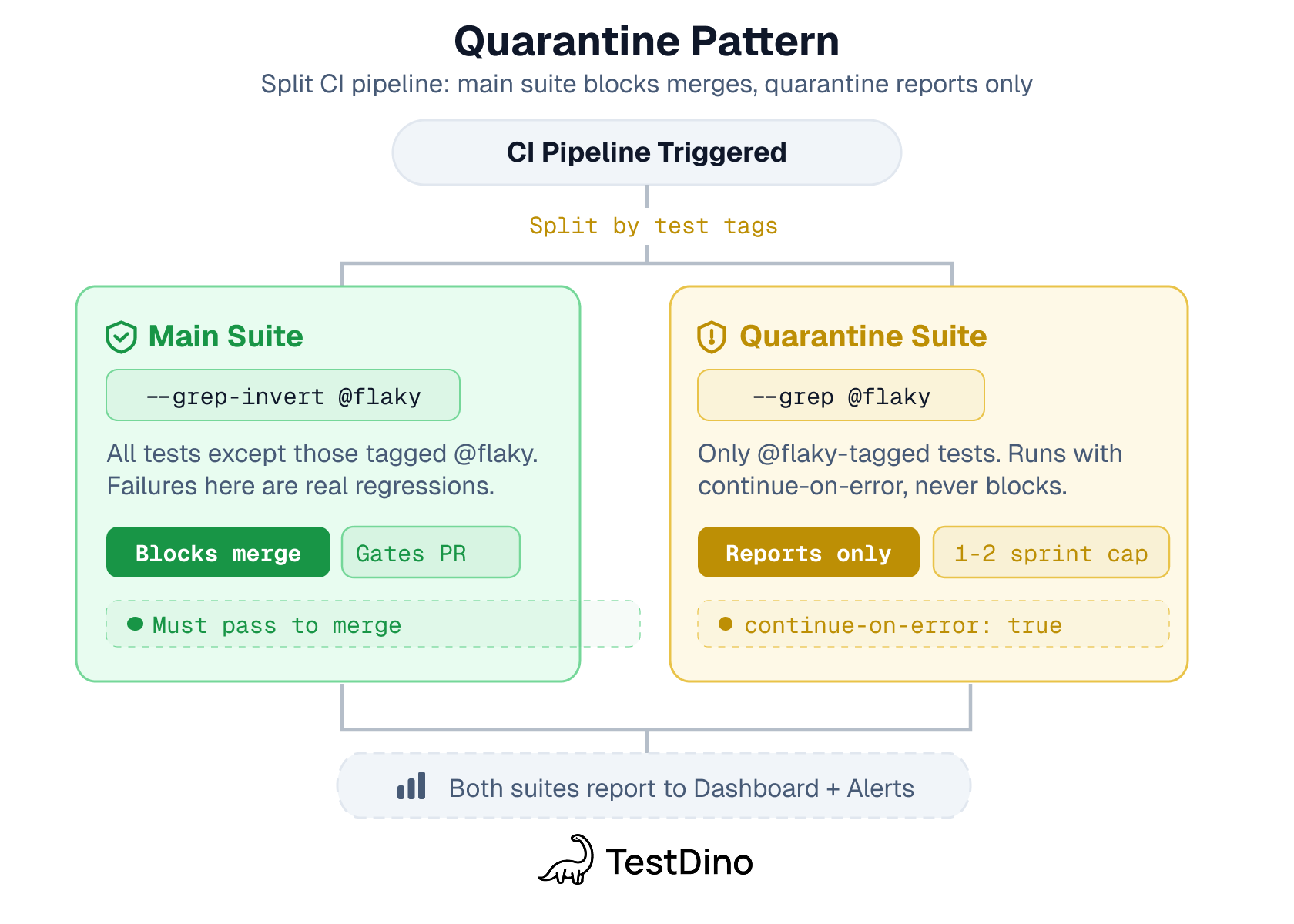

How to quarantine flaky tests in Playwright

I've seen teams with 40+ quarantined tests that nobody looked at for months. No ticket, no owner, no deadline. At that point, you don't have a test suite anymore. You have a test suite minus 40 tests.

Quarantine works, but only with accountability. This is the Triage pillar of the 4-pillar framework.

Tag it:

test('@flaky login flow under load', async ({ page }) => {

// test body

});

You can also use Playwright annotations for structured metadata. Note that test.fixme() skips the test entirely. Use it for tests you want to stop running, not for quarantine, where the test should still execute in a separate job.

Exclude from the main pipeline, run separately:

npx playwright test --grep-invert @flaky # main pipeline

npx playwright test --grep @flaky # separate non-blocking job

Create a ticket immediately. Not tomorrow. Assign a person, not "the team." If a quarantined test hasn't been fixed within 2 sprints, escalate it. Either fix it or delete it.
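The accountability rules are simple enough to enforce with a script in the non-blocking job. This is a minimal sketch, assuming you keep a small registry of quarantined tests next to the @flaky tags and that a sprint is two weeks; the `Quarantined` shape, the constants, and `auditQuarantine` are all hypothetical.

```typescript
// Hypothetical quarantine registry entry, kept alongside the @flaky tag.
interface Quarantined { test: string; owner?: string; ticket?: string; since: string; } // since: ISO date

const SPRINT_DAYS = 14;               // assumption: two-week sprints
const MAX_AGE_DAYS = 2 * SPRINT_DAYS; // escalate after 2 sprints

// Returns human-readable violations; an empty array means the quarantine list is healthy.
function auditQuarantine(entries: Quarantined[], today: Date): string[] {
  const problems: string[] = [];
  for (const e of entries) {
    if (!e.owner) problems.push(`${e.test}: no owner`);
    if (!e.ticket) problems.push(`${e.test}: no ticket`);
    const ageDays = Math.floor((today.getTime() - new Date(e.since).getTime()) / 86_400_000);
    if (ageDays > MAX_AGE_DAYS) problems.push(`${e.test}: quarantined ${ageDays} days, escalate`);
  }
  return problems;
}
```

Failing the job when the returned list is non-empty keeps quarantine from turning into a place where tests go to be forgotten.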

Team target (heuristic): aim for 95-98% pass rate with less than 2% flaky. These are operational targets, not universal rules.

How teams solved flaky tests at scale

Every company below used a different stack. The solutions were the same.

- Slack built "Project Cornflake" to automate detection and suppression, dropping test job failure rate from 57% to under 4%.

- Atlassian built "Flakinator" for the Jira Frontend repo, which auto-quarantines flaky tests, assigns an owner via code ownership, and creates a Jira ticket with a deadline.

- GitHub went from 1 in 11 commits (9%) having a red build from a flaky test to 1 in 200 (0.5%), an 18x improvement.

- Meta built the Probabilistic Flakiness Score (PFS), treating flakiness as a spectrum rather than a binary.

What you can do: Automate detection. Quarantine with ownership. Give every test a stability score and track it over time. Tools like TestDino do this for Playwright out of the box. Data-driven QA isn't about fancy tooling. It's about making the problem visible so more people fix it.

Tracking progress: the metrics that matter

You can't reduce Playwright flaky tests by feel. Track these numbers:

| Metric | What it measures | Target |
|---|---|---|
| Flaky rate | % of tests needing retries to pass | Below 2% |
| Failure rate | Total failures including real bugs | Below 5% |
| MTTR | Time from failure detection to fix deployed | Minimize |
| Duration trends | Tests getting slower over time | Stable or decreasing |
| Environment correlation | Failures tied to specific runners or environments | Identify and fix |
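The first two metrics fall out of simple counts per run. A minimal sketch, assuming you have per-run totals of tests that passed first time, passed after a retry, and stayed red; the `RunStats` shape and `computeRates` name are hypothetical, and counting flaky tests toward the pass rate is my assumption, not a standard.

```typescript
// Hypothetical per-run summary of executed (non-skipped) tests.
interface RunStats { passed: number; flaky: number; failed: number; }

// flakyRate: share of tests that needed a retry to pass.
// failureRate: share that stayed red after all retries.
// passRate: share that ended green (assumption: flaky tests count as passing here).
function computeRates(s: RunStats): { flakyRate: number; failureRate: number; passRate: number } {
  const total = s.passed + s.flaky + s.failed;
  if (total === 0) return { flakyRate: 0, failureRate: 0, passRate: 0 };
  return {
    flakyRate: s.flaky / total,
    failureRate: s.failed / total,
    passRate: (s.passed + s.flaky) / total,
  };
}
```

For a run with 90 clean passes, 6 flaky tests, and 4 failures, this gives a 6% flaky rate and a 4% failure rate, so the flaky rate is still above the 2% target.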

Here's what progress looks like when you're actively fixing flaky tests:

| Week | Pass rate | Flaky rate | Avg suite duration |
|---|---|---|---|
| 1 | 87% | 15% | 4m 23s |
| 2 | 91% | 11% | 3m 58s |
| 3 | 94% | 7% | 3m 45s |
| 4 | 96% | 3% | 3m 30s |

The duration drop is the part people miss. As flaky tests get fixed, retries disappear, and your total suite time shrinks. That's the ROI number your engineering manager wants to see.

Test reporting tools that track these across runs make patterns obvious. Without them, you're guessing.

Code review checklist for Playwright tests

Before approving any test PR, check for these common flakiness sources:

- Does it use waitForTimeout() or page.waitForSelector() instead of web-first assertions?

- Does it use CSS or XPath selectors instead of role-based locators?

- Does it depend on data created by another test?

- Does it call external APIs without mocking them?

- Does it use locator.all() without waiting for the list to fully load?

- Does it use ElementHandle (via page.$()) instead of Locator?

- Does it hardcode dates, random values, or timezone-sensitive logic?

- Does it have tight timeouts that might fail on slower CI runners?

- Is every async Playwright call properly awaited?

- Does it set up waitForResponse or page.route() before the action that triggers the request?

This takes 2 minutes during review and catches most flaky tests before they hit the main branch.

Conclusion

If you're staring at a flaky suite and don't know where to start, here's your Monday morning plan: enable --fail-on-flaky-tests in your pre-merge pipeline. Audit your top 5 flakiest tests with trace viewer. Then set up cross-run tracking so you can see patterns instead of guessing.

The key takeaways:

- Fix async waits first. Research consistently shows timing issues are the leading cause of UI flaky tests. Replace waitForTimeout() with web-first assertions; this is often the highest-leverage fix.

- Check your infrastructure. 46.5% of flaky tests are resource-affected. If it passes locally but fails in CI, the code might be fine. The runner isn't.

- Use promise-first patterns. Set up waitForResponse and page.route() before the action that triggers the request. This is Playwright's most common race condition.

- Quarantine with accountability. Tag, exclude, ticket, assign, fix. In that order. Apply the 4-pillar framework (Detect, Notify, Triage, Prevent) as a system, not a one-time cleanup.
