Write Playwright Tests with Cursor (with Examples)

Learn how to use Cursor with Playwright MCP to generate tests, debug failures, and reduce flaky test issues in real projects.

Have you ever asked an AI to write Playwright tests for you and ended up with code that works perfectly in a demo but breaks on a real app?

If you write Playwright tests regularly, you already know the pain:

- finding stable locators
- covering real user flows
- fixing tests after UI changes
- chasing flaky CI failures

This guide shows how to write Playwright tests with Cursor and Playwright MCP in a way that actually works in real projects. You will set up Cursor, load Playwright skills, generate your first test, view reports with TestDino, and fix unstable tests using the Healer agent.

Why Cursor for Playwright tests?

Cursor is a VS Code-based editor with AI built into the workflow. It can read your codebase, connect to MCP servers, and switch between models from OpenAI, Anthropic, Google, and Cursor's own Composer model.

For Playwright testing, this helps the AI generate tests that match your project structure and run properly in CI.

Most AI coding tools generate tutorial-level Playwright tests. They often use fragile CSS selectors, skip authentication flows, and ignore your project's fixtures and patterns.

Cursor improves this using three core capabilities:

- MCP support – connect Cursor to the Playwright MCP server so the AI can run a real browser, inspect the accessibility tree, and generate locators from the live page state.

- Playwright Skills – structured markdown guides that teach the AI proper Playwright patterns and best practices.

- .cursorrules – project-level rules that enforce locator strategy, test structure, and team conventions automatically.

Together, these give the AI real browser context, structured knowledge, and enforced rules. The result is Playwright tests that match your codebase from the first generation instead of needing multiple manual fixes.

Prerequisites

Before starting, make sure you have these in place:

- Node.js 18 or newer installed and available on your command line
- Playwright installed in your project (npm init playwright@latest if starting fresh)
- Cursor IDE downloaded and running (any paid plan works; the free tier has limited agent requests)
- Playwright browsers installed (npx playwright install --with-deps)
- A working Playwright project with at least one passing test, so the AI has a reference spec to learn from

If you are starting from zero, run npm init playwright@latest first and get one basic test passing before adding AI to the workflow.

Connect Playwright MCP to Cursor

Playwright MCP is a Model Context Protocol server that connects AI agents to a real browser through Playwright. It lets Cursor execute, inspect, and debug real browser sessions instead of only generating test code.

There are 2 ways to set it up.

- Quick setup: click the "Add Playwright MCP to Cursor" link. It configures everything automatically in seconds.

- Manual setup: if you want more control, follow these steps.

Install Playwright MCP as a dev dependency:

npm install --save-dev @playwright/mcp

The --save-dev flag adds it as a development dependency since it is only needed during local testing, not in production builds.

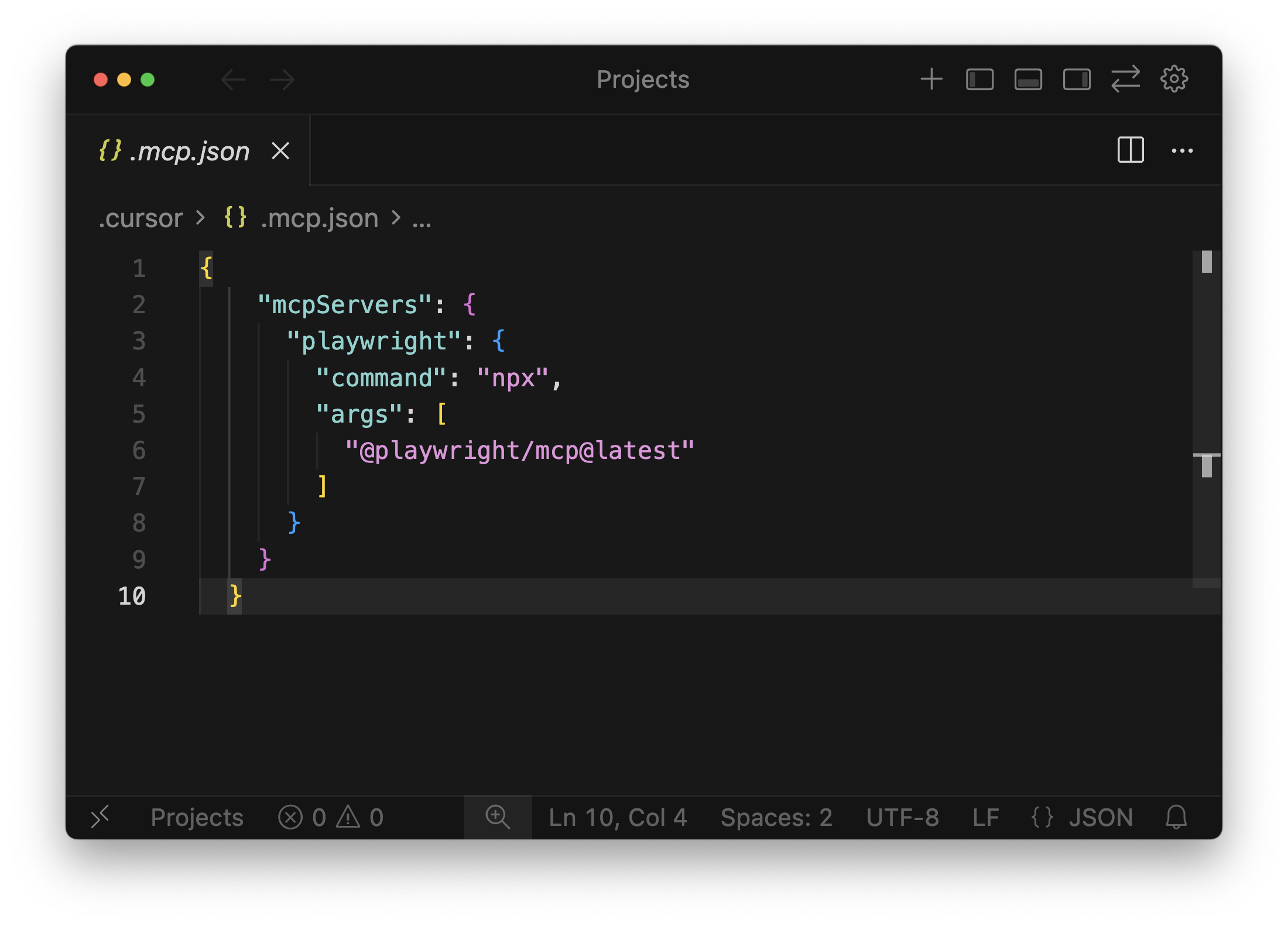

Next, open Cursor and go to Settings > MCP (Model Context Protocol). Add Playwright MCP as a local MCP server. Your project-level .cursor/mcp.json should look like this:

{
  "mcpServers": {
    "playwright": {
      "command": "npx",
      "args": ["@playwright/mcp@latest"]
    }
  }
}

Restart Cursor to apply the configuration.

If you still run into installation issues, check out this YouTube video.

Verify it works: Ask Cursor to open a real browser session, navigate to any URL, and take a screenshot. If the browser launches and Cursor returns a screenshot, the connection is live.

Note: Playwright MCP is designed for interactive development and debugging. For large regression suites or performance-sensitive test runs, MCP adds unnecessary overhead. Its strength is test creation and debugging, not bulk execution.

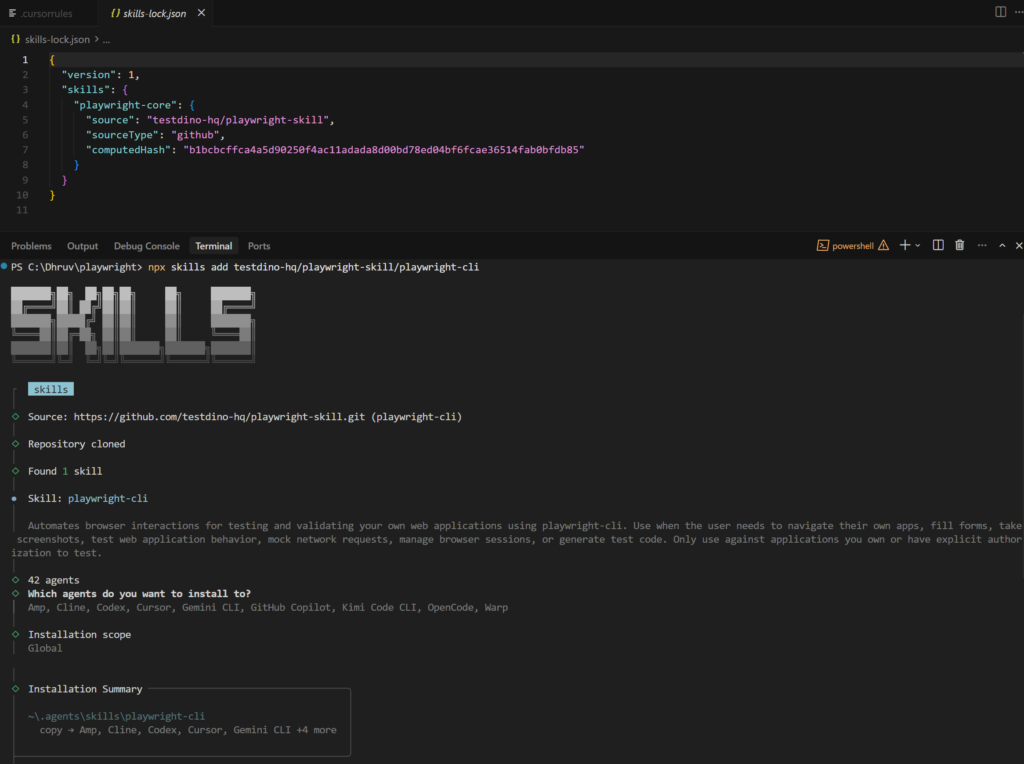

Use Playwright CLI for batch test generation

Playwright MCP works well for interactive sessions, but it sends the full accessibility tree and console output on every response. That burns through tokens fast. For longer sessions where you are generating multiple tests, the Playwright CLI is more token-efficient.

npm install -g @playwright/cli@latest
playwright-cli install
npx skills add testdino-hq/playwright-skill/playwright-cli

Load Playwright Skills for structure

AI agents write decent Playwright tests out of the box, but they fall apart on real-world sites. Wrong selectors, broken auth, flaky CI runs. The root cause is that agents do not have context about your app or about Playwright best practices beyond their training data.

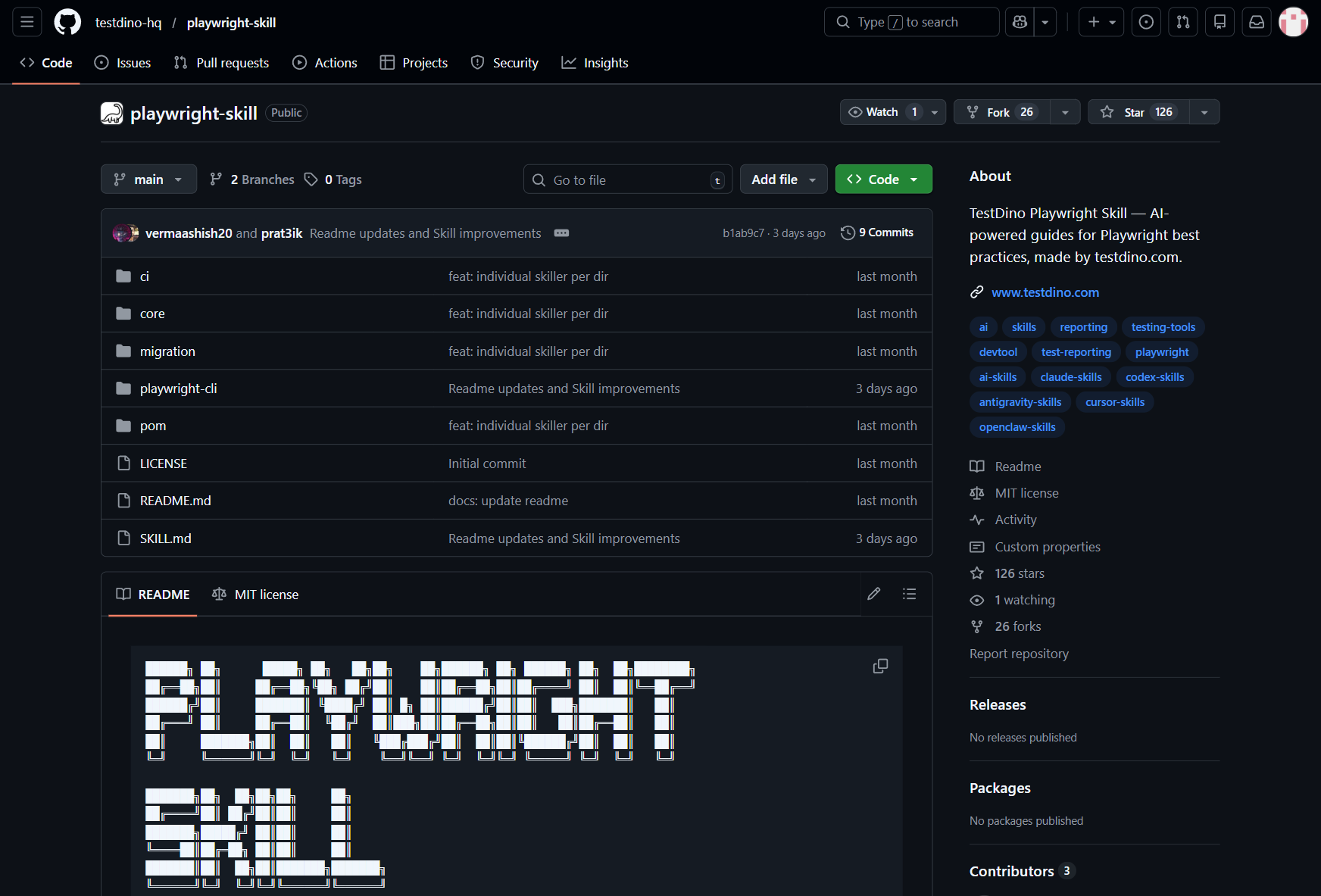

Playwright Skills fix this. A Skill is a curated collection of markdown guides that teach AI coding agents how to write production-grade Playwright tests. The Playwright Skill repository maintained by TestDino contains 70+ guides organized into five packs:

- core/ -- 46 guides covering locators, assertions, waits, auth, fixtures, and more
- playwright-cli/ -- 11 guides for CLI browser automation
- pom/ -- 2 guides for Page Object Model patterns
- ci/ -- 9 guides covering GitHub Actions, GitLab CI, and parallel execution
- migration/ -- 2 guides for moving from Cypress or Selenium

Install them with a single command:

# Install all 70+ guides
npx skills add testdino-hq/playwright-skill

# Or install individual packs
npx skills add testdino-hq/playwright-skill/core
npx skills add testdino-hq/playwright-skill/ci
npx skills add testdino-hq/playwright-skill/playwright-cli

The difference is noticeable. Without the Skill loaded, an AI agent generates tutorial-quality code with brittle CSS selectors. With the Skill, it uses getByRole() locators, proper wait strategies, and structured test patterns that actually pass against real sites.

The repo is MIT licensed. Fork it and add your team's naming conventions, remove guides for frameworks you do not use, or add new guides for your internal tools. The structure stays the same, and your AI agent keeps working.

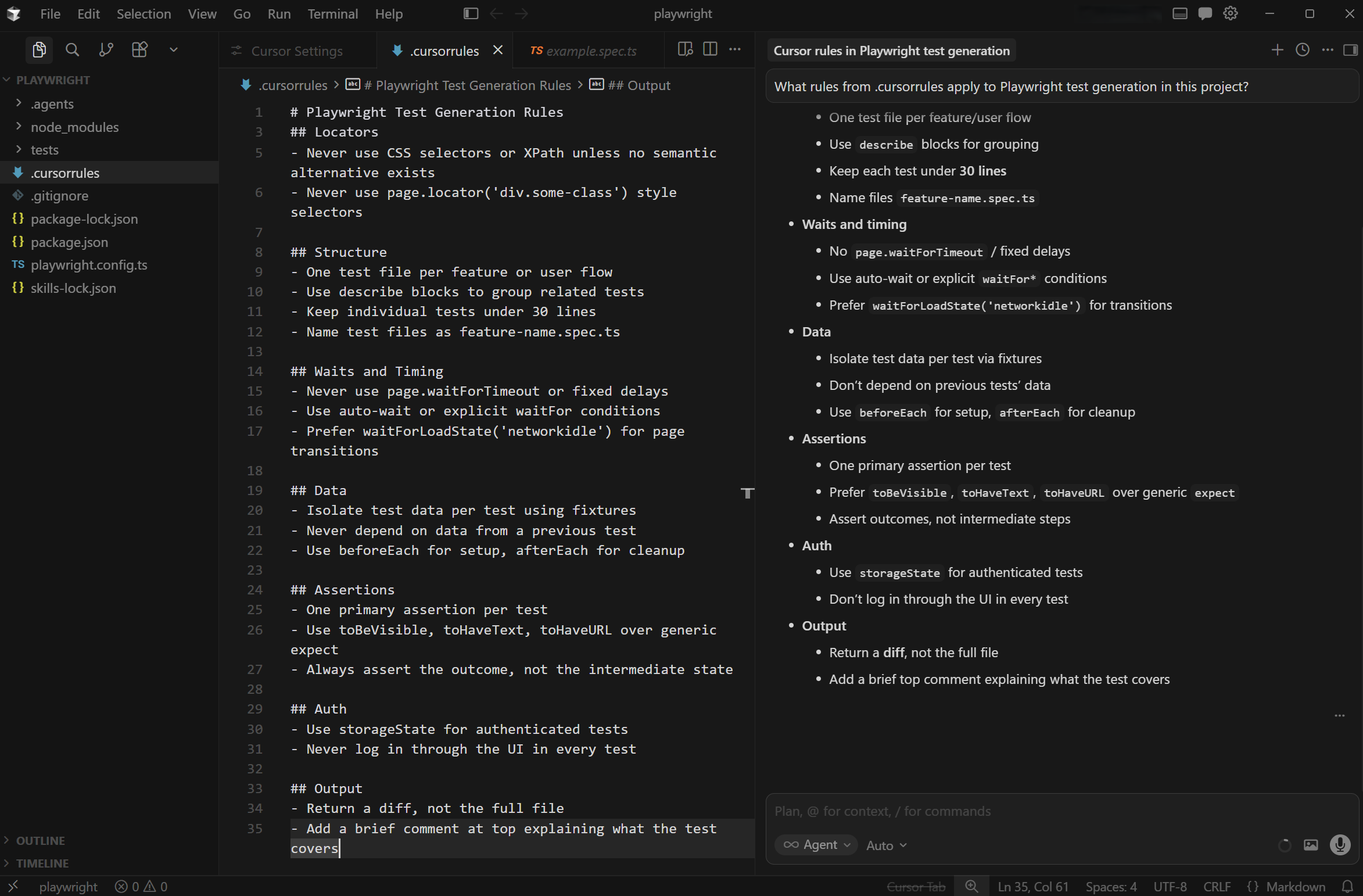

Write .cursorrules for your team

Skills give the agent general Playwright knowledge. .cursorrules give it your team's specific conventions. Without rules, Cursor will invent its own structure, and every generated test will look different.

Create a .cursorrules file in your project root. Here is a practical starting point for Playwright projects:

# Playwright Test Generation Rules

## Locators
- Always prefer getByRole, getByTestId, and getByLabel
- Never use CSS selectors or XPath unless no semantic alternative exists
- Never use page.locator('div.some-class') style selectors

## Structure
- One test file per feature or user flow
- Use describe blocks to group related tests
- Keep individual tests under 30 lines
- Name test files as feature-name.spec.ts

## Waits and Timing
- Never use page.waitForTimeout or fixed delays
- Use auto-wait or explicit waitFor conditions
- For page transitions, assert on the destination (toHaveURL, toBeVisible) instead of waitForLoadState('networkidle'), which Playwright discourages for testing

## Data
- Isolate test data per test using fixtures
- Never depend on data from a previous test
- Use beforeEach for setup, afterEach for cleanup

## Assertions
- One primary assertion per test
- Use toBeVisible, toHaveText, toHaveURL over generic expect
- Always assert the outcome, not the intermediate state

## Auth
- Use storageState for authenticated tests
- Never log in through the UI in every test

## Output
- Return a diff, not the full file
- Add a brief comment at top explaining what the test covers

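This file is loaded automatically by Cursor for every AI interaction in the project. When you ask Cursor to generate a test, it follows these rules without you repeating them in every prompt. Newer Cursor versions also support project rules in a .cursor/rules directory and treat the single .cursorrules file as legacy, but it still works.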

Share this file across your team through version control. Everyone gets the same AI behavior, which means consistent test output across developers.

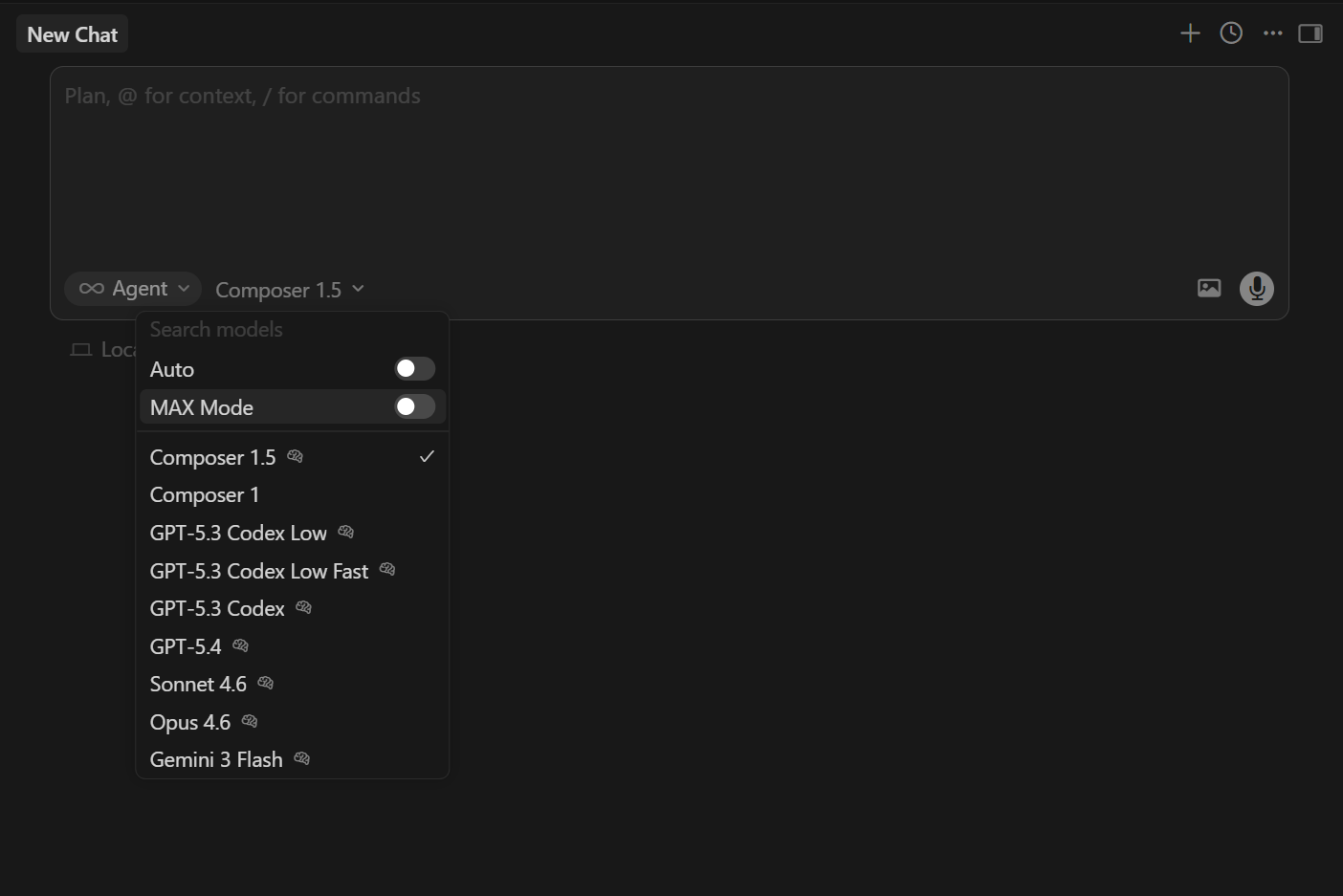

Pick the right model for test generation

Cursor lets you switch between models per conversation. This is useful because different models excel at different parts of the test generation workflow.

Composer 1.5 vs Claude Sonnet 4.6 vs GPT-5.4

Composer 1.5 is Cursor's own model, optimized for speed inside the editor. Generations feel near-instant, which makes it ideal for rapid iteration. It handles simple test generation and inline edits well. For straightforward specs where you have clear context and rules, Composer gets the job done fast.

Claude Sonnet 4.6 is the best value option for test generation. It scores 79.6% on SWE-bench at $3/$15 per million tokens, which is within striking distance of frontier models at a fraction of the cost. Sonnet handles multi-file context well and follows .cursorrules reliably. It is the default pick for most Playwright test generation work.

GPT-5.4 (or GPT-5.2 Codex, depending on Cursor's current model list) is fast at terminal execution and code review. It tends to be more concise in its output. Use it when you want quick scaffolding or when reviewing generated diffs.

Quick comparison table (speed, accuracy, cost)

| Model | Speed | Test Accuracy | Cost per 1M Tokens (in/out) | Best For |
|---|---|---|---|---|
| Composer 1.5 | Very fast | Good | Included in plan | Rapid iteration, simple tests |
| Claude Sonnet 4.6 | Moderate | High | $3 / $15 | Multi-file test generation, complex flows |
| GPT-5.2 Codex | Fast | Good | $6 / $30 | Quick scaffolding, code review |
| Claude Opus 4.6 | Slower | Highest | $5 / $25 | Complex refactors, large suites |

Accuracy here means how often the generated test runs without manual edits on first try, given proper context (MCP, skills, and rules).
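The per-token prices in the table make it easy to estimate what a generation session costs. Below is a minimal sketch, assuming the list prices shown above (vendors change them often) and a hypothetical session of 200,000 input and 20,000 output tokens. estimateCostUsd is an illustrative helper, not part of any Cursor API, and what you actually pay depends on your Cursor plan.

```typescript
// Prices in USD per 1M tokens, copied from the comparison table above.
// Treat them as a snapshot: vendors change pricing frequently.
interface ModelPrice {
  inputPerMillion: number;
  outputPerMillion: number;
}

const prices: Record<string, ModelPrice> = {
  "Claude Sonnet 4.6": { inputPerMillion: 3, outputPerMillion: 15 },
  "GPT-5.2 Codex": { inputPerMillion: 6, outputPerMillion: 30 },
  "Claude Opus 4.6": { inputPerMillion: 5, outputPerMillion: 25 },
};

// cost = input tokens * input price + output tokens * output price,
// with prices scaled from "per 1M tokens" down to "per token".
function estimateCostUsd(model: string, inputTokens: number, outputTokens: number): number {
  const price = prices[model];
  if (!price) throw new Error(`No price listed for ${model}`);
  const cost =
    (inputTokens / 1_000_000) * price.inputPerMillion +
    (outputTokens / 1_000_000) * price.outputPerMillion;
  // Round to cents so floating-point noise does not leak into the output.
  return Math.round(cost * 100) / 100;
}

// Hypothetical session: 200k input tokens (context, page snapshots), 20k output tokens.
for (const model of Object.keys(prices)) {
  console.log(`${model}: $${estimateCostUsd(model, 200_000, 20_000).toFixed(2)}`);
}
```

With these numbers the session comes to about $0.90 on Sonnet, $1.50 on Opus, and $1.80 on GPT-5.2 Codex. Input tokens make up most of the bill in this example, which is why the token savings described in the Playwright CLI section add up over longer sessions.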

How to switch models per conversation

Click the model selector dropdown in the Composer toolbar or the Chat panel. Select the model you want for the current conversation. Each conversation can use a different model. You can also switch mid-conversation if the current model is not producing good results.

A practical pattern: use Composer for generating individual test specs, switch to Claude Sonnet for multi-file refactors like moving to page objects or rebuilding fixtures, and use Opus only when dealing with complex test infrastructure changes that span many files.

Generate your first test

With MCP connected, the CLI set up, Skills loaded, rules in place, and a model selected, you are ready to generate a test.

Start with a simple prompt so Cursor focuses on the flow and uses your project context.

There are two main ways to create test cases:

- Using MCP
- Using CLI

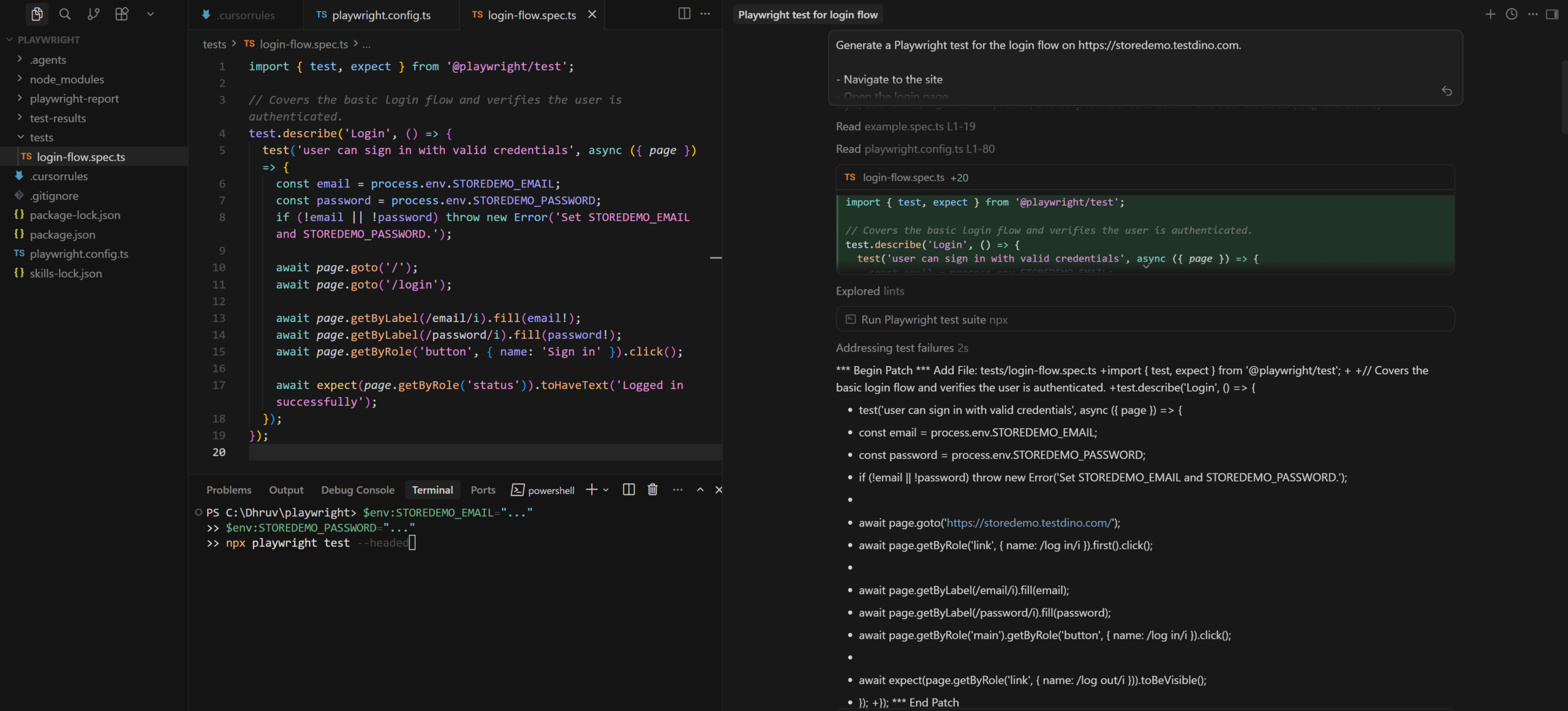

Example 1: Generate a test using Playwright MCP

Here is a prompt that works well with the setup from the previous steps:

Generate a Playwright test for the login flow on https://storedemo.testdino.com.

- Navigate to the site

- Open the login page

- Sign in with valid credentials

- Verify the user is logged in

Use getByRole or getByTestId locators.

Use Playwright MCP

This works because the flow is clear and Cursor applies your .cursorrules, Skills, and existing project structure automatically.

What Cursor generates

With the setup done correctly, you get a clean test that follows Playwright best practices.

import { test, expect } from '@playwright/test';

// Covers the basic login flow and verifies the user is authenticated.
test.describe('Login', () => {
  test('user can sign in with valid credentials', async ({ page }) => {
    // Credentials come from the environment, never from the spec itself.
    const email = process.env.STOREDEMO_EMAIL;
    const password = process.env.STOREDEMO_PASSWORD;
    // After this guard TypeScript narrows both values to string, so no "!" is needed.
    if (!email || !password) throw new Error('Set STOREDEMO_EMAIL and STOREDEMO_PASSWORD.');

    // Relative URLs resolve against baseURL from playwright.config.ts.
    await page.goto('/');
    await page.goto('/login');

    await page.getByLabel(/email/i).fill(email);
    await page.getByLabel(/password/i).fill(password);
    await page.getByRole('button', { name: 'Sign in' }).click();

    // Web-first assertion: retries until the status message appears or the timeout expires.
    await expect(page.getByRole('status')).toHaveText('Logged in successfully');
  });
});

Notice how it uses semantic locators like getByLabel and getByRole, avoids fixed waits, and keeps the test focused on the final outcome.

Playwright config

// Loads STOREDEMO_EMAIL and STOREDEMO_PASSWORD from .env (requires: npm install -D dotenv).
import 'dotenv/config';
import { defineConfig, devices } from '@playwright/test';

export default defineConfig({
  testDir: './tests',
  fullyParallel: true,
  forbidOnly: !!process.env.CI,
  retries: process.env.CI ? 2 : 0,
  workers: process.env.CI ? 1 : undefined,
  reporter: 'html',
  use: {
    baseURL: 'https://storedemo.testdino.com',
    trace: 'on-first-retry',
  },
  projects: [
    {
      name: 'chromium',
      use: { ...devices['Desktop Chrome'] },
    },
  ],
});

This sets the base URL, records a trace on the first retry, and loads environment variables from a .env file through the dotenv package.

Set test credentials

Your test uses environment variables for login. You can set them in two ways.

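Option 1: Terminal (for a quick local run)

In PowerShell:

$env:STOREDEMO_EMAIL="your-email"
$env:STOREDEMO_PASSWORD="your-password"
npx playwright test --headed

In bash or zsh, use export STOREDEMO_EMAIL="your-email" and export STOREDEMO_PASSWORD="your-password" instead.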

Option 2: .env file (recommended)

STOREDEMO_EMAIL=your-email

STOREDEMO_PASSWORD=your-password

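This keeps credentials consistent across local runs. Add .env to .gitignore so credentials never reach version control; in CI, set the same variables from your pipeline's secret store.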
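If several specs need credentials, the inline guard from the generated test can move into one shared helper so every spec fails fast with the same message. Below is a minimal sketch; requireEnv and the tests/helpers/env.ts location are hypothetical names for illustration, not part of Playwright. The environment object is passed in explicitly, which also makes the helper easy to check without touching real variables.

```typescript
// Hypothetical shared helper (for example, exported from tests/helpers/env.ts).
// Returns the value of a required variable, or throws a message that says how to fix it.
function requireEnv(env: Record<string, string | undefined>, name: string): string {
  const value = env[name];
  // Treat unset and blank values the same way: both would make the login step fail later.
  if (value === undefined || value.trim() === "") {
    throw new Error(`Missing ${name}. Set it in your shell, in .env, or as a CI secret.`);
  }
  return value;
}

// Usage inside a spec (process.env is Node's environment object):
// const email = requireEnv(process.env, "STOREDEMO_EMAIL");
// const password = requireEnv(process.env, "STOREDEMO_PASSWORD");
```

Failing with the variable's name in the message is the main point: a missing secret in CI then shows up as a clear setup error instead of a confusing locator timeout on the login form.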

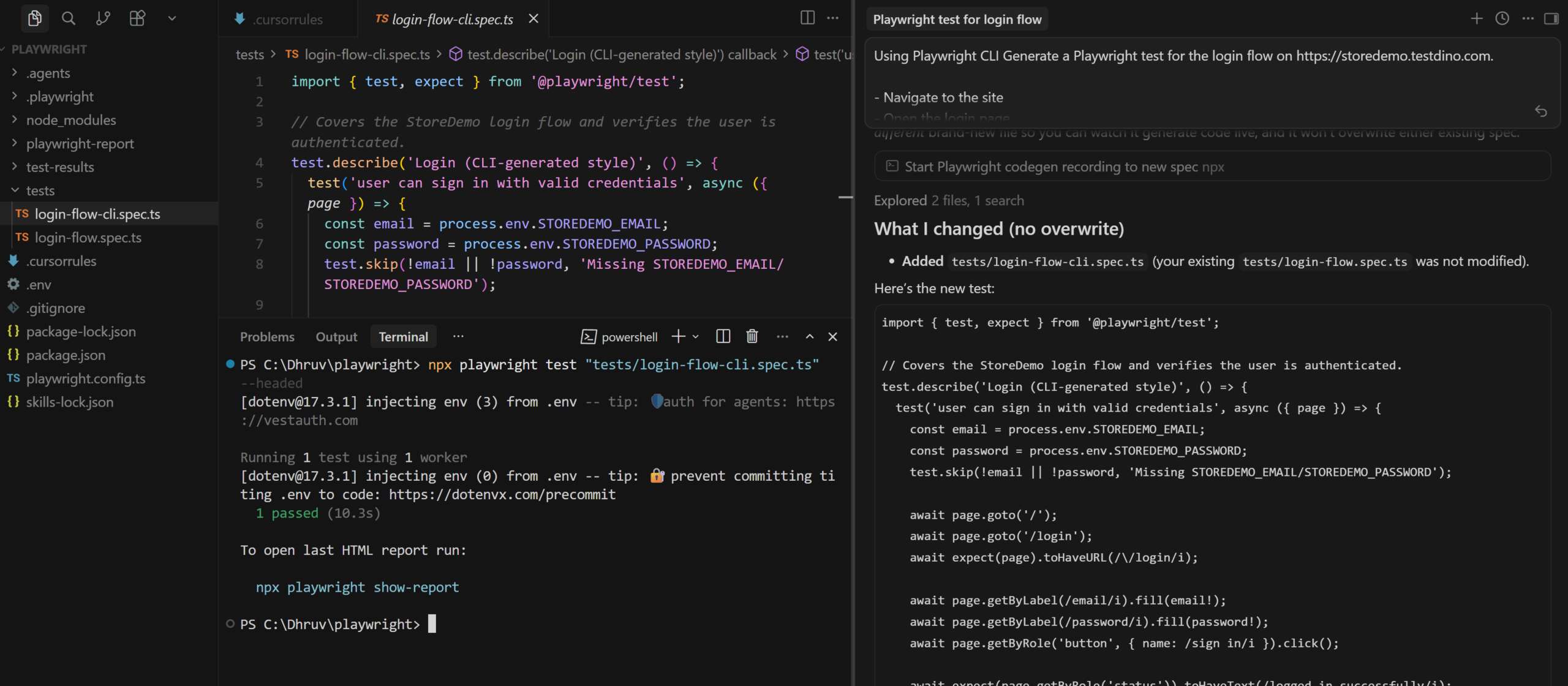

Example 2: Generate tests using Playwright CLI (the token-efficient way)

Prerequisite: make sure the Playwright CLI and its skill are installed, following the steps in the "Use Playwright CLI for batch test generation" section.

Here is a prompt that works well with the setup from the previous steps:

Ensure you are following

Using Playwright CLI, generate a Playwright test for the login flow on https://storedemo.testdino.com.

- Navigate to the site

- Open the login page

- Sign in with valid credentials

- Verify the user is logged in

Use getByRole or getByTestId locators.

This works because the flow is clear and Cursor applies your .cursorrules, Skills, and existing project structure automatically.

What to check before committing

Before merging AI-generated tests, run through this quick checklist:

- Run the test locally with npx playwright test tests/auth/login-flow.spec.ts --headed to verify it passes
- Check locators: are they semantic (getByRole, getByTestId) or fragile (CSS class names)?
- Check for hardcoded data: test data should come from fixtures, not inline strings
- Check assertions: does the test assert the actual outcome, or just that "something loaded"?
- Check independence: can this test run in isolation without depending on other tests?
- Run in CI with tracing enabled so failures come with evidence: npx playwright test --trace on

Treat AI-generated tests as a strong first draft. Review them the same way you would review code from a junior developer who writes fast but sometimes misses edge cases.
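Some of these checks can run automatically before a human review. Below is a minimal sketch, assuming you pass in a spec file's source as a string; findFragilePatterns and its regular expressions are hypothetical heuristics, not a Playwright feature, and they will miss cases such as selectors built from variables.

```typescript
// One finding per flagged line in a spec file.
interface Finding {
  line: number; // 1-based line number
  rule: string;
  text: string;
}

// Heuristic rules mirroring the checklist and the .cursorrules file above.
const rules: Array<{ rule: string; pattern: RegExp }> = [
  // Fixed delays instead of auto-waiting.
  { rule: "fixed wait", pattern: /\bwaitForTimeout\s*\(/ },
  // locator('div.some-class'), locator('//div'), or locator('xpath=...').
  { rule: "CSS class or XPath locator", pattern: /\blocator\(\s*['"`](?:[^'"`]*\.[A-Za-z_-]|\/\/|xpath=)/ },
  // .fill('literal') suggests inline test data instead of fixtures or env vars.
  { rule: "inline test data", pattern: /\.fill\(\s*['"`]/ },
];

function findFragilePatterns(source: string): Finding[] {
  const findings: Finding[] = [];
  source.split(/\r?\n/).forEach((text, index) => {
    for (const { rule, pattern } of rules) {
      if (pattern.test(text)) {
        findings.push({ line: index + 1, rule, text: text.trim() });
      }
    }
  });
  return findings;
}

const sample = [
  "await page.locator('div.some-class').click();",
  "await page.waitForTimeout(2000);",
  "await page.getByRole('button', { name: 'Sign in' }).click();",
  "await page.getByLabel(/email/i).fill('user@example.com');",
].join("\n");

for (const f of findFragilePatterns(sample)) {
  console.log(`line ${f.line}: ${f.rule} -> ${f.text}`);
}
```

In a real project you would read each spec file with Node's fs module and pass its contents in. Treat a hit as a reason to look closer, not an automatic failure.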

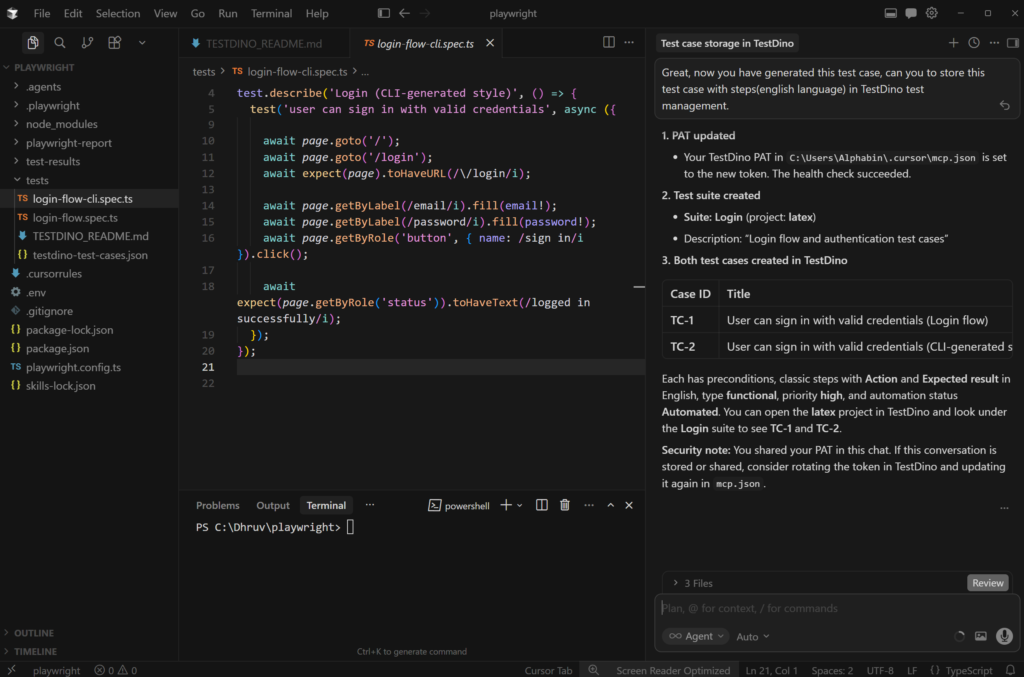

Store tests and run CI reports with TestDino

Generating tests is only half the workflow. Once your suite grows past a handful of specs, you need centralized reporting, failure tracking, and visibility into what broke and why. This is where TestDino fits in.

TestDino is a Playwright-focused test intelligence platform that builds reports and analytics from standard Playwright test output. It provides centralized dashboards, flaky test tracking, and GitHub PR integration, with no custom framework or code refactoring required.

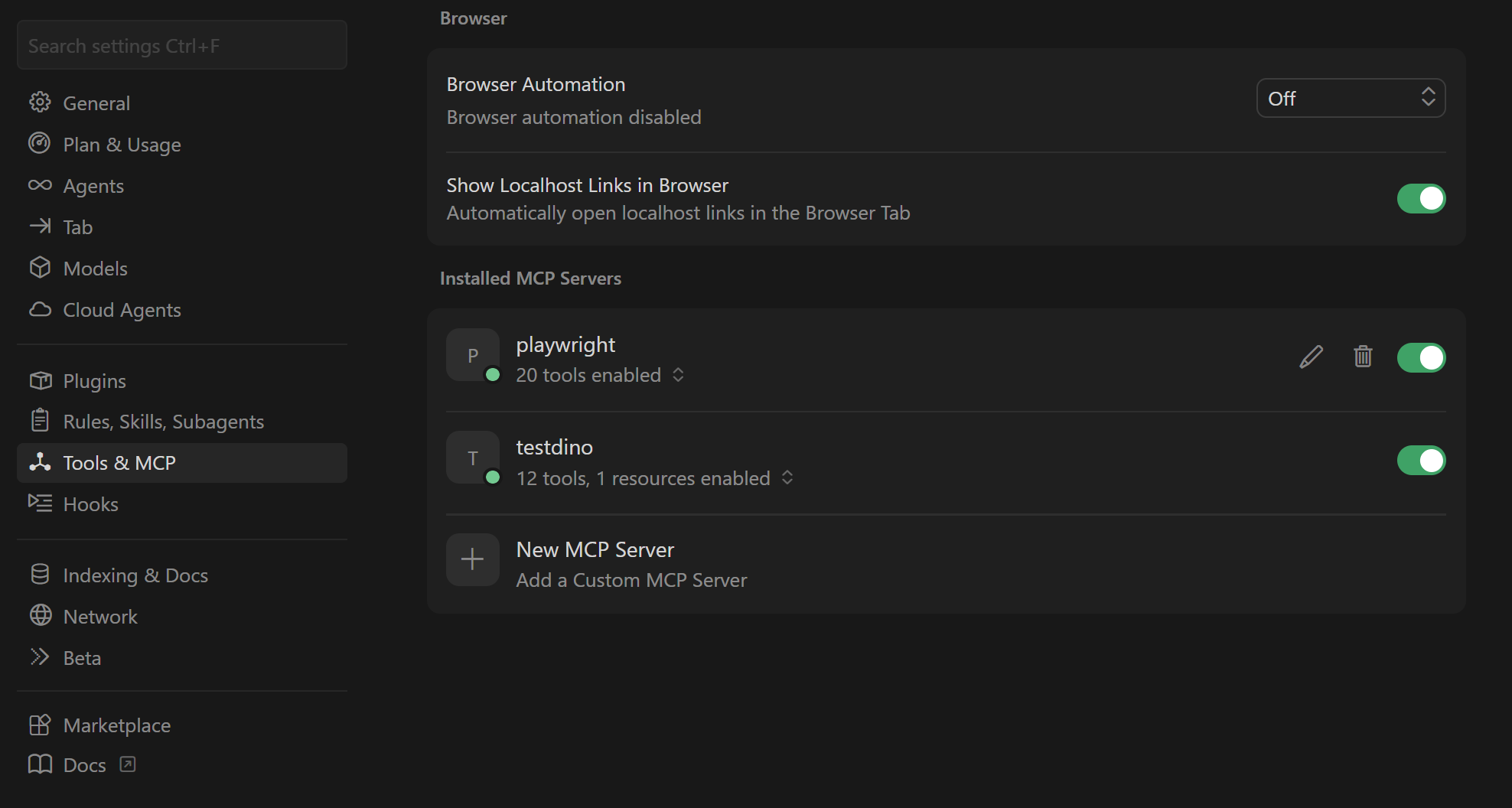

TestDino also has its own MCP server, which lets IDE agents automate tasks in TestDino directly.

Install TestDino MCP in Cursor

Generate a Personal Access Token (PAT) from your TestDino account. Go to User Settings > Personal Access Tokens and create one. This PAT gives access to all organizations and projects you have permissions for.

Open or create your MCP config file:

- macOS/Linux: ~/.cursor/mcp.json
- Windows: %APPDATA%\Cursor\mcp.json
- Project-specific: .cursor/mcp.json in your project root

Add the TestDino server:

{
  "mcpServers": {
    "TestDino": {
      "command": "npx",
      "args": ["-y", "testdino-mcp"],
      "env": {
        "TESTDINO_PAT": "your-token-here"
      }
    }
  }
}
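If your .cursor/mcp.json already contains the Playwright server from earlier, the TestDino entry goes inside the same mcpServers object rather than replacing the file. Below is a minimal sketch of that merge, assuming the config shape shown in this guide; addMcpServer is a hypothetical helper, not a Cursor or TestDino tool.

```typescript
// Shape of a Cursor MCP server entry as used in this guide.
interface McpServer {
  command: string;
  args: string[];
  env?: Record<string, string>;
}

interface McpConfig {
  mcpServers: Record<string, McpServer>;
}

// Adds (or replaces) one server entry while keeping every other server intact.
function addMcpServer(configJson: string, name: string, server: McpServer): string {
  // Treat an empty file as an empty config.
  const parsed: Partial<McpConfig> = configJson.trim() === "" ? {} : JSON.parse(configJson);
  const merged = {
    ...parsed, // keep any other top-level keys untouched
    mcpServers: { ...(parsed.mcpServers ?? {}), [name]: server },
  };
  return JSON.stringify(merged, null, 2) + "\n";
}

// The Playwright entry from the manual setup step.
const existing = JSON.stringify({
  mcpServers: { playwright: { command: "npx", args: ["@playwright/mcp@latest"] } },
});

const updated = addMcpServer(existing, "TestDino", {
  command: "npx",
  args: ["-y", "testdino-mcp"],
  env: { TESTDINO_PAT: "your-token-here" },
});
console.log(updated);
```

In practice you would read the file with Node's fs module, pass its contents in, and write the result back. If the project-level file is committed to version control, keep the real token out of it.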

Step 1: Add test cases to TestDino test management

TestDino's Test Case Management tab is a standalone workspace where teams create, organize, and maintain all their manual and automated test cases within a project. As you generate tests with Cursor, you can track them inside TestDino to keep your coverage organized.

You can now use this prompt to store your test in TestDino:

Great, now that you have generated this test case, can you store it with its steps (in plain English) in TestDino test management?

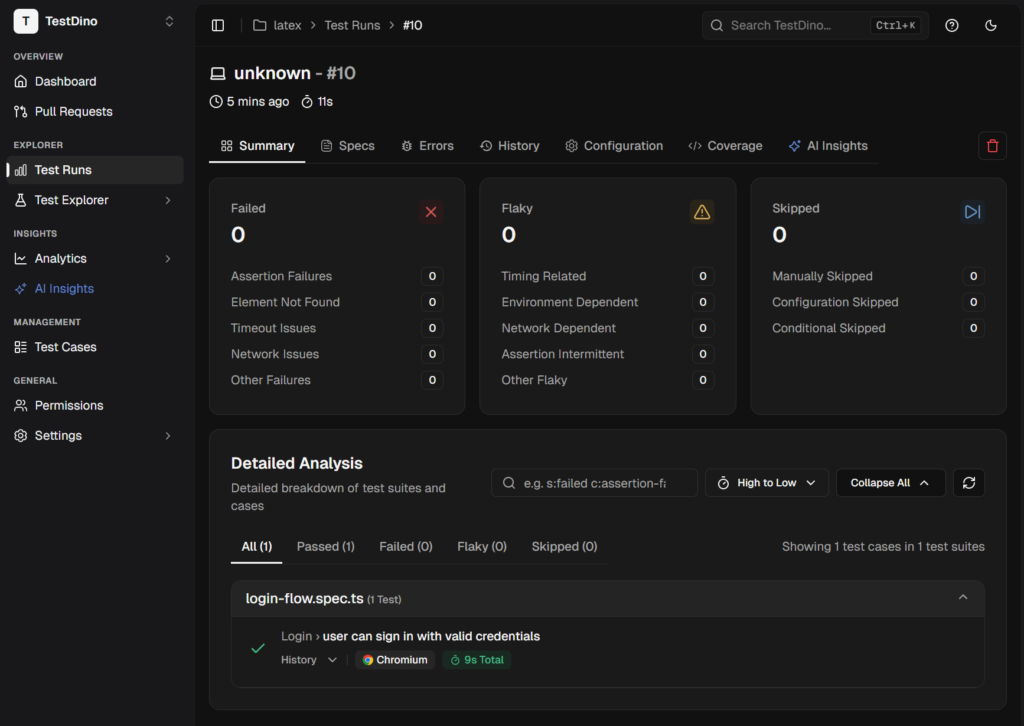

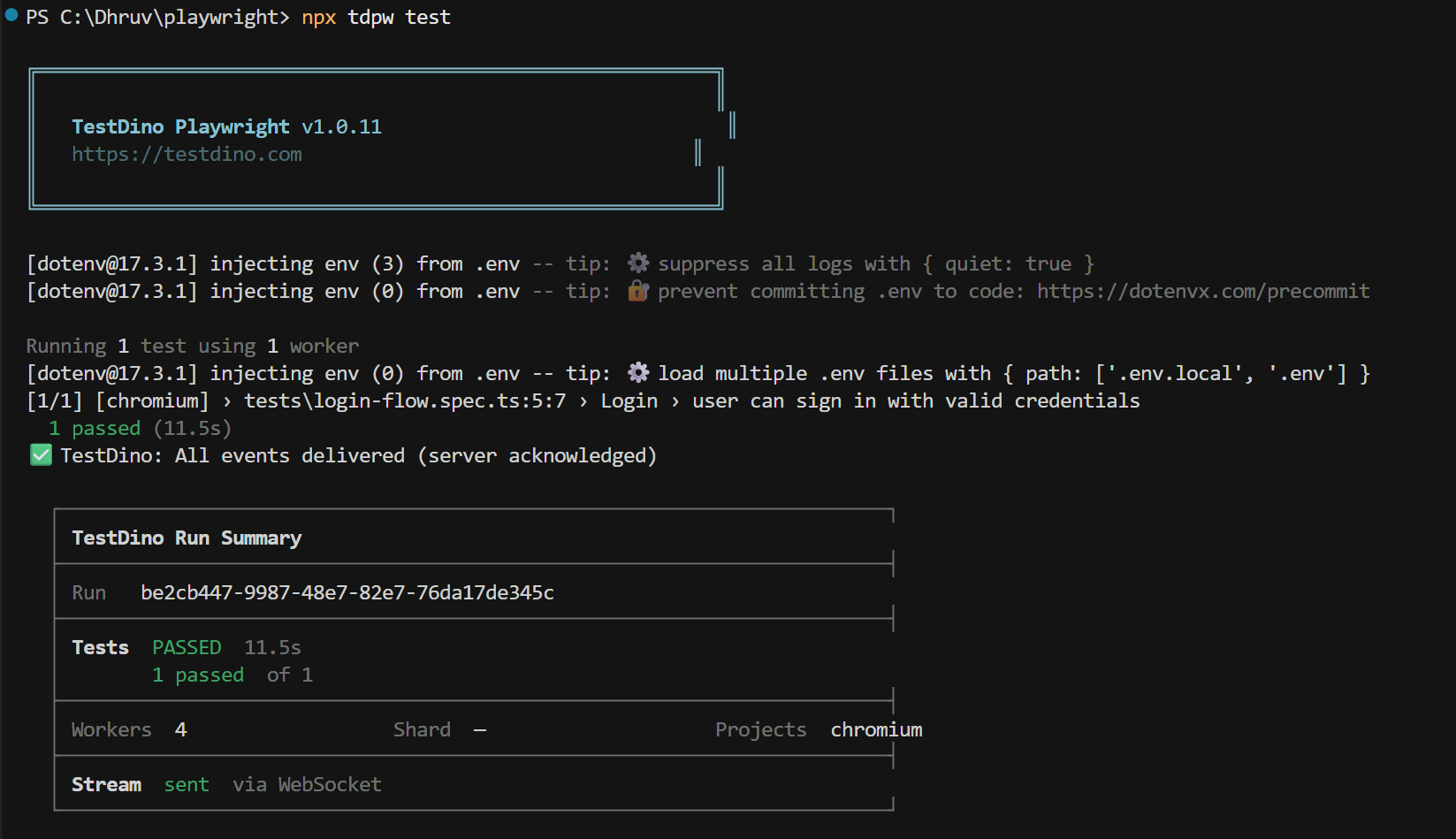

Step 2: Run with npx tdpw test

The fastest way to get results into TestDino is the tdpw CLI. Install it once:

npm install @testdino/playwright

Then run:

npx tdpw test --token "your_token_here"

To avoid passing the token on every run, store it in your environment.

Add token to your .env file:

TESTDINO_TOKEN="your_token_here"

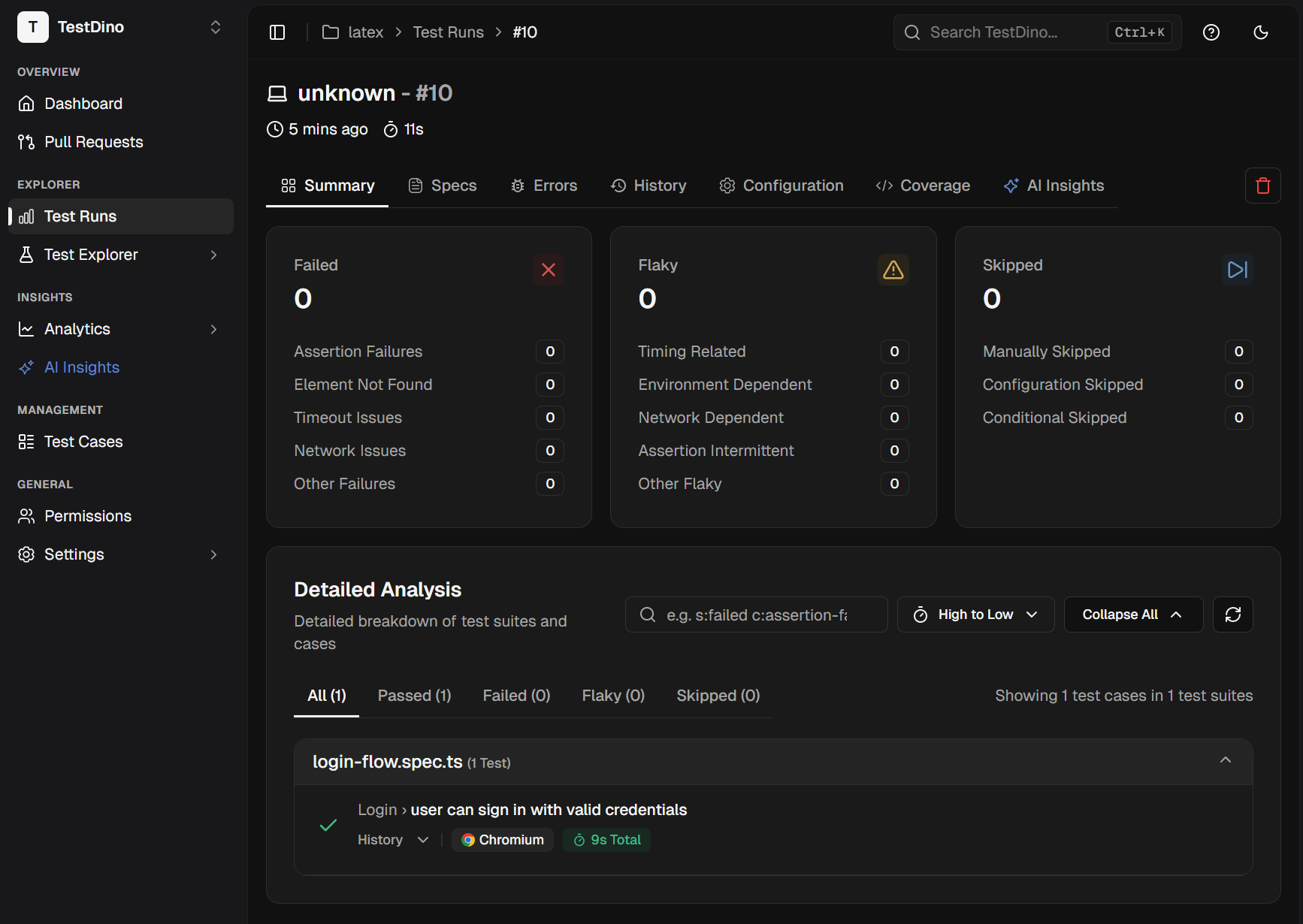

Now, when you run npx tdpw test, you get results in two places:

1. Terminal report (instant feedback): You see pass or fail status, execution time, and run summary directly in your terminal.

2. TestDino dashboard (full analysis): Results are sent to the TestDino web app with detailed insights, history, and failure classification.

Every Playwright CLI flag works the same: --project, --grep, --workers, --shard, --headed. Nothing changes except results now stream to the TestDino dashboard in real time via WebSocket.

For CI environments, add the upload step after your test run:

- name: Run Playwright Tests
  run: npx playwright test

- name: Upload to TestDino
  if: always()
  run: npx tdpw upload ./playwright-report --token="${{ secrets.TESTDINO_TOKEN }}" --upload-html

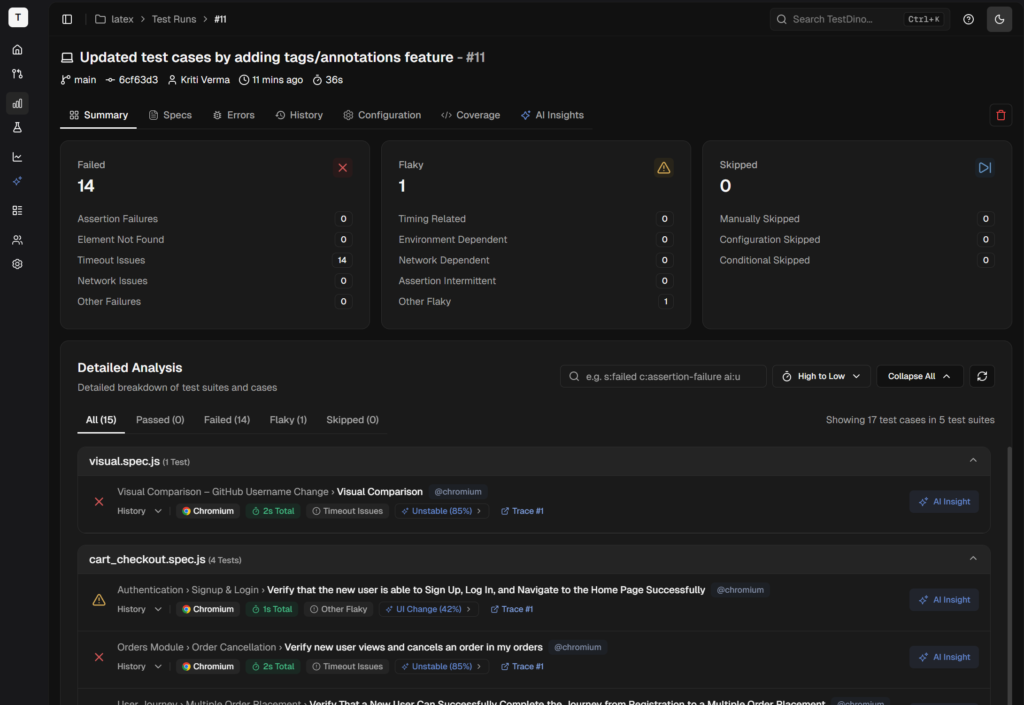

Real-time streaming, evidence panel, suite history

Results appear as tests finish, not after the entire suite completes. AI categorizes every failure as Bug, Flaky, or UI Change with confidence scores. Screenshots, traces, and videos are all accessible from the same dashboard.

Your team sees exactly what broke, when it started breaking, and whether it is a one-time failure or a recurring pattern. That context is what turns raw test output into actionable information.

Fix flaky tests with TestDino MCP and Cursor

This is where the full workflow comes together. Flaky tests are one of the biggest productivity killers in test automation. A test that passes sometimes and fails other times wastes hours of debugging because the failure is not consistent enough to reproduce easily.

Playwright 1.56 introduced test agents, including the Healer agent that automatically repairs failing tests. But the Healer has a blind spot: it only sees the current UI state. It cannot tell if a test has been flaky for two weeks or if this is a brand-new regression. That is where TestDino MCP fills the gap.

1. Install TestDino MCP in Cursor (JSON config)

If you completed the "Store tests and run CI reports with TestDino" section above, TestDino MCP is already set up. Otherwise, refer to our docs on MCP.

2. Ask Cursor agent to find flaky tests from TestDino

TestDino MCP exposes 12 tools that let you query your test data using natural language directly inside Cursor. You can ask things like:

- "What are the failure patterns for the checkout flow test?"
- "Is this test flaky? Show me the last 10 runs."
- "Debug 'Verify User Can Complete Checkout' from testdino reports"
- "Which tests failed on webkit but passed on chromium this week?"

3. Ask the Cursor agent to fix the flaky test using TestDino data

You can ask the Cursor agent to "fix abc.spec.ts using error context from TestDino, leveraging TestDino MCP to fetch data and apply fixes from the report."

Real workflow: flaky test detected, ask TestDino MCP, feed patterns to Healer, Healer fixes and reruns, test passes

Here is how the full loop works in practice:

- CI reports a failing test. TestDino classifies it as "Flaky" with 85% confidence.

- In Cursor, ask TestDino MCP: "Why is the checkout-flow test failing? Show me the failure patterns from the last 20 runs."

- TestDino MCP returns: "Fails on webkit 6 out of 20 runs. Error: element not visible. Timing issue on the payment form animation."

- Feed this context to the Healer agent: "Fix the checkout-flow test. It is flaky on webkit due to a timing issue with the payment form animation. Use Playwright MCP tools to inspect the page and fix the wait strategy."

- The Healer runs the test in debug mode, identifies the animation causing the issue, adds a proper wait condition, and re-runs until the test passes.

- Review the diff. Commit.
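The "fails on webkit 6 out of 20 runs" signal in this loop is a per-browser failure rate over recent history. Below is a minimal sketch of that calculation, assuming a hypothetical run-record shape. It is not how TestDino computes or classifies flakiness; treating a mix of passes and failures in the same window as "flaky" is only a simple heuristic.

```typescript
// Hypothetical shape of one historical test result.
interface RunResult {
  browser: string; // Playwright project name, e.g. "chromium" or "webkit"
  status: "passed" | "failed";
}

interface BrowserStats {
  runs: number;
  failures: number;
  failureRate: number; // failures / runs
  looksFlaky: boolean; // both passes and failures in the window
}

function statsByBrowser(results: RunResult[]): Record<string, BrowserStats> {
  const stats: Record<string, BrowserStats> = {};
  for (const { browser, status } of results) {
    const s = (stats[browser] ??= { runs: 0, failures: 0, failureRate: 0, looksFlaky: false });
    s.runs += 1;
    if (status === "failed") s.failures += 1;
  }
  for (const s of Object.values(stats)) {
    s.failureRate = s.failures / s.runs;
    // Always failing suggests a real regression; sometimes failing suggests flakiness.
    s.looksFlaky = s.failures > 0 && s.failures < s.runs;
  }
  return stats;
}

// Sample history: 20 webkit runs with 6 failures, 20 chromium runs that all pass.
const history: RunResult[] = [
  ...Array.from({ length: 20 }, (_, i): RunResult => ({
    browser: "webkit",
    status: i % 10 < 3 ? "failed" : "passed",
  })),
  ...Array.from({ length: 20 }, (): RunResult => ({ browser: "chromium", status: "passed" })),
];
console.log(statsByBrowser(history));
```

On this sample, webkit shows a 30% failure rate and is marked as likely flaky, while chromium is clean. That browser-specific pattern is the kind of context the Healer cannot infer from the current UI alone.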

Why this matters

Without TestDino's historical failure data, the Healer only sees the current UI. It can patch a selector or add a wait, but it cannot tell if the test has been intermittently failing for weeks. It does not know if the failure is browser-specific. It does not have access to the stack traces from previous runs.

TestDino MCP gives the Healer the "memory" it needs to make informed decisions instead of applying blind patches. The result is fixes that actually stick, not band-aids that pass once and break again tomorrow.

Cursor vs other AI agents (2026)

Cursor is not the only option for AI-assisted Playwright test writing. Here is how it stacks up against the main alternatives in 2026.

| Feature | Cursor | Claude Code | GitHub Copilot | Windsurf |
|---|---|---|---|---|
| MCP support | Yes (plugin system) | Yes (deep, per-agent) | Yes (via VS Code) | Yes (marketplace) |
| Multi-model | Yes (OpenAI, Anthropic, Gemini, Cursor) | Anthropic only | OpenAI primarily | Limited |
| .rules files | .cursorrules | CLAUDE.md | .github/copilot-instructions.md | Cascade rules |
| Tab completion | Yes (Supermaven) | No | Yes | Yes |
| Playwright Skills | Supported | Supported | Supported | Supported |
| Visual diffs | Inline in editor | Terminal-based | Inline in VS Code | Inline in editor |
| Best for | Interactive IDE workflow | Terminal-heavy, deep refactors | Teams already on VS Code | Teams wanting guided flows |

The main advantage of Cursor for Playwright testing is the combination of MCP, rules, multi-model switching, and visual diff review. You can generate a test, switch models to verify it, run it through MCP to validate against the real browser, and review the diff inline, all without leaving the editor.

Claude Code is stronger for large-scale refactors and has deeper MCP integration. GitHub Copilot works if your team is already invested in the VS Code ecosystem and prefers not to switch editors. Windsurf offers a more guided experience but has a smaller community.

For teams focused specifically on Playwright test generation at scale, Cursor plus TestDino gives you the tightest loop: generate, run, report, fix, repeat.

FAQs
