Best 8 TestMu AI Alternatives for Playwright Teams

TestMu AI bundles analytics with a cloud execution grid. For focused Playwright test intelligence without paying for infrastructure you don't use, start with TestDino.

TestMu AI is a cloud testing platform that provides browser and device infrastructure for running automated tests. Test analytics, flaky test detection, and session recordings are included with the platform.

The challenge for Playwright teams is that the analytics layer is built around the execution grid. If your tests already run on GitHub Actions, GitLab CI, or Azure DevOps, you are paying for infrastructure you do not use to access reporting features that are secondary to the platform's core value.

Teams looking for Playwright-specific failure intelligence, test management, and CI/CD optimization are exploring TestMu AI alternatives that treat test reporting as the primary product, not an add-on to a cloud grid.

Here are the 8 best TestMu AI alternatives to consider in 2026.

Best TestMu AI Alternatives: How to Choose the Right Tool

We carefully researched and evaluated each platform to compile this list of TestMu AI alternatives, assessing key factors such as ease of onboarding, depth of test analytics, support for Playwright automation, seamless CI/CD integration, and AI-powered debugging features, including flaky test detection and failure grouping.

We also considered scalability for enterprise teams, pricing transparency, and compatibility with multiple frameworks and browsers, so that these handpicked TestMu AI competitors help CTOs, QA leads, product managers, and engineering directors choose the right solution for smarter, more efficient testing.

How to Compare TestMu AI Alternatives

Here is a quick comparison of the top alternatives to TestMu AI that can help you identify your preferred test reporting tool:

| | TestDino | TestMu AI | BrowserStack Test Reporting | Datadog Test Optimization | ReportPortal |
|---|---|---|---|---|---|
| Pricing (starts at) | $49/month | $159/month (billed annually) | Free / $299/month (Pro) | Per-committer (usage-based) | $599/month (SaaS) |
| Best for | Playwright test intelligence & management | Cross-browser cloud test execution | Multi-framework test analytics | Teams monitoring CI inside Datadog | Self-hosted open-source reporting |
| Framework support | Playwright | Playwright & More | Multi-framework (via SDK) | Playwright & More | Playwright & More |


Best TestMu AI Competitors for Playwright Test Reporting

Here are the 8 best alternatives to TestMu AI for teams that want focused test reporting:

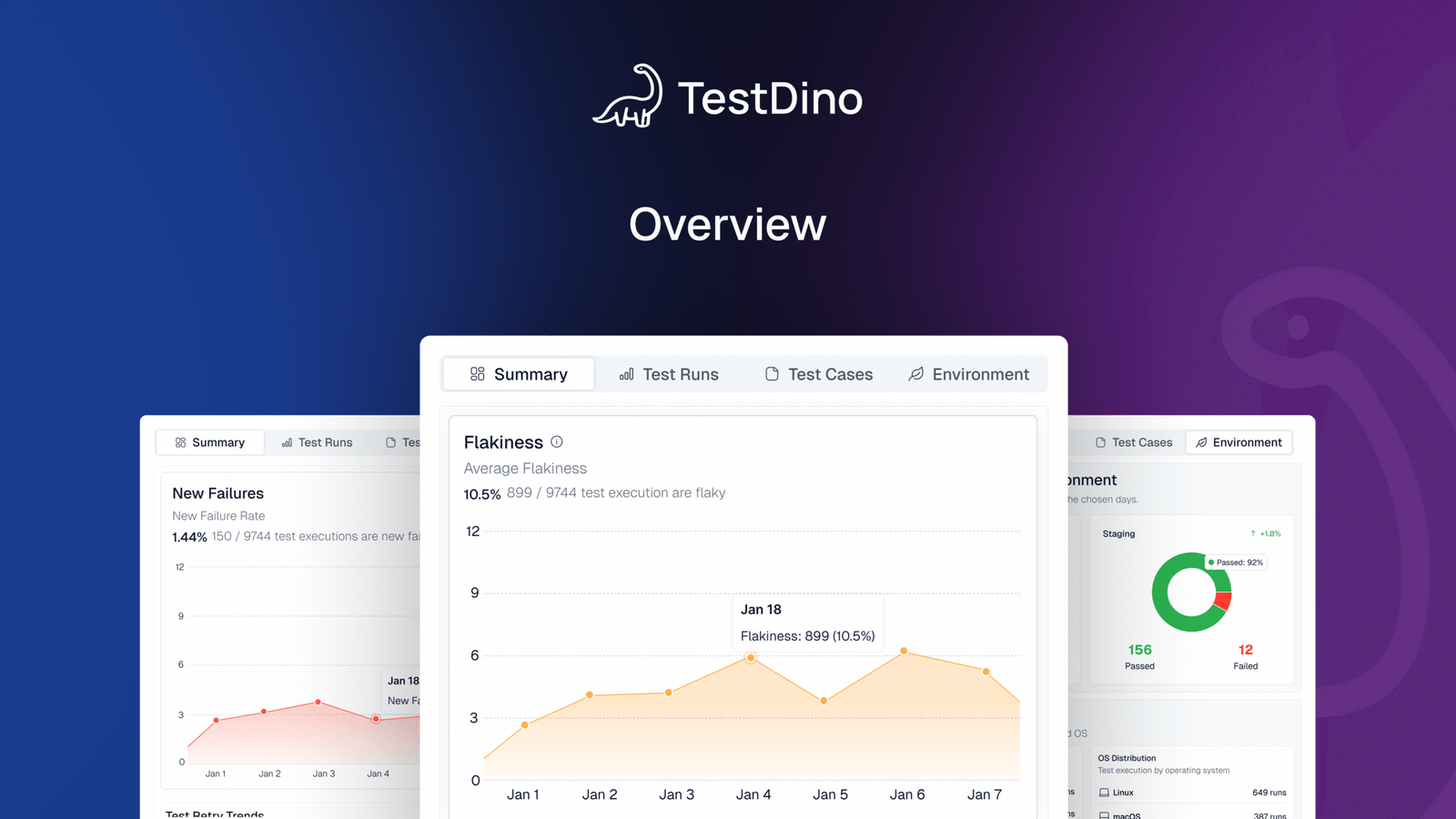

1. TestDino

$49 /month

Best for:

Playwright-first teams that need test reporting, test management, and CI/CD optimization in one platform, without stitching multiple tools together.

Platform Type:

Test reporting, dashboards, test management, and CI observability platform for Playwright

Integrations with:

GitHub Actions, GitLab CI, Azure DevOps, TeamCity, Jira, Linear, Asana, monday, Slack

Key Features:

- Test management and automated reporting in one place
- AI failure classification into 4 categories
- Built-in trace viewer with DOM snapshots and network logs
- Error grouping by message and stack trace
- GitHub CI Checks as merge quality gates
- Rerun only failed tests to cut CI pipeline time
- MCP Server for AI agent queries from your IDE
- Flaky test detection across run history
- AI summaries posted to GitHub commits
- Real-time results streaming via WebSocket
- Code coverage per file breakdown

Pros

- Playwright-native with under 10-minute setup
- Test management and automated reporting on the same platform
- Broad CI/CD support: GitHub Actions, GitLab CI, Azure DevOps, TeamCity
- AI summaries posted to GitHub commits, GitLab MRs, and Slack
- 1-click bug filing into Jira, Linear, Asana, or monday
- Affordable at $39/month billed annually

Cons

- Purpose-built for Playwright (multi-framework support on the roadmap)

First Hand Experience

TestMu AI packages test analytics alongside a cloud execution grid. Teams running Playwright on GitHub Actions or GitLab CI often evaluate it for the reporting features, but find they are paying for execution infrastructure they do not use. The analytics show pass/fail summaries, session recordings, and flaky test flags, but the deeper intelligence that Playwright teams need, like failure classification and error grouping, is not the platform's focus.

TestDino is built entirely around Playwright test intelligence. There is no execution grid. Instead, results flow in from whatever CI you already use, and the platform focuses on what happens after the run: AI failure classification, error grouping, flaky detection with root cause categories, and test management.

Test management and automated reporting live on the same platform. Manual test cases sit in suites up to 6 levels deep with ownership, custom fields, and version history. The Test Explorer shows both manual and automated tests side by side, sortable by flaky rate, tags, and coverage status.

Debugging That Saves You from Re-running Locally

Each failed test in TestDino comes with screenshots, video, browser console logs, and a trace you can step through action by action. All of this is available right after the CI run finishes.

AI Insights classifies each failure as Actual Bug, UI Change, Unstable Test, or Miscellaneous. Bug filing is 1-click into Jira, Linear, Asana, or monday, pre-filled with error details, stack trace, failure history, and links to the run and CI job.

CI/CD Speed and Merge Safety

The Rerun failed tests feature re-executes only the failures, not the full suite. It works across sharded runs and different CI runners.
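To make the idea of rerunning only failures across shards concrete, here is a minimal sketch. It assumes a simplified, hypothetical result shape (`ShardResult`) and a hypothetical helper (`collectFailedTests`); it is not TestDino's implementation or Playwright's report schema, only an illustration of merging shard results into one rerun list.

```typescript
// Hypothetical, simplified result shape for illustration only.
// It is not TestDino's or Playwright's actual report schema.
type Status = "passed" | "failed" | "skipped";

interface ShardResult {
  shard: string; // e.g. "1/2"
  tests: { id: string; status: Status }[];
}

// Collect the ids of tests that failed on any shard, de-duplicated and sorted,
// so a follow-up CI job can re-execute only those tests.
function collectFailedTests(shards: ShardResult[]): string[] {
  const failed: Record<string, boolean> = {};
  for (const shard of shards) {
    for (const test of shard.tests) {
      if (test.status === "failed") {
        failed[test.id] = true;
      }
    }
  }
  return Object.keys(failed).sort();
}

const exampleShards: ShardResult[] = [
  {
    shard: "1/2",
    tests: [
      { id: "login.spec.ts > signs in", status: "passed" },
      { id: "cart.spec.ts > adds item", status: "failed" },
    ],
  },
  {
    shard: "2/2",
    tests: [
      { id: "checkout.spec.ts > pays", status: "failed" },
      { id: "profile.spec.ts > edits name", status: "skipped" },
    ],
  },
];

console.log(collectFailedTests(exampleShards));
```

For reruns on a single machine, Playwright's own CLI also offers a `--last-failed` option; the sketch above only illustrates the cross-shard merge step that a reporting platform would need to handle.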

GitHub CI Checks adds quality gates to your PRs. Set a minimum pass rate, mark critical tags as mandatory, and configure different rules per environment. AI-generated summaries are posted to GitHub commits and GitLab merge requests with pass/fail/flaky counts.
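The gate logic described above (a minimum pass rate, mandatory tags, and per-environment rules) can be sketched roughly as follows. All names here (`RunSummary`, `GateRule`, `evaluateGate`, `rulesByEnv`) and the threshold values are assumptions made for illustration, not TestDino's actual configuration format.

```typescript
// Hypothetical shapes for illustration; not a real tool's configuration schema.
interface RunSummary {
  passed: number;
  failed: number;
  failedTags: string[]; // tags attached to any failed test
}

interface GateRule {
  minPassRate: number; // fraction between 0 and 1
  mandatoryTags: string[]; // a failure carrying one of these tags blocks the merge
}

interface GateResult {
  ok: boolean;
  reasons: string[];
}

function evaluateGate(run: RunSummary, rule: GateRule): GateResult {
  const reasons: string[] = [];
  const total = run.passed + run.failed;
  // Assumption: an empty run counts as a 0% pass rate rather than a pass.
  const passRate = total === 0 ? 0 : run.passed / total;

  if (passRate < rule.minPassRate) {
    reasons.push(
      "pass rate " + (passRate * 100).toFixed(1) + "% is below " +
        (rule.minPassRate * 100).toFixed(1) + "%"
    );
  }
  for (const tag of rule.mandatoryTags) {
    if (run.failedTags.indexOf(tag) !== -1) {
      reasons.push("mandatory tag " + tag + " has failures");
    }
  }
  return { ok: reasons.length === 0, reasons };
}

// Different rules per environment (example values only).
const rulesByEnv: { staging: GateRule; production: GateRule } = {
  staging: { minPassRate: 0.9, mandatoryTags: [] },
  production: { minPassRate: 1.0, mandatoryTags: ["@critical"] },
};

const exampleRun: RunSummary = { passed: 95, failed: 5, failedTags: ["@critical"] };
console.log(evaluateGate(exampleRun, rulesByEnv.staging));
console.log(evaluateGate(exampleRun, rulesByEnv.production));
```

Returning the list of reasons alongside the boolean keeps the check explainable, which matters when the result is surfaced on a pull request.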

Flaky Test Detection That Tells You Why

Flaky test detection classifies unstable tests by root cause: timing-related, environment-dependent, network-dependent, or assertion-intermittent. Each test gets a stability percentage, and you can compare flaky rates across environments to spot infrastructure problems.
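As a rough illustration of a per-test stability percentage and a per-environment flaky rate, consider the sketch below. The `RunRecord` shape and both function names are hypothetical, and the definitions used (stability as the share of clean passes, flaky as passing only after a retry) are assumptions; the source does not specify how TestDino computes these numbers, and root cause categories are not modeled here.

```typescript
// Hypothetical run-history record for illustration only.
// "flaky" is assumed to mean the test failed first and passed on retry.
type Outcome = "passed" | "failed" | "flaky";

interface RunRecord {
  testId: string;
  environment: string;
  outcome: Outcome;
}

// Stability as the percentage of runs that passed cleanly (assumed definition).
// Returns null when the test has no recorded runs.
function stabilityPercent(history: RunRecord[], testId: string): number | null {
  const runs = history.filter((r) => r.testId === testId);
  if (runs.length === 0) {
    return null;
  }
  const clean = runs.filter((r) => r.outcome === "passed").length;
  return (clean / runs.length) * 100;
}

// Flaky rate per environment, as a fraction of that environment's runs.
function flakyRateByEnvironment(
  history: RunRecord[],
  testId: string
): Record<string, number> {
  const counts: Record<string, { flaky: number; total: number }> = {};
  for (const r of history) {
    if (r.testId !== testId) {
      continue;
    }
    const c = counts[r.environment] || (counts[r.environment] = { flaky: 0, total: 0 });
    c.total += 1;
    if (r.outcome === "flaky") {
      c.flaky += 1;
    }
  }
  const rates: Record<string, number> = {};
  for (const env of Object.keys(counts)) {
    const c = counts[env];
    if (c) {
      rates[env] = c.flaky / c.total;
    }
  }
  return rates;
}

const exampleHistory: RunRecord[] = [
  { testId: "login", environment: "staging", outcome: "passed" },
  { testId: "login", environment: "staging", outcome: "flaky" },
  { testId: "login", environment: "production", outcome: "passed" },
  { testId: "login", environment: "production", outcome: "passed" },
];

console.log(stabilityPercent(exampleHistory, "login"));
console.log(flakyRateByEnvironment(exampleHistory, "login"));
```

A test that is flaky in one environment but clean in another, as in this example data, points toward infrastructure rather than the test itself.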

Real-Time Streaming and Scheduled Reports

Results appear on the dashboard as each test completes via real-time streaming, not after the full suite finishes. Automated PDF reports deliver test health summaries on daily, weekly, or monthly schedules. Slack notifications send run summaries filtered by environment and branch.

MCP Server for AI-Assisted Workflows

The MCP Server connects your AI assistant (Cursor, Claude Code, Copilot) to your test data. List test runs, pull debugging context, perform root cause analysis, and manage manual test cases through natural language. It covers both automated debugging and test management without switching tools.

Pricing & Value

| Community | Pro Plan | Team Plan | Enterprise |
|---|---|---|---|
| Free | $39/month (billed annually) | $79/month (billed annually) | Custom |

Pricing may vary. Check the pricing page for the latest details.

Final Verdict

TestDino is a strong alternative to TestMu AI for teams that want reporting without an execution grid, thanks to its affordable pricing, faster onboarding, and native Playwright support.

Where TestMu AI bundles analytics with cloud browser infrastructure starting at $159/month, TestDino provides deeper Playwright-specific intelligence, including AI failure classification, error grouping, trace viewing, and test management at $39/month billed annually.

If your tests already run on GitHub Actions, GitLab CI, or Azure DevOps, you do not need to pay for a separate execution platform to get test analytics. TestDino works with the CI infrastructure you already have.

2. BrowserStack Test Reporting & Analytics

Best for:

Teams that want multi-framework test analytics with AI failure tagging.

Platform Type:

Test analytics platform

Integrations with:

Jira, CI/CD tools, Slack

Key Features:

- AI-based failure reason categorization
- Flaky test detection with smart tags
- Timeline debugging with consolidated logs
- Custom dashboards with widgets (Pro)
- Build verification rules for CI gates

Pros

- AI failure tagging across test frameworks
- Flaky detection with smart tags
- Works standalone or with BrowserStack execution

Cons

- Pro tier starts at $299/month
- No test case management built in
- SDK integration required per framework

First Hand Experience

BrowserStack Test Reporting provides failure categorization, flaky detection, and timeline debugging across test frameworks. It works with or without BrowserStack execution infrastructure. The Pro tier at $299/month adds custom dashboards and quality gates. Teams that need test management or Playwright-specific trace viewing may find the analytics focused on broad multi-framework coverage rather than Playwright depth. For teams weighing TestMu AI vs BrowserStack, both provide cross-browser coverage, but BrowserStack's analytics layer runs independently of its execution grid.

Pricing & Value

Free tier with 30-day retention. Pro starts at $299/month billed annually.

Final Verdict

BrowserStack Test Reporting is a capable multi-framework analytics tool. For Playwright-focused teams, the $299/month cost and SDK-per-framework setup may not match the depth of purpose-built platforms at lower price points.

3. Datadog Test Optimization

Best for:

Teams already using Datadog for system monitoring who want test run visibility in the same dashboard.

Platform Type:

CI pipeline monitoring with test analytics add-on

Integrations with:

CI/CD, Slack, Jira, PagerDuty

Key Features:

- Test run visibility inside CI pipeline views
- Flaky test detection and tracking
- Custom dashboards and alert rules
- Test execution tracing with flame graphs
- CI pipeline performance metrics

Pros

- Fits well if Datadog is already your monitoring tool
- Flaky test detection is mature
- Good CI pipeline-level visibility

Cons

- Built for system monitoring, not test reporting
- QA teams find the interface complex and broad
- Costs grow with data ingestion and retention

First Hand Experience

Datadog Test Optimization adds test analytics to an existing monitoring stack. It works best when your team already uses Datadog for infrastructure and wants test data in the same place. QA engineers navigate through system monitoring interfaces to reach test-specific insights. Teams looking for focused test reporting will need to pair it with a separate tool.

Pricing & Value

Per-committer, usage-based pricing starts at $20/month/committer. Costs are hard to predict as test artifacts and logs scale. Test spans are retained for 3 months.

Final Verdict

Datadog fits teams already using it for system monitoring. For QA-led teams looking for focused test reporting and management, purpose-built platforms offer a more direct path.

4. ReportPortal

Best for:

Teams that want self-hosted, open-source test reporting with ML-based failure pattern matching.

Platform Type:

Open-source test reporting platform

Integrations with:

Jenkins, GitHub, GitLab, Jira, Rally

Key Features:

- ML-based pattern matching for failure clustering
- Custom dashboard widgets for run data
- Multi-framework result aggregation
- Self-hosted with full data control
- Launch-level run history

Pros

- Open source with self-hosting option
- Supports many test frameworks
- Persistent history across launches

Cons

- Setup requires Docker Compose and maintenance
- SaaS starts at $599/month
- Limited Playwright-specific debugging features

First Hand Experience

ReportPortal aggregates test results from multiple frameworks and uses ML-based pattern matching to identify recurring failure clusters. The self-hosted option gives full data control. Setup requires Docker Compose, database configuration, and ongoing infrastructure maintenance. Teams looking for managed platforms with quick onboarding may find the operational overhead significant. As a free, open-source alternative to TestMu AI, self-hosted ReportPortal trades the convenience of managed hosting for full data control.

Pricing & Value

Free (open source, self-hosted). SaaS starts at $599/month for the Startup tier.

Final Verdict

ReportPortal fits teams that want open-source self-hosting with ML-based failure analysis. For teams that prefer managed platforms with Playwright-specific intelligence, simpler options exist.

5. Currents

Best for:

Teams that want to stream Playwright test runs live in the cloud.

Platform Type:

Cloud dashboard for test execution streaming

Integrations with:

GitHub, GitLab, Slack

Key Features:

- Live test run streaming during CI
- Orchestration for test sharding
- CI/CD pipeline integrations
- Trace viewer and screenshots
- Flaky test detection and quarantine

Pros

- Real-time visibility during execution
- Simple cloud-first setup
- Playwright trace viewer included

Cons

- Limited analytics depth beyond execution
- No test case management
- Usage costs scale with test volume

First Hand Experience

Currents delivers live streaming for Playwright runs, useful during active releases. Day-to-day, the focus stays on execution monitoring. Teams that require test case management, failure classification, or historical analytics may find they need additional tooling alongside Currents.

Pricing & Value

Usage-based pricing starting at $49/month. Costs rise with run frequency and the number of artifacts.

Final Verdict

Currents is a good fit for teams prioritizing real-time visibility into execution. For teams that need test management and deeper failure analysis alongside streaming, evaluate whether an execution-focused tool meets your full needs.

6. Allure TestOps

Best for:

QA teams with formal test management processes that need structured reporting workflows.

Platform Type:

Test management and reporting platform

Integrations with:

Jira, GitHub, GitLab, Jenkins

Key Features:

- Test case organization with launch history
- CI/CD adapter integrations
- Configurable dashboards via AQL queries
- Access control and permissions
- Report exports and sharing

Pros

- Established feature set for structured QA
- Works across multiple test frameworks
- Configurable dashboards and reports

Cons

- Setup and adapter configuration require effort
- Smaller teams may find the overhead heavy
- Reporting requires manual dashboard building

First Hand Experience

Allure TestOps provides a structured workspace for organizing test cases and viewing launch results. The platform works best when teams have defined QA processes and the bandwidth to set up adapters and configure dashboards. Teams looking for faster onboarding and built-in failure intelligence may find the configuration effort slows time-to-value.

Pricing & Value

Custom pricing. Targets teams that need formalized test management with governance.

Final Verdict

Allure TestOps fits teams that follow structured QA processes. For teams prioritizing fast setup and focused test analytics, lighter platforms get to value faster.

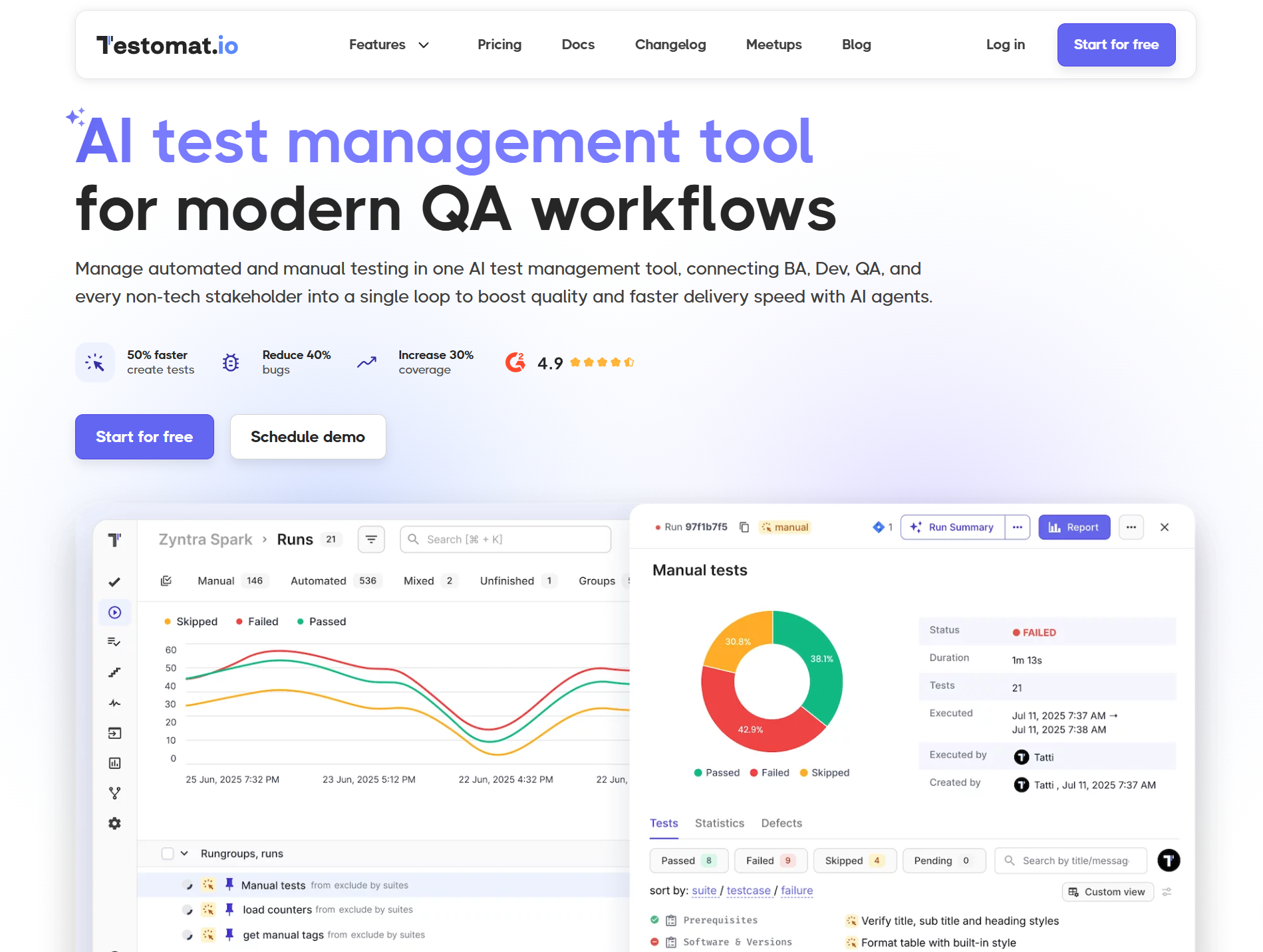

7. Testomat.io

Best for:

QA teams syncing manual and automated tests in one workspace.

Platform Type:

Cloud test management platform

Integrations with:

Jira, GitHub, GitLab, Jenkins, CI/CD pipelines

Key Features:

- Manual and automated test case management
- Real-time run results with heatmaps
- Flaky test auto-tagging from run history
- BDD/Gherkin support with living docs
- CI/CD triggered test execution

Pros

- Clean UI with fast onboarding
- Affordable pricing for small teams
- Good automation framework integrations

Cons

- Limited failure analysis and root cause depth
- Reporting focused on test case status
- No built-in trace viewer or evidence panel

First Hand Experience

Testomat.io organizes manual and automated tests in a clean workspace with folder structures, tags, and run history. It integrates with Playwright, Cypress, and other frameworks through a CLI reporter. Flaky tests get auto-tagged based on run history. For teams that need structured test management with basic run reporting, it covers the fundamentals well.

Pricing & Value

Starts at $30/month with a free tier for small teams.

Final Verdict

Testomat.io is a solid option for teams that need clean test case management with automation sync. For teams focused on failure analysis and Playwright-specific debugging, evaluate whether test case management alone meets your reporting needs.

8. Allure Report

Best for:

Teams that need a free, single-run HTML report to share test results without a managed service.

Platform Type:

Static HTML report generator (open source)

Integrations with:

Playwright, Pytest, JUnit, TestNG, Jest, and more

Key Features:

- Interactive HTML test reports per run
- Framework-agnostic adapters
- Hierarchical suites and test views
- Attachments for logs and screenshots

Pros

- Free and open source
- Clear single-run visualization
- Works across many frameworks

Cons

- Stateless with no persistent history
- No test case management or collaboration
- Operational overhead grows at scale

First Hand Experience

Allure Report converts raw results into interactive HTML for a single run. It is not a test analytics or management platform. Because reports are static files, teams build custom CI steps, storage, and retention logic to keep any form of history. Engineering time for adapters, hosting, and history wiring adds up over time.

Pricing & Value

Software cost is zero. Total cost of ownership grows with pipelines, storage, and maintenance.

Final Verdict

Allure Report works well as a single-run visualizer. Teams that require persistent analytics, test case management, or failure intelligence should evaluate managed platforms that provide these out of the box.

What to look for in a TestMu AI replacement

TestMu AI combines cloud execution with test analytics. The question is whether you need both or just the analytics. If your CI already handles execution, here is what matters in a Playwright test reporting tool.

Reporting depth beyond session summaries

Execution platforms show session-level data: pass/fail, screenshots, video, and logs. Test reporting platforms go deeper with AI failure classification, error grouping, flaky detection with root cause categories, and trend analytics across hundreds of runs.

If your triage process requires clicking through individual sessions, the reporting layer is too shallow. TestMu AI alternatives with better test reporting provide test-level intelligence rather than session-level summaries.
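To show what error grouping means in practice, here is a minimal sketch that groups failures by a normalized error message plus the top stack frame. The `Failure` shape, the normalization rules, and the function names are all assumptions for illustration; no vendor's actual grouping algorithm is described in this article.

```typescript
// Hypothetical failure record for illustration only.
interface Failure {
  test: string;
  message: string;
  stack: string;
}

// Build a grouping key: the message with volatile parts masked,
// plus the first "at ..." frame of the stack trace.
function failureSignature(f: Failure): string {
  const message = f.message
    .replace(/"[^"]*"/g, "<str>") // quoted values such as selectors
    .replace(/\d+/g, "<n>"); // numbers such as timeouts
  let topFrame = "";
  for (const line of f.stack.split("\n")) {
    const trimmed = line.trim();
    if (trimmed.indexOf("at ") === 0) {
      topFrame = trimmed;
      break;
    }
  }
  return message + " @ " + topFrame;
}

// Map each signature to the names of the tests that failed with it.
function groupFailures(failures: Failure[]): Record<string, string[]> {
  const groups: Record<string, string[]> = {};
  for (const f of failures) {
    const key = failureSignature(f);
    const bucket = groups[key] || (groups[key] = []);
    bucket.push(f.test);
  }
  return groups;
}

const exampleFailures: Failure[] = [
  {
    test: "login",
    message: 'Timeout 5000ms exceeded waiting for "#submit"',
    stack: "Error: Timeout\n    at waitForReady (helpers.ts:8:3)\n    at login (auth.spec.ts:12:5)",
  },
  {
    test: "settings",
    message: 'Timeout 30000ms exceeded waiting for "#save"',
    stack: "Error: Timeout\n    at waitForReady (helpers.ts:8:3)\n    at save (settings.spec.ts:30:7)",
  },
  {
    test: "checkout",
    message: "expect(received).toBe(expected)",
    stack: "Error: expect\n    at checkout (cart.spec.ts:40:9)",
  },
];

console.log(groupFailures(exampleFailures));
```

With this example data, the two timeout failures that pass through the same helper collapse into one group, so triage starts from two distinct problems instead of three separate sessions.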

Test management without a separate subscription

If your reporting tool does not include test case management, you need a separate tool for tracking manual test cases, ownership, and coverage. That means maintaining two subscriptions, two interfaces, and an integration layer between them.

Platforms that integrate test management with analytics reduce overhead and provide a single source of truth. For teams evaluating the best TestMu AI alternatives for CI/CD integration, the fewer tools in your stack, the less maintenance you carry.

Playwright-specific debugging

Session recordings work for any framework. Playwright teams also need trace viewing with DOM state per action, console log capture per test, and network request timelines.

Generic execution platforms provide visual regression testing and recordings. Playwright-native platforms provide the debugging depth needed to reduce time-to-fix. TestMu AI alternatives with built-in analytics should include a trace viewer and error grouping, not just pass/fail dashboards.

CI/CD integration that works with your existing infrastructure

If your tests already run on GitHub Actions, GitLab CI, or Azure DevOps, you should not need a separate execution platform to get test analytics. Look for reporting tools that integrate with your existing CI/CD pipeline and provide value from the first run without changing your infrastructure.

This is the core distinction between cloud testing platform alternatives that bundle execution with analytics and reporting platforms that focus purely on intelligence.

Pricing that reflects what you actually use

Execution platforms charge for browser minutes, parallel test execution, and infrastructure access. TestMu AI pricing starts at $159/month (billed annually) for the full platform. If you only need analytics, you are paying for capabilities you do not use.

Flat monthly pricing for reporting and analytics lets you budget for the features that matter without subsidizing an execution grid. For cheaper alternatives to TestMu AI for small teams, compare reporting-only pricing against bundled execution pricing.

Wrapping Up

TestMu AI provides a cloud-based execution grid with test analytics and flaky-detection capabilities as part of the platform. For teams that need cross-browser cloud execution, the analytics add value alongside the infrastructure.

BrowserStack Test Reporting offers multi-framework analytics at $299/month. Datadog adds test visibility to system monitoring. ReportPortal provides self-hosted ML-based reporting. Currents streams Playwright runs in real time. Allure TestOps targets structured QA processes. Testomat.io offers clean test management. Allure Report generates free single-run HTML.

For Playwright-first teams that want AI failure classification, test management, flaky detection, and CI/CD optimization without paying for execution infrastructure, TestDino combines test intelligence, management, and reporting at $39/month billed annually.
