Deep Dive into Playwright CLI: Token Efficient Browser Automation

Learn how Playwright CLI powers token-efficient browser automation, reducing overhead while delivering fast, reliable, and scalable end-to-end testing.

I have been playing with Claude Code lately, and something interesting has changed in the Playwright ecosystem. There’s now an AI-native command line: playwright-cli.

Unlike traditional tooling or MCP servers, this CLI is built specifically for coding agents that need to drive real browsers without overwhelming their context window or burning through token budgets (a huge concern with MCP servers).

Instead of streaming entire DOMs, accessibility trees, and verbose logs back to the model at every step, it sends minimal, structured signals (snapshots, element references, and YAML flows) that agents can reliably replay and convert into tests.

In this guide, I'll show how to use playwright-cli as the control surface for AI agents to explore applications, automate realistic user journeys, and promote those flows into clean, maintainable Playwright tests and CI pipelines.

What is the Playwright CLI?

The Playwright CLI is the command-line interface that ships with Playwright. It handles test execution, browser installation, code generation, debugging, and more. Most teams interact with Playwright through this CLI rather than writing custom scripts.

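But here's something that trips people up: there are actually two different CLIs you might encounter:

- The standard Playwright test CLI (npx playwright), which ships with Playwright

- The newer Microsoft playwright-cli, built for AI coding agents

They serve different purposes. Let's break them down.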

The standard Playwright test CLI

This is the CLI you'll use most of the time. Nearly every Playwright project relies on these commands.

Installation and setup

# Scaffold a new Playwright project (config, example tests, browsers)

npm init playwright@latest

# Or add the test runner to an existing project

npm install -D @playwright/test

# Download the browser binaries

npx playwright install

# Also install the OS-level dependencies (useful on CI)

npx playwright install --with-deps

Running tests

The npx playwright test command is your workhorse:

# Run all tests

npx playwright test

# Run a single test file

npx playwright test tests/login.spec.ts

# Run tests in a directory

npx playwright test tests/e2e/

# Run tests by title

npx playwright test -g "should add item to cart"

Browser and project selection

# Run in headed mode (see the browser)

npx playwright test --headed

# Run in a specific browser

npx playwright test --project=chromium

npx playwright test --project=firefox

npx playwright test --project=webkit

# Run in all browsers

npx playwright test --browser=all

# Run multiple projects

npx playwright test --project=chromium --project=firefox

Reporting and output

# Use a specific reporter

npx playwright test --reporter=dot

npx playwright test --reporter=html

# Multiple reporters

npx playwright test --reporter=list,html

# Upload results to TestDino for AI-powered analysis

npx tdpw upload ./playwright-report --token="YOUR_API_KEY"

That last command sends your Playwright results to TestDino, where AI categorizes failures and tracks patterns across runs. That is more useful than staring at raw HTML reports once you have more than 50 e2e tests.
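If you switch reporters depending on where the suite runs, a small wrapper keeps that choice in one place. This is only a sketch: it assumes your CI system exports a CI variable (most do), and the function names choose_reporter and run_tests are made up for illustration.

```shell
# Pick reporters based on where the run happens.
# Assumption: the CI system exports a non-empty CI variable.
choose_reporter() {
  if [ -n "${CI:-}" ]; then
    echo "dot,html"   # terse console output plus an HTML report to archive
  else
    echo "list"       # one readable line per test for local runs
  fi
}

# Hypothetical wrapper: forwards any extra arguments to the test runner.
run_tests() {
  npx playwright test --reporter="$(choose_reporter)" "$@"
}
```

After sourcing this, run_tests --project=chromium behaves like the plain command with the reporter filled in for you.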

The new Microsoft playwright-cli

Here's where things get interesting. Microsoft released a separate CLI tool specifically designed for AI coding agents. You can find it on GitHub at microsoft/playwright-cli.

Why a separate CLI?

MCP browser tools have a core issue: the server controls what enters your context. With Playwright MCP, every response includes the full accessibility tree and console messages. After a few page queries, your context window fills up, causing degraded performance, lost context, and higher costs.

Modern coding agents (Claude Code, GitHub Copilot, etc.) increasingly prefer CLI-based workflows. The reasoning is simple: CLI invocations are more token-efficient than MCP (Model Context Protocol) because they avoid loading large tool schemas and verbose accessibility trees into the model context.

This makes the CLI better suited for high-throughput coding agents that must balance browser automation with large codebases, tests, and reasoning within limited context windows.

Installation

npm install -g @playwright/cli@latest

playwright-cli --help

Core commands

The playwright-cli gives AI agents (or you) a way to control browsers interactively:

# Open a URL

playwright-cli open https://xyz.com/ --headed

# Type text

playwright-cli type "Hello World"

# Press keys

playwright-cli press Enter

# Click an element (using ref from snapshot)

playwright-cli click e21

# Fill a form field

playwright-cli fill e15 "user@example.com"

# Take a screenshot

playwright-cli screenshot

# Get page snapshot for element references

playwright-cli snapshot

# Close the browser

playwright-cli close

A practical playwright-cli example (End-to-End)

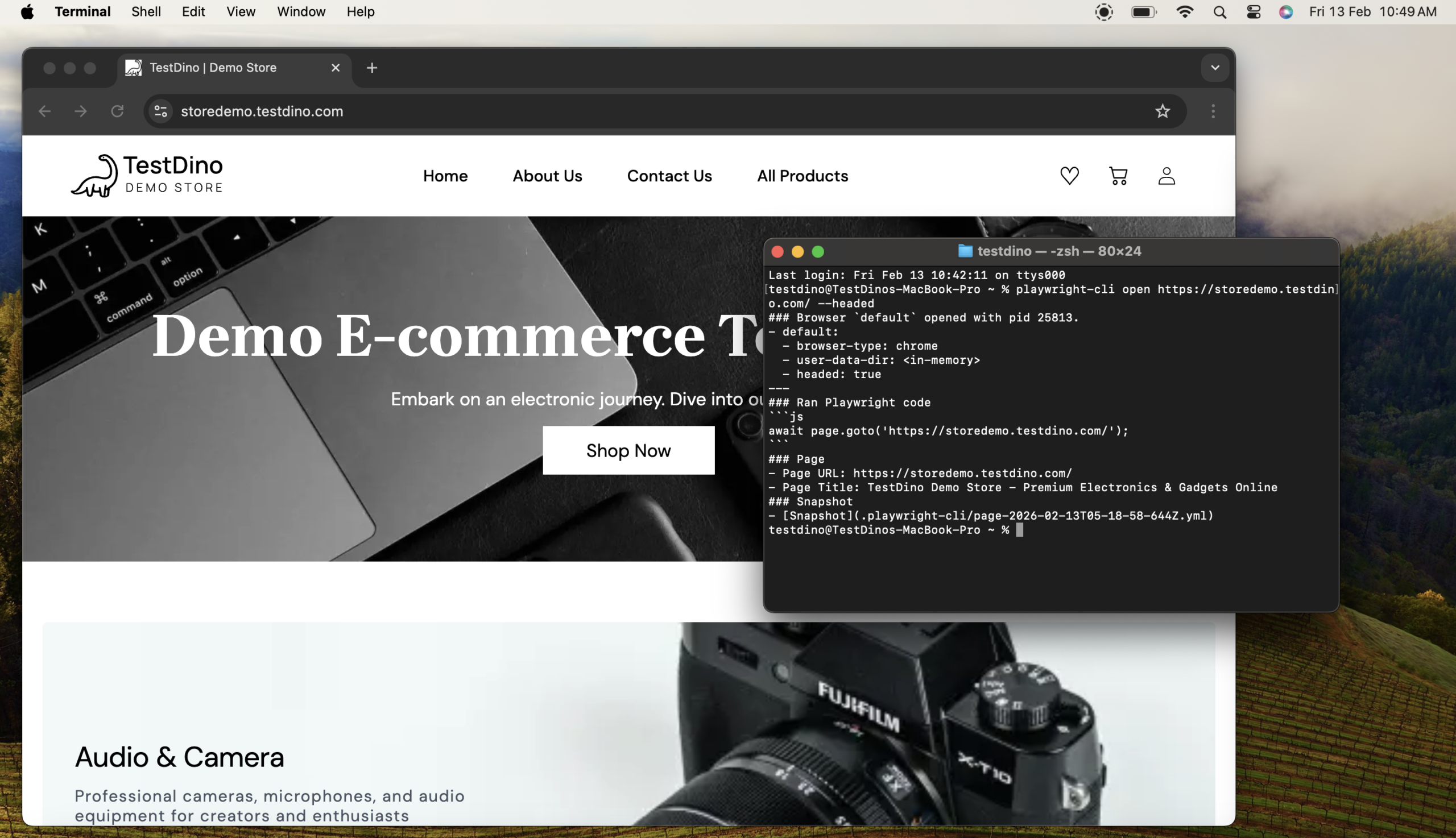

To understand how the Microsoft playwright-cli works in practice, let’s walk through a simple but realistic shopping flow. This example shows how a developer can interact with a browser using minimal context and highly deterministic commands.

# Open the demo store in a visible (headed) browser

playwright-cli open https://storedemo.testdino.com/ --headed

# Capture the current page state and generate element reference IDs

playwright-cli snapshot

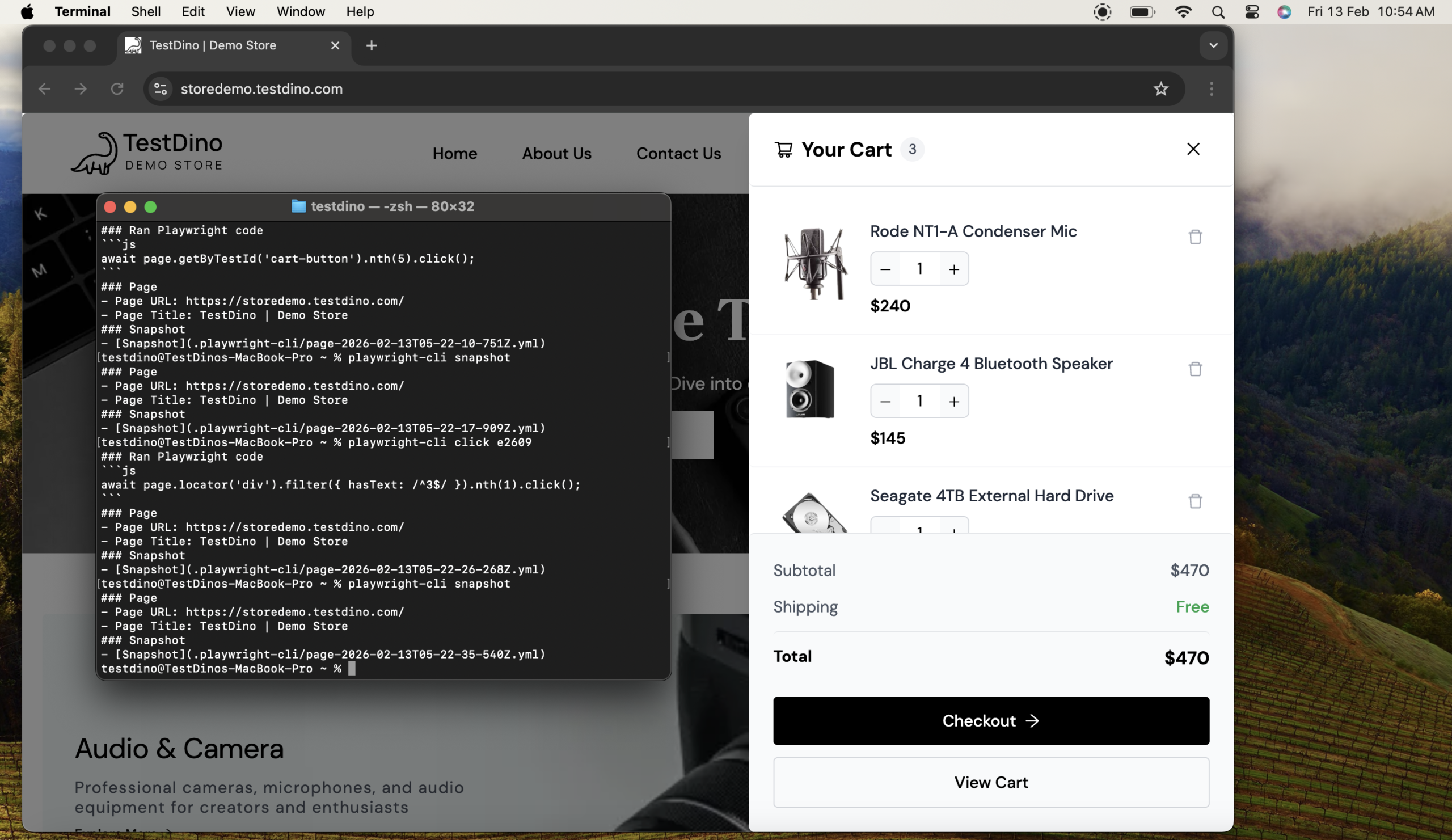

# Click on Product 1 using its reference ID from the snapshot

playwright-cli click e255

# Click on Product 2 using its reference ID

playwright-cli click e291

# Click on Product 3 using its reference ID

playwright-cli click e327

# Take another snapshot because the page state has changed (cart updated)

playwright-cli snapshot

# Click the Checkout tab using the latest reference ID

playwright-cli click e2609

# Final snapshot to confirm navigation to checkout and capture new elements

playwright-cli snapshot

# Close the browser

playwright-cli close

What’s happening here:

- open launches the site in a visible browser so actions can be observed.

- snapshot captures the current page and assigns compact element references like e2609.

- click interacts with elements using those references instead of selectors.

- Additional snapshots are taken only when the page state changes, keeping interactions reliable and token-efficient.
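Because refs change after every snapshot, a script (or an agent) usually has to look up the fresh ref before each click. Here is a rough sketch of that lookup. It assumes you have the snapshot text saved in a file and that each element line carries its ref in the form [ref=e2609]; check the real snapshot output before relying on that pattern. The find_ref helper is hypothetical, not part of playwright-cli.

```shell
# find_ref LABEL FILE
# Print the ref from the first snapshot line that mentions LABEL.
# Assumed line shape:  - button "Checkout" [ref=e2609]
find_ref() {
  grep -F -- "$1" "$2" \
    | sed -n 's/.*\[ref=\(e[0-9][0-9]*\)].*/\1/p' \
    | head -n 1
}
```

With a saved snapshot (say, a hypothetical snapshot.yml), the checkout click becomes playwright-cli click "$(find_ref 'Checkout' snapshot.yml)" instead of a hard-coded e2609.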

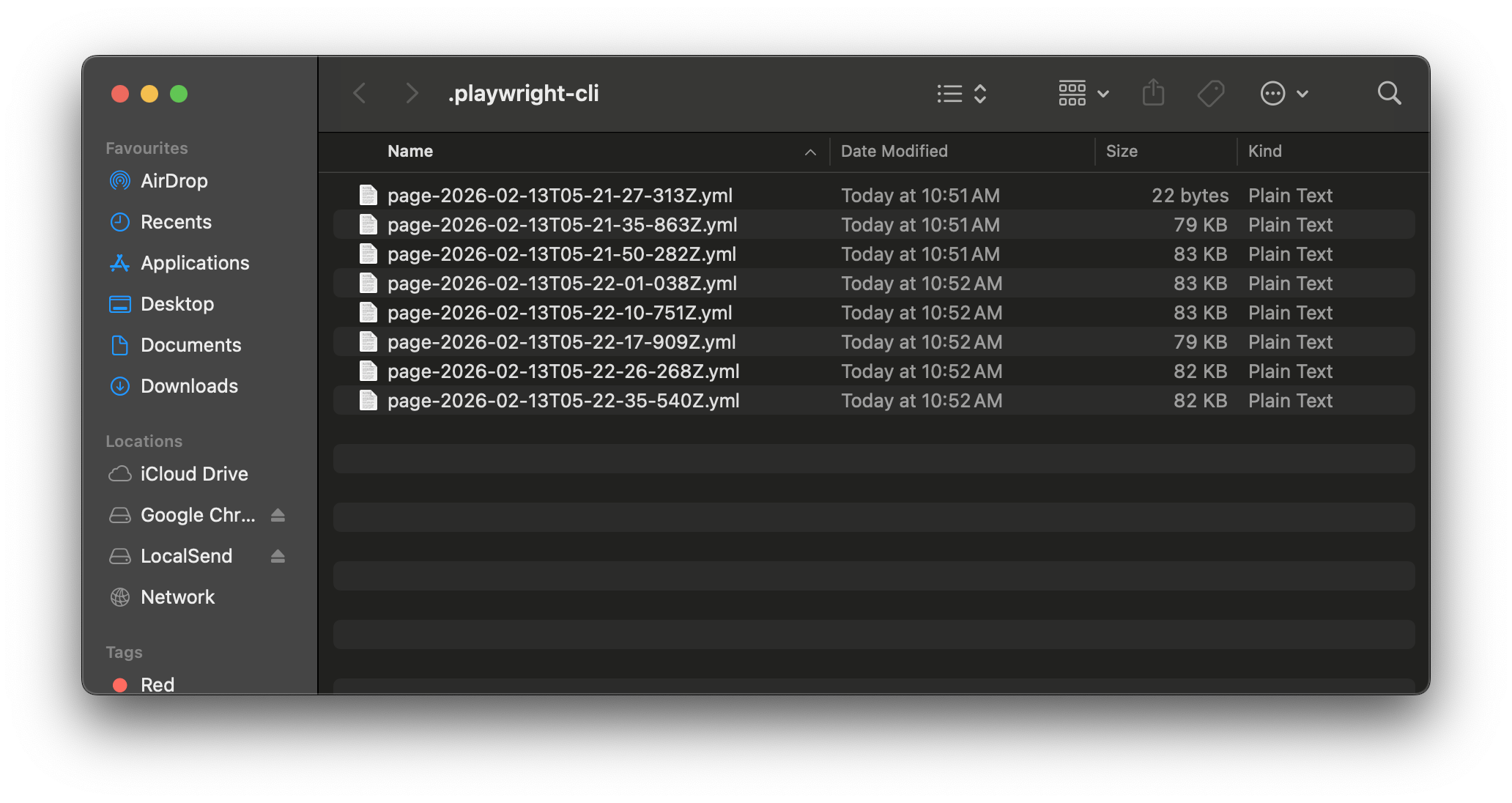

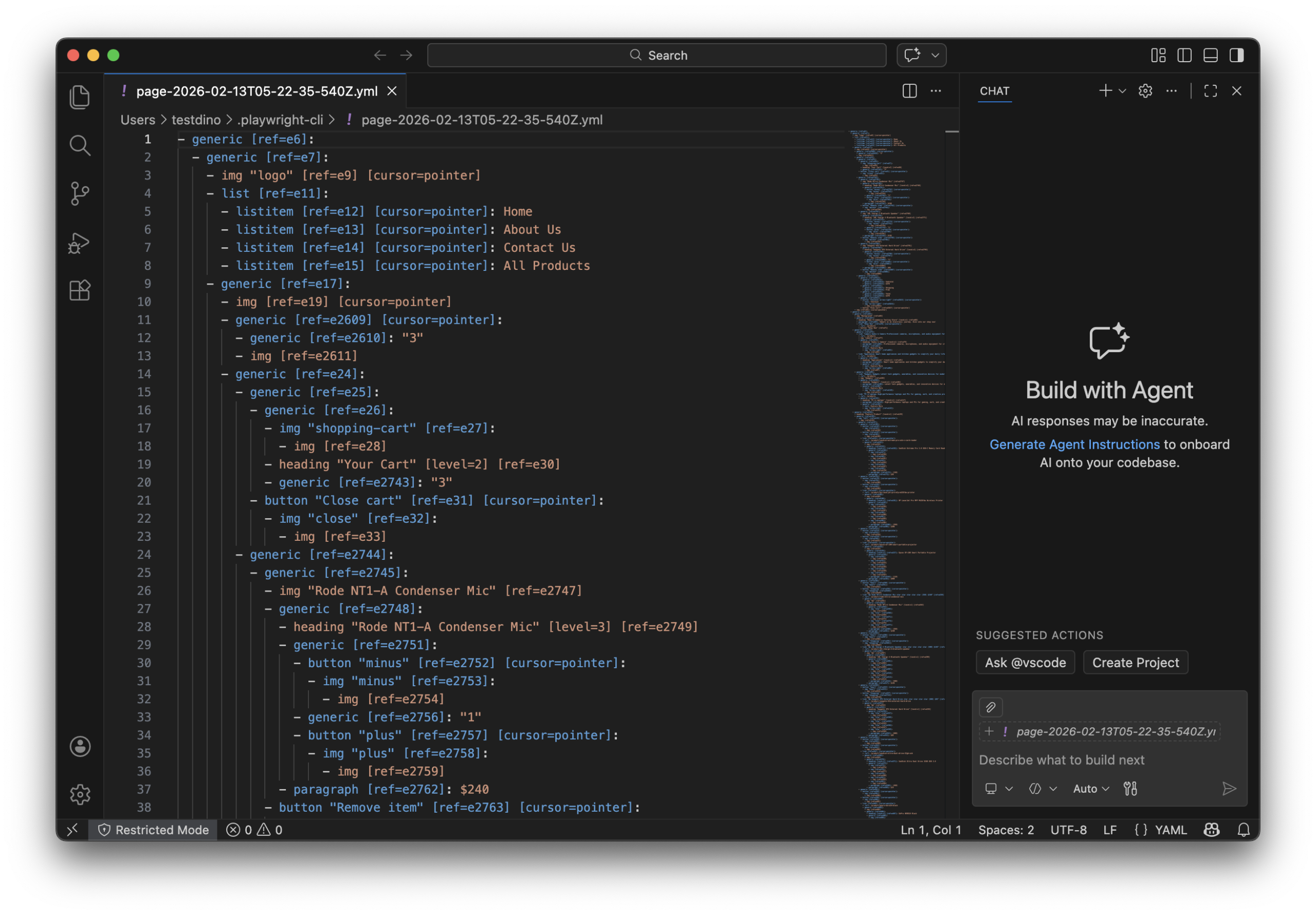

Where the YAML file comes in

As these playwright-cli commands run, the tool automatically records every interaction into a YAML file in the background.

This YAML file captures:

- The exact sequence of actions such as open, snapshot, and click

- The element reference IDs produced by each snapshot

- The page transitions between steps

Because the YAML is structured and deterministic, it can be:

- Replayed to reproduce the same browser flow

- Transformed into Playwright test code later

- Used by AI agents without re-scanning or reloading the page

In practice, this means the browser is explored once, interactions are logged cleanly, and automation can be reused or generated reliably without repeatedly consuming tokens or rebuilding context.

Why Microsoft built a separate playwright-cli

The new Microsoft playwright-cli exists for a very specific reason: token efficiency.

Traditional browser automation via MCP (Model Context Protocol) tools sends a massive amount of data back to the model on every interaction:

- Full accessibility trees

- Console logs

- Page structure metadata

- Tool schemas

After just a few interactions, the model’s context window fills up. The result?

- Slower reasoning

- Lost prior context

- Higher token costs

- Reduced reliability in longer sessions

The Microsoft playwright-cli flips this model.

Instead of pushing the entire browser state into the model’s context, the CLI keeps the browser state external and only returns exactly what’s needed.

This design is intentional and critical for modern AI-assisted development.

Token efficiency: CLI vs MCP (Why it matters)

To understand why the new Microsoft playwright-cli exists, you need to look at how browser control usually works for AI agents and where it breaks down.

MCP-based browser control

In an MCP-driven setup, every browser interaction sends a large amount of information back to the model.

For something as simple as:

"Click the login button"

The model typically receives:

- A full page snapshot

- The complete accessibility tree

- Console output

- Detailed element metadata

While this data can be useful, it comes at a cost. Each response may consume thousands of tokens, even when the action itself is trivial. After a few interactions, the model’s context window starts filling up with browser state instead of actual reasoning, code, or test logic.

Over time, this leads to:

- Higher token usage and cost

- Slower responses

- Loss of earlier context

- Reduced reliability in longer sessions

This approach works for short-lived interactions, but it doesn’t scale well for sustained AI-driven workflows.

playwright-cli browser control

The Microsoft playwright-cli takes a fundamentally different approach.

Instead of pushing the entire browser state into the model’s context, it keeps the browser external and only exchanges minimal, structured information.

A typical interaction looks like this:

- The agent requests a snapshot:

playwright-cli snapshot

- The CLI returns a concise list of element references (for example: e15, e21) rather than a full DOM or accessibility tree.

- The agent performs a precise action:

playwright-cli click e21

That’s it.

No DOM dump. No accessibility tree spam. No unnecessary metadata.

Just intent → action, with the smallest possible token footprint.

Why this difference is so important

By dramatically reducing what gets sent back to the model, playwright-cli allows AI agents to:

- Run longer browser sessions without losing context

- Allocate tokens to reasoning and code instead of UI noise

- Operate faster and more predictably

- Scale browser automation alongside large codebases

This token-efficient design is what makes the CLI especially well-suited for modern AI coding agents, where context windows are valuable and expensive.
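To make the difference concrete, here is a back-of-the-envelope calculation. The numbers are purely illustrative assumptions, not measurements: a 30-step session, a 200,000-token context window, roughly 4,000 tokens per verbose response versus roughly 200 per compact one.

```shell
# context_used_pct TOKENS_PER_STEP STEPS WINDOW
# Prints the share of the context window (whole percent, rounded down)
# taken up by browser output alone.
context_used_pct() {
  echo $(( $1 * $2 * 100 / $3 ))
}

# Illustrative inputs only; substitute figures from your own sessions.
context_used_pct 4000 30 200000   # verbose responses: prints 60
context_used_pct 200 30 200000    # compact responses: prints 3
```

Under those assumptions, the verbose session spends well over half the window on browser state, while the compact one leaves almost all of it for code and reasoning.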

CLI vs MCP: A clear comparison

| Feature | MCP Browser Tools | Microsoft playwright-cli |
|---|---|---|
| Token usage per action | Very high | Minimal |
| Accessibility tree | Always included | Not included |
| Context window pressure | High | Low |
| Suitable for long sessions | ❌ | ✅ |
| Designed for AI agents | ⚠️ Partial | ✅ Yes |
| Human debugging UX | Good | Decent |
| Deterministic actions | Medium | High |
| Cost efficiency | Poor at scale | Excellent |

When should you use each?

This isn’t an “either/or” decision. Each tool fits a different workflow.

Use the standard Playwright CLI when:

- You’re writing or debugging tests as a human

- You need rich reports (HTML, traces, videos)

- You rely on Playwright Inspector and codegen

- You’re integrating with CI/CD pipelines

- You want tools like TestDino to analyze failures over time

Use Microsoft playwright-cli when:

- An AI agent is driving browser actions

- You’re running long reasoning sessions

- Token cost and context limits matter

- You need deterministic, low-noise interactions

- You want scalable automation without context collapse

How test reporting fits into this workflow

No matter how tests are written, generated, or explored, test results are where real engineering decisions happen. Execution alone is not enough: teams need to understand why tests fail, whether failures are real regressions, and which issues deserve immediate attention.

This becomes especially important as Playwright test suites grow.

The limits of default Playwright reports

The standard Playwright CLI already provides solid reporting options, including HTML reports, traces, screenshots, and videos. These are extremely useful when you’re debugging a single failure locally.

However, once a suite grows beyond a few dozen end-to-end tests, teams often run into the same problems:

- HTML reports become noisy and time-consuming to review

- Repeated failures look identical but may have different root causes

- Flaky tests hide real regressions

- CI pipelines surface what failed, but not why it failed

At this stage, test execution is no longer the bottleneck. Failure triage is.

Where TestDino adds value

This is where tools like TestDino fit naturally into the Playwright workflow.

After running tests with the standard Playwright CLI, you upload the results:

npx tdpw upload ./playwright-report --token="YOUR_API_KEY"

Instead of presenting another static report, TestDino analyzes results across runs and applies AI-driven categorization to surface patterns that humans usually miss.

Specifically, it helps teams:

- Group failures by root cause, not just by test name

- Detect flaky behavior across multiple executions

- Highlight new regressions versus known, recurring issues

- Reduce time spent manually triaging CI failures

This is especially valuable in CI environments where developers may not have immediate access to local traces or full context.
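In a CI job, the results matter most when the run fails, so the upload should still happen after a failing test run, and the job should still report the original test status. A minimal sketch of that pattern follows. The run_and_upload name and the TESTDINO_TOKEN variable are assumptions for illustration; the two commands are the ones shown earlier in this article.

```shell
# Run the suite, upload the report either way, then return the
# test runner's exit code so CI still marks failures as failures.
# Assumption: the API key is provided in TESTDINO_TOKEN.
run_and_upload() {
  rc=0
  npx playwright test --reporter=html || rc=$?

  # An upload problem is reported but does not mask the test result.
  npx tdpw upload ./playwright-report --token="$TESTDINO_TOKEN" \
    || echo "warning: report upload failed" >&2

  return "$rc"
}
```

Calling run_and_upload as the last step of the job keeps the pass/fail signal intact while making sure failed runs are the ones that get analyzed.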

How this complements both Playwright CLIs

The key idea is separation of concerns:

- Execution stays fast and deterministic

- Exploration stays lightweight and token-efficient

- Analysis becomes scalable and automated

This layered approach is what allows teams to move from running tests to actually learning from them, without drowning in raw data.

Conclusion

The Playwright ecosystem now offers two powerful but very different CLI experiences, each built for a specific purpose.

The standard Playwright Test CLI remains the foundation for writing, running, and debugging automated tests. It excels at test execution, rich debugging artifacts, and CI integration. This is where most teams will continue to spend the majority of their time.

The newer Microsoft playwright-cli, on the other hand, represents a shift toward AI-native tooling. By prioritizing token efficiency and minimal context exchange, it enables long-running and reliable browser interactions for modern AI coding agents.

When used together with intelligent reporting layered on top, these tools form a scalable workflow that supports fast execution, efficient exploration, and meaningful analysis. The key is not choosing one over the other, but understanding when each tool fits best.

FAQs

What is the Playwright CLI used for?

The Playwright CLI is used to run tests, install browsers, generate code, debug failures, and produce reports. It’s the primary interface developers use to interact with Playwright.

What is the difference between npx playwright and playwright-cli?

npx playwright is designed for test execution and human debugging, while playwright-cli is built for token-efficient browser control, mainly targeting AI coding agents and automated workflows.

Does playwright-cli replace the Playwright Test CLI?

No. They solve different problems. The Playwright Test CLI is still essential for running tests and CI, while playwright-cli is best for AI-driven exploration and code generation.

Why does token efficiency matter for AI agents?

AI models have limited context windows. Sending full DOMs and accessibility trees wastes tokens, reduces reasoning space, and increases cost. Token-efficient CLIs avoid this problem.

Can I use both CLIs together?

Yes, and that’s often the best approach. Use the Playwright Test CLI for execution and reporting, and playwright-cli for lightweight, interactive browser control.

Is playwright-cli useful without an AI agent?

Yes, especially for quick inspection, screenshots, and selector exploration. However, its biggest advantage shows up in AI-assisted and automated coding workflows.
